The Election Deepfakes Of India, USA, Slovakia, London And Taiwan: A Threat to Democracy

Why it matters:

- AI-generated audio was used to disenfranchise U.S. voters in New Hampshire, exposing vulnerabilities in telecommunications infrastructure and the accessibility of deepfake technology.

- Political consultant Steve Kramer orchestrated the scheme to prompt stricter regulations on AI in politics, highlighting the need for updated laws to address synthetic media manipulation.

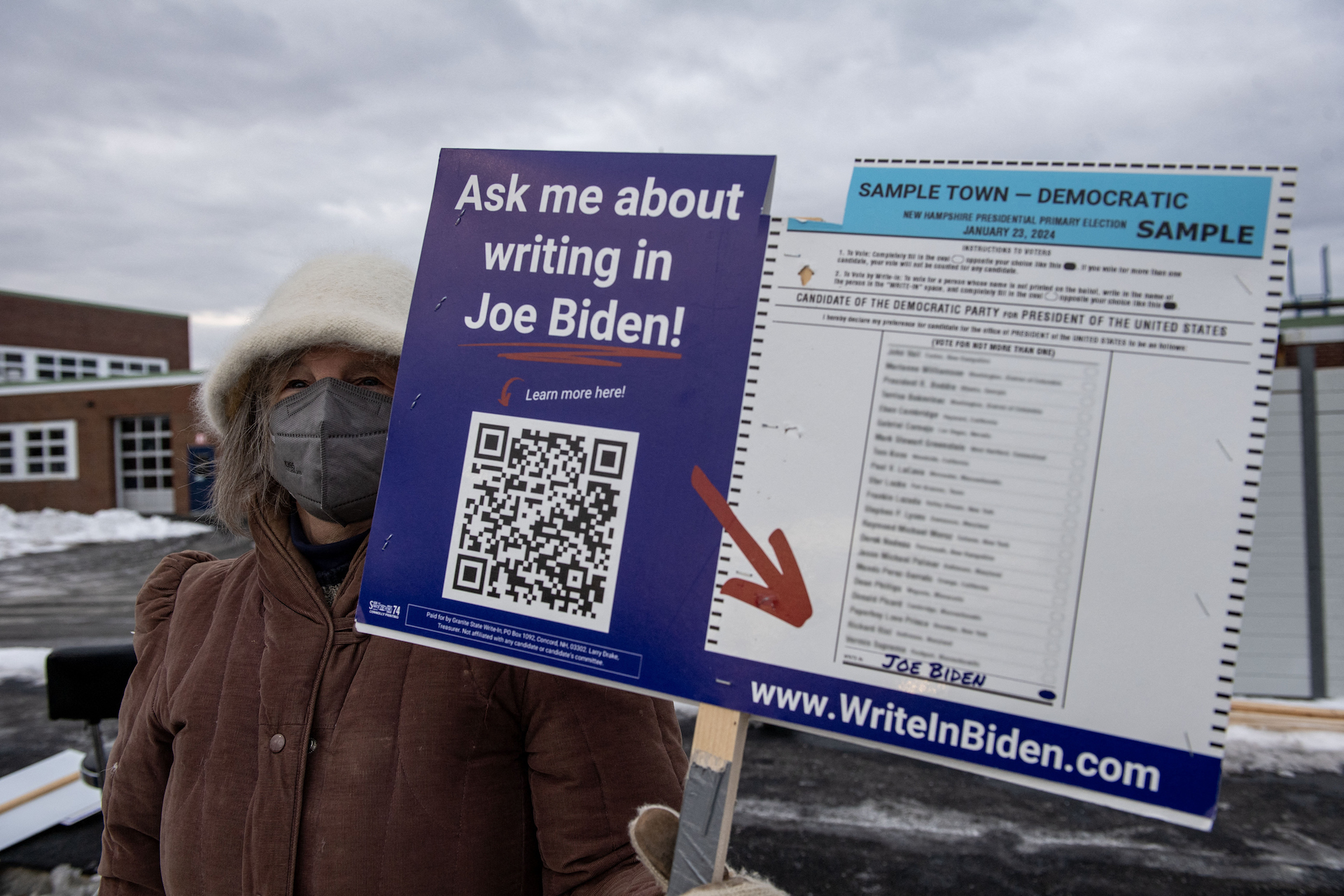

On January 21, 2024, residents of New Hampshire received a phone call that marked a permanent shift in American political warfare and the use of election deepfakes. The caller ID displayed the personal cell phone number of Kathy Sullivan, a former chair of the state Democratic Party. When voters answered, they heard the familiar, halting cadence of President Joe Biden. The voice used his signature vernacular, opening with “What a bunch of malarkey,” before delivering a message designed to suppress turnout in the upcoming primary election.

The audio instructed voters to “save” their vote for the November general election. It falsely claimed that voting in the primary would preclude them from casting a ballot in the fall. This event was not a prank. It was the first confirmed instance of AI-generated audio being deployed to disenfranchise U.S. voters. The operation targeted between 5,000 and 25,000 individuals just two days before the polls opened. The incident exposed the fragility of the nation’s telecommunications infrastructure and the terrifying accessibility of deepfake technology.

Federal investigators quickly traced the source of the calls. The trail led to Steve Kramer, a political consultant working for the presidential campaign of Representative Dean Phillips. While the Phillips campaign denied any knowledge of the operation, Kramer later admitted to orchestrating the scheme. His objective was to test the regulatory environment and, paradoxically, to prompt stricter regulations on AI in politics. The method he employed was worryingly simple and inexpensive.

Kramer did not require a team of software engineers or a sophisticated studio. He hired Paul Carpenter, a New Orleans-based magician and illusionist, to create the fake audio. Carpenter, who holds world records for straitjacket escapes, received a payment of $150 via Venmo for the job. Using software from the startup ElevenLabs, Carpenter generated the clone of President Biden’s voice in less than 20 minutes. The total cost to create the deceptive audio was approximately one dollar. This gap between the cost of execution and the potential damage to the democratic process stunned regulators.

The transmission of the calls relied on a chain of telecom providers that failed to verify the origin of the traffic. Kramer utilized Life Corporation to initiate the calls, which were then routed through Lingo Telecom. Lingo Telecom assigned the calls an “A-level attestation,” a technical standard meant to signify that the carrier has verified the caller’s identity. In this case, Lingo Telecom failed to perform due diligence. They accepted the traffic based on a prior relationship with Life Corporation. This failure allowed the spoofed number of Kathy Sullivan to appear on thousands of devices, lending the call an air of legitimacy.
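For background, A-level attestation comes from the STIR/SHAKEN caller ID authentication framework, which defines three levels: A (full), B (partial), and C (gateway). The sketch below is a simplified illustration of how an originating carrier is expected to choose a level. The function name and the two boolean inputs are hypothetical simplifications for this article, not part of any real carrier system or of the FCC record.

```python
from enum import Enum


class Attestation(Enum):
    """STIR/SHAKEN attestation levels an originating carrier can assign."""
    FULL = "A"      # caller is a known customer AND authorized to use the number
    PARTIAL = "B"   # caller is a known customer, but number use is unverified
    GATEWAY = "C"   # carrier only knows where it received the call from


def assign_attestation(customer_authenticated: bool,
                       number_authorized: bool) -> Attestation:
    """Pick an attestation level from two (hypothetical) verification checks."""
    if customer_authenticated and number_authorized:
        return Attestation.FULL
    if customer_authenticated:
        return Attestation.PARTIAL
    return Attestation.GATEWAY


# A known customer whose right to use the displayed number was never checked.
level = assign_attestation(customer_authenticated=True, number_authorized=False)
print(level.value)
```

Under this simplified logic, traffic accepted on the strength of a prior business relationship, without confirming the caller's right to display Kathy Sullivan's number, would not qualify for level A.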

The Federal Communications Commission (FCC) responded with speed. The agency leveraged the “Truth in Caller ID Act” to pursue penalties against the entities involved. In September 2024, the FCC finalized a $6 million fine against Steve Kramer for his role in the operation. The commission also reached a settlement with Lingo Telecom. The carrier agreed to pay a $1 million civil penalty and implement a strict compliance plan to prevent future abuses. These fines represented the first major financial enforcement actions against the use of AI in election interference.

New Hampshire authorities also took legal action. Attorney General John Formella charged Kramer with 13 counts of felony voter suppression and 13 misdemeanor counts of impersonation of a candidate. The indictment alleged that Kramer knowingly attempted to deter voters from exercising their rights. The legal proceedings highlighted a serious gap in existing laws. Most statutes were written for an era of robocalls and mailers, not synthetic media that can mimic a president with near-perfect accuracy.

The technical forensics provided by Pindrop, a security firm, were instrumental in the investigation. Pindrop analyzed the audio and identified the specific artifacts left by the ElevenLabs text-to-speech engine. This analysis confirmed the synthetic nature of the voice and helped link the audio file back to the tools used by Carpenter. The incident demonstrated that while detection tools exist, they are currently reactive rather than preventative. The calls had already reached thousands of voters before any analysis could take place.

The table below details the operational hierarchy of the New Hampshire deepfake scandal, illustrating the low barrier to entry for bad actors.

| Role | Entity/Individual | Action | Financial Consequence |
|---|---|---|---|
| Orchestrator | Steve Kramer | Commissioned audio, directed targeting | $6,000,000 FCC fine |
| Creator | Paul Carpenter | Generated AI voice using ElevenLabs | Paid $150 (cost ~$1) |
| Carrier | Lingo Telecom | Transmitted calls with false ID | $1,000,000 settlement |
| Victim | Kathy Sullivan | Phone number spoofed on caller ID | None (reputational harm) |

This scandal served as a proof of concept for election interference in the AI age. It proved that a single operative with a budget of less than $500 could disrupt a federal election and trigger a multi-agency federal investigation. The New Hampshire primary incident was not merely a dirty trick. It was a warning shot. The tools used to deceive voters are commoditized, cheap, and available to anyone with an internet connection.

Slovakia 2023 Election Deepfakes: How Audio Deepfakes Breached the 48-Hour Election Moratorium

On September 28, 2023, just two days before the Slovak parliamentary elections, a coordinated disinformation campaign exploited a specific legal vulnerability in the nation’s electoral system. Voters began receiving audio files through Telegram and Facebook that purported to feature Michal Šimečka, the leader of the liberal Progressive Slovakia party, and Monika Tódová, a prominent journalist from the daily newspaper Denník N. The recordings captured a conversation that never happened. In the fabricated audio, the two voices discussed a plan to rig the election by purchasing votes from the country’s Roma minority. A second clip featured a synthetic voice resembling Šimečka threatening to raise the price of beer, a culturally sensitive commodity in Slovakia, by nearly 100 percent.

The timing of this release was calculated to maximize damage while minimizing the possibility of defense. Slovakia enforces a strict 48-hour moratorium prior to voting. During this silence period, media outlets and politicians are legally prohibited from campaigning or addressing political controversies. The deepfakes circulated while Šimečka and Tódová were barred by law from issuing public denials through standard media channels. This legal blackout created a vacuum of verified information that the AI-generated content filled immediately.

| Party | Leader | Final Vote Share | Exit Poll Projection | Outcome |
|---|---|---|---|---|
| Smer-SD | Robert Fico | 22.94% | ~19-20% | Victory |
| Progressive Slovakia (PS) | Michal Šimečka | 17.96% | ~20-22% | Defeat |
| Hlas-SD | Peter Pellegrini | 14.70% | ~15% | Coalition Partner |

The technical quality of the audio files showed signs of artificial generation yet remained convincing enough to sway undecided voters. AFP fact-checkers later identified the clips as AI-manipulated, noting unnatural phrasing and digital artifacts. Yet the correction came too late. The content had already spread from Telegram channels to thousands of Facebook feeds. The narrative that the pro-Western Progressive Slovakia party intended to manipulate the vote and increase living costs took root in the final hours before the polls opened.

Robert Fico and his Smer-SD party secured 22.94 percent of the vote, defeating Progressive Slovakia, which finished with 17.96 percent. While multiple factors contributed to the result, the incident demonstrated the efficacy of audio deepfakes when paired with institutional constraints like a media moratorium. The inability of the targeted candidate to respond in real time allowed the fabrication to stand as the final piece of information voters consumed before casting their ballots. This event marked the first confirmed instance in a European Union member state where AI-generated audio was deployed as a primary weapon to disrupt the final stage of a national election.

“The deepfake was released just a few days before the election. This led to wonder if AI had influenced the outcome, and contributed to Michal Simecka’s Progressive Slovakia party coming in second.” (The Hacker News, January 2025)

The Slovak case exposes a serious flaw in legacy election laws designed for the print and broadcast era. Moratoriums intended to prevent last-minute mudslinging now serve as a shield for digital disinformation. Bad actors can release synthetic media during these protected windows, knowing that their targets are legally silenced. The incident forces a reevaluation of how democracies manage the final hours of a campaign when the speed of AI generation outpaces the speed of verification.

India 2024: The Resurrection of Deceased Leaders for Political Campaigning

In the lead-up to India’s 2024 general election, the Dravida Munnetra Kazhagam (DMK) party deployed a campaign strategy that dissolved the boundary between history and the present. M. Karunanidhi, the iconic former Chief Minister of Tamil Nadu and DMK patriarch, appeared on large projected screens to address voters. He wore his trademark yellow scarf and dark sunglasses, his head tilted in its familiar stance, and his voice carried its signature rasp. Yet, Karunanidhi had died in 2018. Through high-fidelity deepfake video and synthesized audio, the party resurrected their deceased leader to endorse his son, current Chief Minister M. K. Stalin, and mobilize the party cadre.

This was not a singular anomaly but part of a systematic “battle of the ghosts” in Tamil Nadu politics. The rival All India Anna Dravida Munnetra Kazhagam (AIADMK) countered by resurrecting their own late supremo, J. Jayalalithaa, who passed away in 2016. On February 24, 2024, marking her 76th birth anniversary, the party released an AI-generated audio clip in which Jayalalithaa’s voice urged voters to support Edappadi K. Palaniswami. In the 40-second clip, the synthetic voice recited her famous catchphrase, “I am because of the people, I am for the people,” allowing a dead politician to campaign for a future she would never see.

The scale of this operation signals a booming industry for synthetic media in Indian politics. Industry estimates indicate that political parties funneled approximately $50 million into AI-generated content for the 2024 election cycle. The barrier to entry for this technology has collapsed; creators like Divyendra Singh Jadoun, known as “The Indian Deepfaker,” report charging as little as ₹125,000 ($1,500) for a high-quality deepfake video and ₹60,000 ($720) for an audio clone. This affordability allowed regional parties to deploy sophisticated disinformation and propaganda tools previously available only to state actors.

| Deceased Leader | Party | Year of Death | AI Medium Used | Campaign Objective |
|---|---|---|---|---|
| M. Karunanidhi | DMK | 2018 | Deepfake Video & Audio | Endorse son M. K. Stalin; mobilize youth wing. |
| J. Jayalalithaa | AIADMK | 2016 | Synthesized Audio | Support successor Edappadi K. Palaniswami. |
| H. Vasanthakumar | Congress | 2020 | Deepfake Video | Campaign for son Vijay Vasanth (2021/2024). |

The ethical implications of these “ghost campaigns” are severe. Unlike the New Hampshire robocalls, which sought to suppress votes through deception, these resurrections exploit emotional nostalgia to manipulate voter sentiment. They ascribe specific, contemporary political opinions to individuals who cannot consent or refute them. For instance, the AI avatar of Karunanidhi specifically praised the “Dravidian model” of governance implemented years after his death, rewriting his political legacy to suit current administrative needs.

This phenomenon introduces a unique threat to democratic integrity: the inability of the electorate to distinguish between a leader’s historical record and their fabricated present-day endorsements. When dead leaders can be programmed to say anything, the historical record becomes malleable, and the credibility of political communication erodes further. The Election Commission of India issued advisories against the misuse of AI to spread misinformation, yet the use of “authorized” deepfakes by parties to resurrect their own leaders falls into a regulatory gray area, leaving voters to navigate a landscape where even death is no longer a bar to political ambition.

The Sadiq Khan Audio Fabrication: Weaponizing Artificial Intelligence in the UK

The Armistice Day Deception

In November 2023, a sophisticated piece of AI-generated audio targeted London Mayor Sadiq Khan, marking a dangerous escalation in the use of synthetic media to incite civil unrest. Released just days before Armistice Day, a solemn national holiday in the United Kingdom honoring war dead, the fabricated clip purported to show Khan disparaging the commemoration in favor of pro-Palestinian protests. The timing was calculated to exploit heightened tensions surrounding the Israel-Gaza conflict, with the specific intent of mobilizing far-right groups against the Mayor’s office.

The audio, which circulated rapidly on TikTok and X (formerly Twitter), featured a voice mimicking Khan’s London accent and cadence. In the recording, the fake Khan was heard saying, “I don’t give a flying s*** about the Remembrance weekend,” and claiming that the “paramount” priority was a “one-million-man Palestinian march” scheduled for the same day. The fabrication went further, with the voice asserting, “I control the Met Police, they do as the Mayor of London tells them.” These statements were entirely fictitious. Khan had not made these remarks, nor does the Mayor of London hold operational command over the Metropolitan Police Service.

Anatomy of the Fabrication

Forensic analysis and investigative reporting by the BBC later identified the source of the audio as a social media user operating under the handle “HJB News.” The creator, identified only as “Henry,” claimed the clip was intended as satire. Yet the technical execution suggested a deliberate attempt to deceive. The audio lacked the obvious robotic artifacts common in early deepfakes, though it did contain subtle linguistic errors. Specifically, the reference to a “one-million-man march” was an anachronism; the phrase historically refers to a 1995 civil rights demonstration in Washington, D.C., and was not the terminology used by organizers of the 2023 London protests.

Despite these inconsistencies, the audio achieved immediate viral status. Metrics from X indicate the clip received over 200,000 views within the first hour of its release. By the time debunking efforts gained traction, the audio had been shared hundreds of thousands of times across multiple platforms, frequently within closed WhatsApp groups where fact-checking is virtually impossible. The content acted as a catalyst for real-world action. On November 11, 2023, far-right counter-protesters clashed with police in central London, resulting in dozens of arrests. Khan later stated that the fake audio was a primary driver of this disorder, describing it as a “red rag to a bull.”

Legal Impunity and Police Response

The aftermath of the incident exposed a serious gap in British law regarding AI-generated disinformation. The Metropolitan Police launched an investigation into the source of the audio but concluded that the creation and distribution of the clip did not constitute a criminal offense. Under the Communications Act 2003 and the Public Order Act 1986, the threshold for prosecution requires proof of a threat to kill or intent to cause immediate violence. The fake audio, while inflammatory, fell into a legal gray zone: although it was a fabrication designed to mislead, it did not meet the strict statutory definition of a crime.

This legal paralysis allowed the creator to face no consequences, setting a precedent that political deepfakes could be generated with impunity. The incident demonstrated that existing frameworks, designed for print and broadcast eras, are ill-equipped to handle the speed and scale of algorithmic weaponization.

| Date | Event | Details |
|---|---|---|
| Nov 10, 2023 | Audio Release | Fake clip posted on TikTok/X; gains 200k+ views in the first hour. |
| Nov 11, 2023 | Armistice Day | Far-right groups clash with police; 120+ arrests made. |
| Nov 12, 2023 | Police Review | Met Police announce review of the audio by specialist officers. |
| Nov 13, 2023 | Investigation Closed | Met Police conclude no criminal offense was committed. |
| Feb 14, 2024 | Creator Identified | BBC tracks creator “Henry”; he claims it was “news with humor.” |

Taiwan 2024: Defense Mechanisms Against Cognitive Warfare and AI Disinformation

The January 2024 presidential election in Taiwan served as a global crucible for cognitive warfare, with the island nation operating as a primary testing ground for AI-enhanced disinformation tactics. Unlike the crude social media bot farms of previous cycles, the 2024 campaign featured a sophisticated “whole-of-society” assault involving deepfake audio, generative AI text, and cross-platform coordination designed to erode trust in democratic institutions. Taipei’s response, however, established a new operational standard for digital defense, leveraging a synchronized network of government agencies, civil society fact-checkers, and legal frameworks to neutralize threats before they could alter the electoral outcome.

One of the most significant incidents occurred in August 2023, when a manipulated audio file circulated on social media purporting to feature Taiwan People’s Party (TPP) candidate Ko Wen-je. The 58-second clip, which sounded identical to Ko’s distinctive speech pattern, falsely depicted him criticizing Vice President Lai Ching-te’s visit to the United States. The Taiwan People’s Party immediately denounced the audio as a fabrication. Forensic analysis by the Ministry of Justice Investigation Bureau (MJIB) later confirmed the file was a deepfake, likely generated using voice-cloning technology. This event marked a serious escalation: attackers were no longer just spreading false text but were manufacturing primary source evidence to fabricate scandals.

The volume of these attacks was substantial. Data from the civil society database Cofacts indicated a 40% increase in disinformation reports compared to the previous year. To combat this, the Taiwanese government activated a multi-layered defense grid. The Ministry of Digital Affairs (MODA) deployed AI-driven detection systems capable of inspecting up to 30,000 items of suspicious content daily. By May 2024, this system had flagged over 900,000 potential fraud and disinformation cases, significantly reducing the manual load on human analysts. This technological shield was reinforced by legislative action; in May 2023, Taiwan’s legislature amended the Presidential and Vice Presidential Election and Recall Act, introducing heavy penalties for the dissemination of deepfake content and granting authorities the power to demand the removal of verified false media within 48 hours.

Civil society organizations provided the second pillar of Taiwan’s defense. The Taiwan FactCheck Center (TFC) and MyGoPen operated as rapid-response units, debunking viral hoaxes within hours of their appearance. A notable success involved a deepfake video of Democratic Progressive Party (DPP) candidate Lai Ching-te, which had been manipulated to make him appear to support a political alliance he actually opposed. Fact-checkers quickly located the original footage, created side-by-side comparisons, and distributed the debunking reports across LINE groups and Facebook, killing the rumor’s momentum. Public trust in these non-governmental bodies proved important; surveys conducted after the election showed that 74% of Taiwanese citizens were aware of these organizations, and over 70% trusted their verification over anonymous online sources.

The following table outlines the specific defense mechanisms deployed during the 2024 election cycle, categorizing them by sector and operational function.

| Sector | Entity | Primary Mechanism | Operational Impact |
|---|---|---|---|
| Government | Ministry of Justice Investigation Bureau (MJIB) | Cognitive Warfare Research Center | Forensic analysis of deepfake audio/video; confirmed Ko Wen-je audio fabrication. |
| Government | Ministry of Digital Affairs (MODA) | AI-Based Fraud Detection System | Automated inspection of 30,000+ daily cases; flagged 900,000+ items by mid-2024. |
| Legislature | Legislative Yuan | Election Act Amendments (May 2023) | Criminalized deepfake usage in campaigns; mandated 48-hour takedown windows for platforms. |
| Civil Society | Taiwan FactCheck Center (TFC) | Rapid Debunking & Media Literacy | Neutralized “cheap fakes” and deepfakes via viral verification reports on LINE/Facebook. |
| Civil Society | Cofacts | Crowdsourced Verification Database | Processed a 40% surge in user-reported disinformation; provided real-time threat data. |
| Tech Sector | Taiwan AI Labs | Infodemic Platform | Identified coordinated troll group behaviors and non-native manipulation patterns. |

The effectiveness of these measures relied heavily on speed and pre-bunking strategies. Authorities and NGOs frequently released “inoculation” content, warnings about specific types of expected disinformation, before the attacks fully materialized. For instance, when rumors of vote-counting fraud began to circulate on TikTok and YouTube immediately after polls closed, the Central Election Commission (CEC) and fact-checkers were prepared with pre-verified explanations of the counting process. This preemptive action prevented the “Stop the Steal” narrative from gaining the traction seen in other democracies.

Taiwan’s experience demonstrates that legislative bans on deepfakes are insufficient without a proactive, technology-enabled enforcement mechanism. The collaboration between the MJIB’s forensic teams and civil society’s distribution networks created a “nerd immunity” that made the population more resistant to cognitive manipulation. While the attacks were sophisticated, the defense was widespread, proving that democratic resilience against AI warfare requires not just better software but an operational pact between the state and its citizens.

The Liar’s Dividend: Politicians Dismissing Real Scandals as AI Fabrications

The most insidious threat to democratic accountability is not the creation of fake evidence but the dismissal of real evidence. This phenomenon, known as the “Liar’s Dividend,” allows public figures to evade responsibility by claiming that genuine incriminating audio or video is AI-generated. Between 2023 and 2025, this tactic evolved from a theoretical risk into a standard political defense strategy, creating a “zero-trust” environment where objective truth is treated as a matter of opinion.

In January 2024, long-time political operative Roger Stone provided a textbook example of this defense. When Mediaite published an audio recording of Stone discussing the assassination of Democrats Jerry Nadler and Eric Swalwell, Stone did not deny the intent; he attacked the medium itself. He categorically dismissed the recording as “AI manipulation,” a claim he made without providing forensic evidence. This pivot shifted the burden of proof from the accused to the accuser, forcing journalists to prove the negative, that the audio was not synthetic, rather than focusing on the content of the threat.

Former President Donald Trump frequently deployed this strategy throughout the 2024 and 2025 political cycles. In January 2024, after a video circulated showing him struggling to pronounce the word “anonymous” during a speech, Trump claimed on Truth Social that the footage was “AI” generated by his political enemies. He repeated this defense in September 2025, when a viral video showed objects being thrown from a White House window. Despite his own staff confirming to reporters that the footage depicted a contractor performing routine maintenance, Trump publicly insisted the video was “AI-generated,” directly contradicting his administration’s official narrative.

| Date | Public Figure | Incident | Defense Claim | Outcome/Verification |
|---|---|---|---|---|
| April 2023 | Elon Musk (Tesla) | Past statements on Autopilot safety | Lawyers argued statements could be deepfakes to avoid testimony | Judge rejected argument as “deeply troubling” |
| April 2023 | P. Thiaga Rajan (India) | Audio accusing own party of corruption | Claimed clips were “machine-generated” | Forensic analysis suggested one clip was likely authentic |
| Jan 2024 | Roger Stone (USA) | Audio discussing assassination of members of Congress | Dismissed as “AI manipulation” | Source confirmed authenticity to media |
| Sept 2025 | Donald Trump (USA) | Video of debris thrown from White House | Claimed video was “AI-generated” | White House staff confirmed it was real maintenance work |

The strategy is not limited to the United States. In April 2023, Palanivel Thiaga Rajan, the Finance Minister of the Indian state of Tamil Nadu, faced a scandal when audio clips surfaced in which he appeared to accuse his own party, the DMK, of amassing illicit wealth. Rajan released a statement dismissing the clips as “machine-generated” and “deepfake,” citing the ease of cloning voices. While forensic analysis commissioned by news outlets suggested at least one of the clips was authentic, the “deepfake defense” allowed the party to muddy the waters and delay political accountability. The mere existence of the technology provided a shield of plausible deniability that did not exist a decade prior.

Corporate leaders have also attempted to use this ambiguity. In April 2023, lawyers for Tesla CEO Elon Musk argued in court that he should not be compelled to testify about his past statements regarding Autopilot safety because those statements could be deepfakes. Santa Clara County Superior Court Judge Evette Pennypacker rejected the argument, noting that allowing such a defense would allow public figures to “avoid taking ownership of what they did actually say and do.” This legal maneuver signaled a dangerous precedent: if the authenticity of any public record can be challenged, the evidentiary basis of the legal system collapses.

“The danger is not just that people believe lies, but that they disbelieve the truth. When a politician can point to a real video of themselves and say ‘that’s AI,’ and 40% of the population believes them, accountability is dead.”

By late 2025, the line between satire, fabrication, and reality had been deliberately blurred. In the New York City mayoral race of November 2025, former Governor Andrew Cuomo’s campaign utilized AI-generated videos to attack opponent Zohran Mamdani. While these were not “deepfake defenses” in the strict sense, they contributed to an environment where voters could no longer distinguish between documented reality and synthetic fiction. When voters cannot trust their eyes and ears, the “Liar’s Dividend” pays out to the most brazen fabricator.

The Economics of Disinformation: The Collapse of Cost Barriers

The most dangerous shift in the disinformation war is not technological but economic. In 2018, producing a convincing “deepfake” required a Hollywood-level budget, a team of visual effects artists, and weeks of rendering time on server-grade hardware. By 2025, the cost of high-fidelity reality fabrication had plummeted to the price of a latte. This collapse in cost has transformed synthetic media from a tool of state intelligence agencies into a weapon available to any motivated partisan with a debit card.

Market data from 2024 indicates that the financial barrier to entry for voice cloning has evaporated. Services like ElevenLabs offer “starter” tiers for as little as $5 per month, allowing users to clone a voice with near-perfect accuracy using less than one minute of reference audio. In 2019, this process required hours of clean studio recordings and thousands of dollars in compute time. Today, a 30-second clip ripped from a YouTube video is sufficient to train a model that can make a political candidate say anything, in any language, with emotional inflection.

| Metric | 2018 Standard | 2025 Reality |
|---|---|---|
| Voice Training Data | 10+ hours of studio audio | 3 to 10 seconds of noisy audio |
| Production Cost | $10,000+ (Specialized VFX) | $0 to $30 (Consumer SaaS) |
| Hardware Required | Data Center / Render Farm | Gaming Laptop (RTX 3060) or Cloud |
| Technical Skill | PhD / Senior Engineer | Basic Literacy |

The New Hampshire robocall incident serves as the definitive case study for this new economic reality. Political consultant Steve Kramer paid a New Orleans magician a mere $150 to create the fraudulent audio recording of President Biden. The AI generation itself likely cost less than $1 in compute credits. This $151 investment triggered a multi-state investigation, a Federal Communications Commission fine of $6 million, and a nationwide crisis of confidence in electoral integrity. The return on investment for chaos agents is astronomically high.
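The asymmetry in these figures can be made concrete with simple arithmetic. The sketch below uses only numbers reported in this article: the $150 payment, the roughly $1 generation cost, the $6 million fine, and the 5,000 to 25,000 targeted voters. It ignores call transmission costs, which are not stated here, so the per-recipient figures should be read as a lower bound on the audio's cost only; the variable and function names are illustrative.

```python
# Figures taken from the article; call transmission costs are NOT included.
CREATION_COST_USD = 150 + 1          # payment to creator + ~$1 generation cost
FCC_FINE_USD = 6_000_000             # fine finalized against Kramer
TARGETED_LOW, TARGETED_HIGH = 5_000, 25_000  # estimated range of recipients


def cost_per_recipient(total_cost: float, recipients: int) -> float:
    """Audio creation cost spread across the people who received the call."""
    return total_cost / recipients


# How many times larger the penalty was than the cost of making the audio.
fine_to_cost_ratio = FCC_FINE_USD / CREATION_COST_USD

# Per-recipient cost at each end of the estimated targeting range.
cost_if_few = cost_per_recipient(CREATION_COST_USD, TARGETED_LOW)
cost_if_many = cost_per_recipient(CREATION_COST_USD, TARGETED_HIGH)

print(f"Fine is about {fine_to_cost_ratio:,.0f}x the creation cost")
print(f"Creation cost per recipient: ${cost_if_many:.4f} to ${cost_if_few:.4f}")
```

Even at the low end of the targeting estimate, the audio cost about three cents per recipient to produce, while the eventual penalty was tens of thousands of times larger than the outlay.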

Underground markets have mirrored this commodification. Reports from 2025 show that “Deepfake-as-a-Service” is a staple on the dark web. A “synthetic identity kit,” comprising a cloned voice, an AI-generated face, and supporting fake documentation, sells for approximately $5. Custom video creation services, once the domain of elite hackers, are advertised for $50 to $200 per minute. This “democratization” of fraud means that local mayoral races and school board elections are as vulnerable as presidential campaigns. The tools required to destroy a reputation are cheaper than a Netflix subscription.

Hardware requirements have followed the same downward trajectory. In 2020, running a convincing face-swap model required expensive, enterprise-grade GPUs. By 2025, a standard consumer gaming laptop equipped with an NVIDIA RTX 3060 card (approximate retail price $300) possesses enough processing power to generate high-fidelity deepfakes locally. This shift moves the threat vector from centralized, monitorable server farms to millions of unmonitored bedrooms and basements. Open-source software like DeepFaceLab, which powers over 95% of deepfake videos, is free to download, further removing financial friction.

The implications for election security are severe. When the cost of producing a lie approaches zero, the volume of disinformation becomes effectively unlimited. Traditional “troll farms” required salaries and office space. Modern AI agents can generate thousands of unique, voice-cloned robocalls per hour for pennies. The economic asymmetry is absolute: a defender must spend millions to verify and debunk, while an attacker spends pocket change to deceive.

Platform Retreat: The Reduction of Trust and Safety Teams During Global Elections

As the 2024 global election super-cycle approached, the digital infrastructure designed to protect democratic discourse was systematically dismantled. Between 2022 and 2024, major technology companies executed a calculated retreat from election integrity, slashing the very teams responsible for monitoring deepfakes, foreign interference, and coordinated disinformation campaigns. This reduction in force was not a matter of efficiency; it was a capitulation that left the digital public square unguarded during the most consequential voting year in history.

The scale of this retreat is quantifiable. Following Elon Musk’s acquisition of Twitter (now X) in October 2022, the platform reduced its global trust and safety staff by 30%, cutting the headcount from 4,062 to 2,849. More critically, the engineering teams dedicated to building safety tools were gutted by 80%, dropping from 279 engineers to just 55. This decimation of technical capability had immediate consequences: the Australian eSafety Commission reported that X’s response time to user reports of hateful conduct slowed by 20% following the cuts.
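
The headcount figures quoted above can be checked against the stated percentages with a few lines of arithmetic. The sketch below is only a sanity check on the numbers in this article; the function name `percent_reduction` is illustrative and not drawn from any cited report.

```python
def percent_reduction(before: int, after: int) -> float:
    """Return the percentage drop from `before` to `after`."""
    return (before - after) / before * 100


# Headcounts as quoted in the text above.
trust_and_safety = percent_reduction(4062, 2849)
safety_engineers = percent_reduction(279, 55)

print(f"Global trust and safety staff: {trust_and_safety:.1f}% reduction")
print(f"Safety engineering staff: {safety_engineers:.1f}% reduction")
```

Both results round to the 30% and 80% figures cited, so the percentages are consistent with the raw headcounts.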

Meta, the parent company of Facebook and Instagram, followed a similar trajectory. In late 2023, the company laid off approximately 21,000 employees, a move that disproportionately impacted its trust and safety divisions. Internal reports indicated that Meta dissolved the specific engineering team responsible for a tool used by third-party fact-checkers to flag misinformation, blinding its own immune system. By early 2025, Meta initiated another round of performance-based cuts affecting 5% of its workforce, further eroding institutional knowledge.

| Platform | Reduction Metric | Key Impact on Election Integrity |
|---|---|---|
| X (Twitter) | 80% cut to safety engineering staff; 30% cut to global trust & safety. | Response time to hateful conduct reports slowed by 20%; 62,000 suspended accounts reinstated. |
| Meta | ~21,000 total layoffs (2023) with “outsized” impact on safety teams. | Dissolved engineering team for fact-checking tools; removed 17 critical safety policies. |
| YouTube (Google) | Laid off 1/3 of the unit protecting against misinformation. | Reversed policy on removing 2020 election denial content in June 2023. |
| Twitch | Over 900 layoffs (2023-2024), including Responsible AI teams. | Reduced capacity to monitor live-streamed extremist content and deepfakes. |

The consequences of these reductions were not theoretical. They manifested in specific, high-profile failures to contain synthetic media. In January 2024, sexually explicit AI-generated deepfakes of Taylor Swift flooded X, amassing over 47 million views before the platform could intervene. It took X approximately 17 hours to block searches for the terms, a delay that experts attribute directly to the lack of adequate staffing and automated detection systems. While not political in nature, the incident served as a stress test for the 2024 election, proving that the platforms lacked the capacity to halt the viral spread of high-harm AI content.

This incapacity extended to political advertising. An investigation by Global Witness in 2024 revealed that TikTok approved 90% of advertisements containing false and misleading election information, despite policies explicitly banning such content. The ads, which included wrong election dates and false claims about voting methods, were not caught by either human moderators or automated filters. Similarly, a report on the EU parliamentary elections found that Russian disinformation networks were able to “flood” X with little resistance, utilizing over 50,000 coordinated accounts to spread anti-democratic narratives.

Policy rollbacks accompanied the personnel cuts. A Free Press report documented that between November 2022 and November 2023, Meta, X, and YouTube collectively removed 17 critical policies designed to curb hate speech and misinformation. YouTube explicitly announced in June 2023 that it would no longer remove content advancing false claims about widespread fraud in the 2020 U.S. election, a reversal that signaled a broader industry shift away from active moderation. This policy vacuum, combined with the “liar’s dividend” (where bad actors can dismiss real evidence as AI-generated), created a fertile environment for the unchecked spread of election deepfakes.

The retreat was also clear in the handling of manipulated media that did not strictly fit the definition of “AI-generated.” Meta’s Oversight Board criticized the company’s “incoherent” policy after it refused to remove a cheap-fake video of President Biden that was edited to suggest inappropriate behavior. Because the video was not created using generative AI, it fell outside the narrow scope of Meta’s manipulated media policy, allowing it to remain online. This bureaucratic loophole, maintained by a shrunken policy team, exemplified the platforms’ inability to adapt to the evolving threat of 2024.

The Telegram Vector: Unmoderated Spread of Synthetic Media in Encrypted Channels

While mainstream platforms like Meta and Alphabet have implemented watermarking and adversarial screening teams, Telegram has emerged as the primary “staging ground” for the weaponization of synthetic media. Operating outside the jurisdiction of standard Western compliance frameworks, the platform’s encrypted channels function as a dark laboratory where state actors and decentralized troll farms test, refine, and amplify deepfake content before injecting it into the broader social media ecosystem.

Data from the 2024-2025 election cycle reveals a distinct operational pattern: disinformation campaigns no longer launch directly on open platforms. Instead, they use Telegram as a staging ground. Microsoft’s Threat Analysis Center identified this pattern during the 2024 U.S. election, noting that Russian influence operations used Telegram channels to seed AI-generated propaganda. Once these assets (fake whistleblowers, synthetic audio clips, or doctored news reports) gain traction among hyper-partisan communities in encrypted chats, they are manually reposted by unwitting users to X (formerly Twitter), TikTok, and Facebook, laundering the content’s origin.

The Synthetic Media Pipeline: From Encryption to Virality

The mechanics of this transfer are systematic. A piece of synthetic media is uploaded to a “burner” Telegram channel, frequently mixed with legitimate news to build credibility. From there, it is cross-posted to thousands of connected channels using automated bots. The following table illustrates the flow of synthetic assets observed during the 2024 election cycle.

| Stage | Action | Avg. Time to Execution | Primary Actor |
|---|---|---|---|
| Creation | Generation of synthetic audio/video (e.g., “Wolf News” anchors, fake Biden audio). | N/A | State-aligned actors (Russia, China) |
| Seeding | Upload to niche Telegram channels (5k-50k subscribers). | T-Minus 48 Hours | Channel Admins / Bot Farms |
| Incubation | Organic discussion and radicalization of the narrative. | 24-48 Hours | Hyper-partisan user base |
| Injection | Manual reposting to X, TikTok, and Instagram by “real” users. | Zero Hour | Unwitting Users |
| Amplification | Algorithmic pickup on mainstream platforms. | Post-Injection | Mainstream Algorithms |

This strategy circumvents the initial detection filters of major platforms. By the time a deepfake video of a fabricated news anchor reaches TikTok, it is uploaded by a legitimate user account with a history of authentic behavior, making algorithmic flagging significantly more difficult.

Industrial-Scale Synthetic Infrastructure

The infrastructure supporting these political operations is frequently dual-use, sharing resources with non-consensual pornography networks. In early 2024, investigations exposed a sprawling network of at least 150 Telegram channels dedicated to the creation and distribution of non-consensual deepfake imagery. These channels, with over 220,000 subscribers, utilized “nudify” bots that allowed users to strip clothing from images of women using generative AI.

This same infrastructure was repurposed for political harassment. Female politicians and journalists became primary targets. The sheer volume of synthetic content forced Telegram to acknowledge the emergency; the platform reported removing over 952,000 pieces of deepfake pornography in 2025 alone. Yet this enforcement was largely reactive. Security researchers noted that for every channel banned, multiple mirror channels appeared within hours, frequently retaining the same subscriber base through backup invite links.

State-Aligned Operations: The “Spamouflage” and “Doppelgänger” Connection

Beyond decentralized harassment, Telegram hosted sophisticated state-backed campaigns. Graphika, a social network analysis firm, tracked the “Spamouflage” network, a pro-Chinese influence operation that utilized AI-generated news anchors to disseminate anti-U.S. narratives. These synthetic avatars, created using commercial AI video tools, presented scripted propaganda as legitimate news reports. While these videos struggled to gain organic traction on YouTube due to strict labeling policies, they flourished in Telegram’s unmoderated environment.

Similarly, the Russian “Doppelgänger” campaign used Telegram to distribute links to cloned websites: fake versions of legitimate media outlets like The Washington Post or Fox News. These clones hosted AI-written articles and deepfake videos attacking U.S. support for Ukraine. The operation relied on Telegram’s “Instant View” feature and lax URL screening to present these fabrications as credible sources to users within the app.

“Telegram has become an incubator for problematic content… frequently featuring conspiratorial, hyper-partisan, and fringe narratives. The platform’s lenient moderation policies allow these assets to mature before they are deployed to the wider web.” (2024 Research Paper, University of Southern California)

The absence of consistent moderation on Telegram creates a distinct asymmetry in the information war. While Western democracies pressure public platforms to label or remove synthetic media, the Telegram vector remains wide open. The platform’s refusal to implement hash-matching for known deepfakes or to cooperate with external fact-checking bodies ensures that it remains the weapon of choice for actors seeking to destabilize democratic processes through synthetic means.

Watermarking Failures: Technical Limitations of C2PA and Labeling Standards

The global strategy to combat election deepfakes has largely relied on a “label and trace” approach, spearheaded by the Coalition for Content Provenance and Authenticity (C2PA). This industry standard, backed by Adobe, Microsoft, and initially embraced by major platforms, promised a digital chain of custody: a tamper-evident seal for media files. Yet technical realities have shattered these optimistic projections. By late 2024, security researchers and data scientists confirmed that C2PA and proprietary watermarking tools like Google’s SynthID are functionally obsolete against motivated political operatives.

The fundamental flaw of C2PA lies in its architecture: it relies on metadata, which is fragile. Like a luggage tag that can be ripped off, C2PA credentials do not survive basic file manipulations. A 2024 analysis by the University of Maryland demonstrated that “visual paraphrasing” (the act of regenerating an image to look identical while altering its pixel structure) successfully strips provenance data 100% of the time. Moreover, the simplest “attack” remains the most effective: taking a screenshot. When a user screenshots a verified image on a smartphone, the new file is generated without the original C2PA manifest, rendering the authentication useless.
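
The luggage-tag problem can be illustrated with a deliberately simplified model. The sketch below is not the real C2PA manifest format or any C2PA library API; `MediaFile`, `sign`, `screenshot`, and `verify` are hypothetical names, and the "manifest" is just a dictionary holding a SHA-256 hash. It only shows why provenance that travels beside the pixels disappears when the pixels alone are recaptured.

```python
import hashlib
from dataclasses import dataclass
from typing import Optional


@dataclass
class MediaFile:
    pixels: bytes                    # the visible content
    manifest: Optional[dict] = None  # toy provenance record stored beside the pixels


def sign(pixels: bytes, issuer: str) -> MediaFile:
    """Attach a toy manifest binding an issuer to a hash of the pixels."""
    digest = hashlib.sha256(pixels).hexdigest()
    return MediaFile(pixels, {"issuer": issuer, "sha256": digest})


def screenshot(original: MediaFile) -> MediaFile:
    """Model a screen capture: the pixels are copied, the manifest is not."""
    return MediaFile(pixels=bytes(original.pixels))


def verify(media: MediaFile) -> str:
    """Report whether the file carries a manifest that matches its pixels."""
    if media.manifest is None:
        return "no provenance"
    matches = hashlib.sha256(media.pixels).hexdigest() == media.manifest["sha256"]
    return "verified" if matches else "tampered"


signed = sign(b"\x10\x20\x30\x40", issuer="example-newsroom")
captured = screenshot(signed)

print(verify(signed))    # the original still carries its manifest
print(verify(captured))  # identical pixels, but nothing left to check
```

In this toy model the captured copy is pixel-for-pixel identical to the signed original, yet a verifier has nothing to evaluate, which is the failure mode the screenshot attack exploits.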

Proprietary watermarking solutions have fared no better. Google’s SynthID, designed to embed imperceptible signals into AI-generated audio and images, faces a catastrophic failure rate against adversarial attacks. Independent testing in December 2025 revealed that “re-noising” techniques (processing a watermarked image through a secondary diffusion model with low denoising strength) could scrub the watermark while preserving the visual content. Specialized tools available on GitHub achieve a 79% success rate in neutralizing SynthID markers, allowing bad actors to “clean” deepfakes before deploying them in election campaigns.

Platform Implementation and Labeling Gaps

The technical fragility is compounded by inconsistent enforcement across social media platforms. While companies pledged to label AI-generated content during the 2024 election cycle, their execution was porous. A post-election audit of X (formerly Twitter) found that 74% of misleading AI-generated posts analyzed were not correctly labeled. This failure was not technical but structural; the platform had rolled back significant content moderation resources prior to the election, creating a permissive environment for unmarked synthetic media.

The following table outlines the failure points of current authentication standards against common evasion techniques used in influence operations.

| Authentication Method | Evasion Technique | Success Rate of Evasion | Technical Vulnerability |
|---|---|---|---|
| C2PA Metadata | Screenshot / Screen Recording | 100% | Metadata is external to the visual/audio content and is lost during capture. |
| C2PA Metadata | Social Media Upload | 90%+ | Most platforms (e.g., Telegram, WhatsApp) strip metadata to reduce file size. |
| Google SynthID | Diffusion “Re-noising” | 79% | Pixel-level noise is overwritten by secondary generation passes. |
| Visible Watermarks | Inpainting / Cropping | 95% | AI inpainting tools can directly reconstruct the area under the watermark. |
| Platform Labels | Metadata Stripping | High | Labels frequently rely on the presence of metadata; if stripped, the label does not trigger. |

The “opt-in” nature of these standards creates a massive loophole for election interference. C2PA and watermarking are voluntarily adopted by responsible companies like OpenAI and Adobe. They are nonexistent in the open-source models frequently used by state-sponsored actors. Models such as Stable Diffusion or Flux, which can be run locally on consumer hardware, do not enforce watermarking. Consequently, a bad actor generating disinformation in a basement in St. Petersburg or Tehran is not bound by the constraints of Silicon Valley’s safety standards.

Even when watermarks survive, they frequently fail to trigger platform defenses. Meta’s labeling system, which relies on detecting specific signals in file metadata, proved ineffective when users uploaded content that had been processed through third-party editing software. In the high-stakes environment of the 2024 U.S. election, this created a false sense of security. Voters were told to look for labels that rarely appeared, leading to a dangerous assumption that unlabeled content was authentic. The reliance on these brittle technical standards has not only failed to stop deepfakes but has arguably made the information ecosystem more opaque by validating a system that works only for the compliant.

Viral Velocity vs Verification Latency: The Time Gap in Fact-Checking AI Content

The defining struggle of the modern information war is not between truth and lies, but between speed and latency. In the algorithmic ecosystem of 2024 and 2025, AI-generated disinformation achieves viral saturation in minutes, while verification processes operate on a timeline of hours or days. This temporal mismatch, known as the “verification latency gap,” allows synthetic media to inflict irreversible damage (crashing markets, smearing candidates, or inciting violence) long before a correction can be issued.

The incident on May 22, 2023, involving a fake image of an explosion at the Pentagon, serves as the definitive case study for this phenomenon. At 10:09 AM ET, a verified Twitter account masquerading as a Bloomberg news feed posted an AI-generated image of black smoke billowing near the U.S. Department of Defense complex. Within moments, the image was amplified by major accounts with millions of followers, including the Russian state-controlled network RT. The impact was immediate and financial: the S&P 500 dropped approximately 0.3 percent, wiping out roughly $500 billion in market capitalization in minutes. By the time the Arlington Fire Department issued a denial, the algorithmic damage was done. The truth arrived via a press release; the lie arrived via a push notification.

The Asymmetry of Effort

The core problem is an asymmetry of effort and speed. Generative AI tools can produce high-quality deceptive content in seconds at near-zero cost. Conversely, the forensic analysis required to disprove that content (analyzing shadows, checking metadata, and cross-referencing with on-the-ground sources) remains a labor-intensive human process. A 2025 report by the Poynter Institute found that while AI generation takes moments, professional fact-checking organizations frequently require hours or even days to issue a conclusive verdict on complex media.

The following table illustrates the disparity between the viral velocity of AI content and the sluggish pace of verification mechanisms, using verified incidents from the 2023-2024 period.

| Incident | Time to Viral Saturation | Peak Reach Before Takedown | Time to Verification/Action | Latency Gap |
|---|---|---|---|---|
| Pentagon Explosion Hoax (May 2023) | ~4 minutes | Global Market Impact ($500B swing) | ~20 minutes (Official Denial) | 16 minutes (critical window of financial damage) |
| Taylor Swift AI Deepfakes (Jan 2024) | ~2 hours | 47 Million Views (Single Post) | 17 Hours (Platform Removal) | 15 Hours (Unchecked viral spread) |
| Biden NH Robocall (Jan 2024) | Immediate (Direct Dial) | ~25,000 Residents | ~12 Hours (AG Investigation Start) | 12 Hours (Voter suppression active) |
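
The “Latency Gap” column is simply the time to verification or action minus the time to viral saturation. A minimal sketch of that subtraction, using the approximate figures from the table and treating “Immediate” as zero (an assumption made here for illustration), looks like this:

```python
from datetime import timedelta

# (time to viral saturation, time to verification/action), taken from the table.
# "Immediate" for the robocall is modeled as zero elapsed time (an assumption).
incidents = {
    "Pentagon Explosion Hoax": (timedelta(minutes=4), timedelta(minutes=20)),
    "Taylor Swift AI Deepfakes": (timedelta(hours=2), timedelta(hours=17)),
    "Biden NH Robocall": (timedelta(0), timedelta(hours=12)),
}


def latency_gap(saturation: timedelta, action: timedelta) -> timedelta:
    """Time during which content is already viral but not yet addressed."""
    return action - saturation


gaps = {name: latency_gap(*times) for name, times in incidents.items()}

for name, gap in gaps.items():
    print(f"{name}: {gap}")
```

The computed gaps (16 minutes, 15 hours, and 12 hours) match the table's final column.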

The Taylor Swift incident in January 2024 exemplifies the failure of platform moderation to keep pace with viral velocity. Explicit AI-generated images of the singer circulated on X (formerly Twitter) for 17 hours before being removed. During that window, a single post garnered 47 million views. By the time the platform blocked searches for the singer’s name, the images had already migrated to encrypted messaging apps and other forums. The correction mechanism, content removal, was functionally useless against the speed of distribution.

Algorithmic Accelerants and the Reach of Corrections

Social media algorithms are designed to prioritize engagement, and studies consistently show that sensational, outrageous, or fear-inducing content generates more engagement than factual reporting. A foundational MIT study established that falsehoods spread six times faster than the truth on Twitter, a metric that has only worsened with the advent of hyper-realistic AI. In 2025, a Yale University study on X’s “Community Notes” feature found that while labeling misleading posts reduced reposts by approximately 46 percent, the vast majority of engagement occurred before the note was attached. The label acts as a tombstone for the lie, not a shield against it.

“Traditional fact-checking takes hours or days. AI misinformation generation takes minutes. A journalist trained on 2023 detection methods might develop false confidence, declaring obvious AI content as authentic simply because it passes outdated tests.” (Reporter’s Guide to Detecting AI-Generated Content, 2025)

Moreover, the reach of a correction rarely matches the reach of the original falsehood. While a sensational deepfake may reach millions, the subsequent fact-check frequently struggles to break out of the “truth bubble,” reaching only thousands of users who likely already doubted the original claim. This creates a permanent “misinformation residue” in the public consciousness, where the lie is remembered and the correction is never seen.

The Liar’s Dividend

The proliferation of deepfakes has also birthed a secondary phenomenon known as the “Liar’s Dividend.” This concept describes how the mere existence of AI-generated content allows bad actors to dismiss genuine incriminating evidence as “fake.” As the public becomes sensitized to the reality of deepfakes, their baseline trust in all media erodes.

In 2024 and 2025, political strategists have increasingly observed candidates using “informational uncertainty” as a defense. When confronted with real audio recordings or video footage of gaffes or misconduct, the standard defense has shifted from “I didn’t say that” to “That could be AI.” This creates a double bind for fact-checkers: they must work twice as hard to prove that fake content is fake, and equally hard to prove that real content is real. The burden of proof has shifted entirely to the verifier, while the liar benefits from the fog of war.

Human detection is no longer a viable defense. A 2025 study revealed that human participants could identify high-quality deepfake video only 24.5 percent of the time, worse than a coin flip. With human intuition failing and automated detection tools lagging behind generation capabilities, the time gap between a viral lie and the established truth is not closing; it is widening.

Regulatory Patchwork: The Inconsistency of US State Laws on Election Deepfakes

The absence of a unified federal statute governing AI-generated election content has left individual states to construct their own defenses. This has resulted in a fragmented legal environment where a video might be a felony in Minnesota, a civil liability in California, and entirely unregulated in Ohio. As of July 2025, 47 states have enacted some form of deepfake legislation, yet the specific provisions regarding political speech vary wildly. This inconsistency creates a compliance nightmare for national campaigns and leaves voters in different jurisdictions with unequal protections against digital disenfranchisement.

State legislatures have primarily focused on three mechanisms: temporal bans, disclosure mandates, and criminal penalties. The “blackout periods” (windows of time before an election when deepfakes are strictly prohibited) illustrate the disparity. Texas, an early adopter with Senate Bill 751 (2019), enforces a 30-day prohibition window prior to an election. In contrast, California’s AB 730 (2019) extends this window to 60 days. Minnesota’s updated statutes push the boundary further, criminalizing the dissemination of deceptive media starting 90 days before a political party nominating convention or the beginning of absentee voting. A campaign operative could legally release a manipulated audio clip in Houston on October 1st that would land them in prison if released in Minneapolis.
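
The Houston-versus-Minneapolis example comes down to date arithmetic. The sketch below assumes a general election on November 5, 2024 and treats every window as a simple count of days before election day; both are simplifications introduced here. Minnesota's actual trigger is tied to nominating conventions and the start of absentee voting, so its 90-day entry is only a rough stand-in, and the function name `inside_window` is illustrative.

```python
from datetime import date

# Assumption for illustration: the November 5, 2024 general election.
ELECTION_DAY = date(2024, 11, 5)

# Window lengths as described in the text. Minnesota's statute is keyed to
# conventions and absentee voting; 90 days before election day is a simplification.
WINDOW_DAYS = {"Texas": 30, "California": 60, "Minnesota (simplified)": 90}


def inside_window(release: date, election: date, window_days: int) -> bool:
    """True if `release` falls within `window_days` before the election."""
    days_before = (election - release).days
    return 0 <= days_before <= window_days


release_date = date(2024, 10, 1)  # 35 days before the assumed election day
status = {
    state: inside_window(release_date, ELECTION_DAY, days)
    for state, days in WINDOW_DAYS.items()
}

for state, prohibited in status.items():
    print(f"{state}: {'inside' if prohibited else 'outside'} the prohibition window")
```

Under these assumptions an October 1 release sits 35 days before the election: outside the 30-day Texas window but inside the 60-day and 90-day windows.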

The severity of punishment also fluctuates across state lines. Minnesota stands out for its aggressive criminalization. Under House File 1370, enacted in 2023 and strengthened in 2024, violating the election deepfake ban is a crime punishable by up to five years in prison and a $10,000 fine if the intent is to injure a candidate or influence an election result. The law also mandates that convicted candidates forfeit their nomination or office. Texas classifies the offense as a Class A misdemeanor. Conversely, other states rely on civil remedies, allowing candidates to sue for damages but stopping short of incarceration. This uneven risk profile suggests that bad actors may simply launch their disinformation campaigns from jurisdictions with lenient or non-existent statutes.

California has attempted to enforce the strictest controls, but these efforts have collided with constitutional challenges. In 2024, the state passed AB 2839, which sought to broaden the definition of “materially deceptive content” and allow for immediate injunctive relief. Yet in October 2024, a federal judge blocked the law, citing First Amendment concerns. The court ruled that the statute was not narrowly tailored and could inadvertently suppress satire and parody. This legal defeat shows the difficulty of regulating synthetic media without infringing on protected speech, a balance that varies from circuit to circuit.

Disclosure requirements offer another layer of complexity. Michigan’s package of bills, signed in late 2023, focuses heavily on transparency. It requires any “materially deceptive media” distributed within 90 days of an election to carry a clear label stating it was “manipulated.” Failure to do so results in a misdemeanor for the first offense and a felony for subsequent violations within five years. Washington and Oregon have adopted similar “watermarking” approaches, prioritizing voter information over outright bans. These laws assume that if voters know content is synthetic, they will discount it, a hypothesis that remains untested.

The following table compares key provisions of election deepfake laws in select states, demonstrating the regulatory fragmentation as of late 2025.

| State | Statute/Bill | Prohibition Window | Primary Penalty Type | Disclosure Exception? |
|---|---|---|---|---|
| Texas | SB 751 | 30 Days | Criminal (Class A Misdemeanor) | No |
| California | AB 730 / AB 2839* | 60 Days / 120 Days | Civil / Injunctive Relief | Yes (if labeled) |
| Minnesota | HF 1370 | 90 Days** | Criminal (Felony, up to 5 years) | No |
| Michigan | HB 5141 | 90 Days | Misdemeanor / Felony | Yes (must be clear) |
| New Mexico | HB 182 | 30 Days (Primary) / 60 Days (General) | Criminal (Misdemeanor) | Yes |

Notes: * AB 2839 faced a federal injunction in Oct 2024. ** Includes period before nominating conventions.

This patchwork creates a “race to the bottom”. Political action committees and dark money groups can exploit the states with the weakest definitions of “deceptive media.” For instance, while Utah defines “synthetic media” with specific technical criteria, other states use broader, more subjective language like “intent to mislead.” A video that is considered a harmless parody in Florida might be prosecuted as election interference in Colorado. Without a federal standard, the integrity of a national election depends on the weakest link in this chain of state-level regulations.

The EU AI Act: Implementation Gaps Regarding Political Satire and Disinformation

The European Union’s Artificial Intelligence Act (EU AI Act), hailed as the world’s first comprehensive AI law, formally entered into force in August 2024. While European officials framed the legislation as a global standard for digital safety, a forensic examination of its implementation timeline reveals a critical vulnerability: the specific transparency rules designed to flag deepfakes will not be legally enforceable until August 2, 2026. This two-year lag creates a regulatory vacuum during a period of intense electoral activity across the continent, leaving voters exposed to sophisticated disinformation campaigns that the law is technically designed to prevent but temporally unable to stop.

The core of the EU’s defense against deepfakes lies in Article 50, which mandates that providers of AI systems must mark synthetic content in a machine-readable format (watermarking) and that deployers must disclose when content has been artificially generated or manipulated. Yet these obligations remain dormant during the critical 2024-2025 election cycle. In the interim, enforcement relies on a voluntary “Code of Practice” that lacks the bite of statutory penalties, allowing platforms and bad actors to operate with minimal oversight.

The “Satire” Loophole

Beyond the timeline deficit, the text of Article 50 contains a structural exemption that disinformation purveyors are already exploiting. The regulation relaxes its transparency obligations where the content forms part of an “evidently artistic, creative, satirical, fictional or analogous work or programme.” In such cases, the requirement is limited to a disclosure that does not “hamper the display or enjoyment of the work.”

This “satire defense” provides a legal shield for malicious actors. By framing political disinformation as “parody” or “creative expression,” creators can bypass strict labeling requirements. During the 2024 European Parliament elections, researchers observed multiple instances where deepfake audio recordings of candidates were circulated on social media. When challenged, distributors frequently claimed the clips were satirical, freezing moderation efforts as platforms hesitated to remove content that might be protected speech under the new definitions.

| Regulatory Provision | Intended Function | Implementation Status | Real-World Exploit |
|---|---|---|---|
| Article 50(2) | Mandatory watermarking by AI providers. | Enforceable Aug 2026. | Deepfake tools currently release unmarked content; detection tools fail to identify 2025 election fakes. |
| Article 50(4) | Labeling of deepfakes by deployers. | Enforceable Aug 2026. | Political operatives release unlabeled audio/video, claiming “satire” exemption if caught. |
| Article 5 (Prohibitions) | Ban on AI for subliminal manipulation. | Active Feb 2025. | High burden of proof for “subliminal” intent allows overt disinformation to slip through. |
| GPAI Rules | Systemic risk assessment for large models. | Active Aug 2025. | Open-source models released prior to Aug 2025 remain largely unmonitored. |

The Enforcement Void

The delay in full implementation means that for the 2024 and 2025 election cycles, the EU relies on the Digital Services Act (DSA) and voluntary commitments from tech giants to fill the gap. This approach has proven insufficient. The DSA focuses on illegal content and systemic risks but does not specifically criminalize unlabeled deepfakes unless they violate other laws (e.g., defamation). Consequently, a deepfake of a candidate admitting to a crime is frequently treated as a content moderation problem rather than a legal violation, subject to the slow and inconsistent internal review processes of social media platforms.

Data from the 2024 EU elections indicates that platform response times to reported deepfakes averaged 24 to 48 hours, an eternity in a viral news cycle. In Slovakia, a deepfake audio recording of a party leader discussing election rigging was released just 48 hours before the moratorium on campaigning. By the time fact-checkers debunked the audio and platforms began to downrank it, the clip had already been shared thousands of times, potentially shifting the final vote margin. Under the fully implemented AI Act, such content would theoretically require immediate labeling, but the current “transitional period” offers no such guarantee.

Technical Reality vs. Legislative Intent

The “machine-readable” marking requirement in Article 50 also faces technical hurdles that the legislation does not fully address. Current watermarking standards, such as C2PA (Coalition for Content Provenance and Authenticity), are easily stripped by simple video editing techniques like cropping, re-encoding, or screen recording. The AI Act demands that these markings be “robust,” yet no technology currently exists that can survive the “analog hole” (where a user records a screen with another device) without degrading the content quality.

Furthermore, the reliance on providers to implement these fixes ignores the proliferation of open-source AI models. While major companies like OpenAI and Google may comply with voluntary codes in 2025, bad actors increasingly use uncensored, open-source models hosted on decentralized networks. These tools lack any safety guardrails or watermarking features, rendering the AI Act’s provider-centric approach ineffective against the primary sources of election-related deepfakes.

The convergence of a delayed timeline, a broad satire exemption, and technical limitations creates a dangerous environment for European democracy. Until August 2026, the EU’s digital borders remain porous to AI-generated interference, with the AI Act serving more as a future pledge than a present shield.

Micro-Targeting 2.0: Integrating Generative AI into Persuasion Architectures

The era of static voter files and broad demographic buckets is over. In its place, political operatives have deployed “Micro-Targeting 2.0,” a sophisticated persuasion architecture that integrates large language models (LLMs) with real-time behavioral data. Unlike traditional micro-targeting, which relied on fixed attributes like age or zip code to serve pre-written ads, this new model uses generative AI to create unique, hyper-personalized content for individual voters. The system does not just deliver a message; it engages in an autonomous, adaptive loop of persuasion, measuring a target’s reaction and refining its output in milliseconds.

This shift represents a fundamental change in the mechanics of electioneering. In 2024 and 2025, researchers and developers unveiled systems capable of “autonomous social engineering.” One such project, CounterCloud, demonstrated the terrifying efficiency of this technology. For less than $400 per month, the fully autonomous system could scrape the web for opposing viewpoints, generate counter-narratives using LLMs, and post them across social platforms without human intervention. The system created fake journalist profiles, generated audio clips, and engaged in debates, operating 24/7 with a 90% success rate in creating “convincing” content. This is not a tool for efficiency; it is a weaponized persuasion machine.

The effectiveness of these architectures is backed by worrying metrics. A 2025 study by researchers at Cornell University and the Swiss Federal Institute of Technology found that AI-powered chatbots were significantly more persuasive than human-written static messages. In controlled experiments involving the 2024 U.S. presidential election, a pro-Harris AI model was able to shift the opinions of likely Trump voters by 3.9 percentage points, an effect size roughly four times larger than traditional TV advertising. Conversely, a pro-Trump AI model moved likely Harris voters by 1.5 percentage points. The study revealed that “information density” (the AI’s ability to rapidly deploy fact-sounding claims) accounted for 44% of its persuasive impact.

These systems operate on a framework frequently described in technical circles as the Model of Adaptive Persuasion (MAP). This architecture consists of three distinct components: an internal assessment of the voter’s motivation and ability to process information; a “route of thinking” that determines whether to use logical (central) or emotional (peripheral) appeals; and a strategy that selects specific tactics like “social proof” or “scarcity.” In 2025, the ElecTwit simulation framework further exposed how multi-agent systems could exploit these components, identifying over 25 specific persuasion techniques used by LLMs to manipulate voter sentiment in realistic social media environments.

| Feature | Traditional Micro-Targeting (2012-2020) | Micro-Targeting 2.0 (2024-Present) |
|---|---|---|
| Core Technology | Static Databases, A/B Testing, Human Copywriting | Generative LLMs, Autonomous Agents, Real-Time Sentiment Analysis |
| Content Generation | One-to-Many (Segments receive same ad) | One-to-One (Unique content for each voter) |
| Adaptability | Slow (Weeks to adjust campaigns) | Instant (Milliseconds to adjust tone/argument) |
| Cost | High (TV/Print ads: $200k+ budgets) | Low (AI token generation: <$0.01 per interaction) |
| Persuasion Lift | ~1 percentage point shift in voter intent | ~3.9 percentage point shift in voter intent (AI Chatbots) |

The commercialization of these tools has been rapid. Platforms like Votivate have integrated advanced modeling to allow campaigns to target voters with granular precision. Yet the real danger lies in the “democratization” of disinformation. Fine-tuning a standard open-source model on specific political narratives can boost its persuasiveness by up to 51%, according to 2025 data from the Oxford Internet Institute. This means that even under-resourced campaigns or foreign actors can deploy “bot swarms” (networks of AI agents that coordinate to flood a specific district with tailored misinformation) without the need for a massive staff or budget.

The chart illustrates the comparative “Persuasion Lift” of various campaign methods, highlighting the dominance of interactive AI over traditional media.

Chart Description: Comparative Persuasion Effectiveness (2024-2025 Data)

A bar chart compares the percentage point shift in voter preference across three mediums.

- TV Advertising: A small blue bar showing a 0.9 percentage point shift.

- Human Canvassing: A moderate green bar showing a 1.8 percentage point shift.

- AI Chatbot Interaction: A towering red bar showing a 3.9 percentage point shift.

The visual shows the force-multiplying effect of generative AI in political discourse.
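The relative size of the bars can be checked with a few lines of arithmetic. The sketch below is only an illustration: it takes the three percentage-point figures from the chart description and expresses each as a multiple of the TV advertising figure. The function name `relative_lift` and the choice of TV as the baseline are assumptions made for this example, not part of any cited study.

```python
# Percentage-point shifts as quoted in the chart description above.
lift_pp = {
    "TV advertising": 0.9,
    "Human canvassing": 1.8,
    "AI chatbot interaction": 3.9,
}


def relative_lift(shifts: dict, baseline: str) -> dict:
    """Express every shift as a multiple of the chosen baseline method."""
    base = shifts[baseline]
    if base <= 0:
        raise ValueError("baseline shift must be positive")
    return {name: round(value / base, 2) for name, value in shifts.items()}


# Hypothetical choice for illustration: use TV advertising as the baseline.
ratios = relative_lift(lift_pp, "TV advertising")
for name, ratio in ratios.items():
    print(f"{name}: {ratio:.2f}x the TV baseline")
```

On these figures the chatbot bar is a little over four times the TV bar, which is consistent with the “roughly four times” comparison quoted from the study.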

Regulatory frameworks have failed to keep pace with this technological leap. While the European Union’s AI Act includes transparency requirements for synthetic media, and the U.S. Federal Election Commission has debated rules on labeling, the decentralized nature of these architectures makes enforcement nearly impossible. A “manipulation machine” running on a private server in a non-extradition country can target voters in Pennsylvania or Michigan with impunity, bypassing platform safeguards by mimicking human behavior patterns so perfectly that detection algorithms are rendered useless.

Visual Saturation: The Normalization of AI Imagery in the Trump-Harris Cycle

The 2024 election cycle marked the moment synthetic media transitioned from a theoretical threat to a pervasive, ambient feature of American political discourse. Unlike the high-profile “deepfake” incidents feared in 2020, the 2024 race was defined by a relentless volume of low-grade, hyper-partisan AI imagery. This saturation did not deceive; it exhausted the electorate’s capacity for verification, creating a “liar’s dividend” where reality itself became a matter of partisan preference.

By late 2024, the sheer quantity of synthetic content on social platforms had reached measurable ubiquity. A May 2025 retrospective analysis of X (formerly Twitter) data from the election period revealed that approximately 12% of all political images shared on the platform were AI-generated. More telling was the concentration of this output: just 10% of users were responsible for 80% of these synthetic uploads, indicating a coordinated, industrial-scale deployment rather than organic grassroots creation.
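A concentration figure of this kind is typically a “top-decile share”: rank accounts by upload count and measure what fraction of the total the top 10% contribute. The sketch below shows that calculation under stated assumptions. The per-user counts are invented for illustration and are not drawn from the X analysis, and the function name `top_share` is hypothetical.

```python
def top_share(counts: list, top_fraction: float = 0.10) -> float:
    """Share of all uploads contributed by the most active `top_fraction` of users."""
    total = sum(counts)
    if not counts or total == 0:
        raise ValueError("need at least one user with a non-zero upload count")
    ranked = sorted(counts, reverse=True)
    # Always include at least one user, even for very small samples.
    k = max(1, round(len(ranked) * top_fraction))
    return sum(ranked[:k]) / total


# Made-up example: ten accounts, one of which produces most of the uploads.
hypothetical_uploads = [80, 5, 3, 3, 2, 2, 2, 1, 1, 1]
share = top_share(hypothetical_uploads)
print(f"Top 10% of users account for {share:.0%} of uploads")
```

A share far above the 10% that an even distribution would produce is the signal analysts read as coordinated rather than organic activity.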

This flood of imagery forced a shift in voter perception. A Tech Policy Press/YouGov poll conducted in September 2024 found that 58% of registered voters believed they had encountered AI-generated political content in their social feeds, a sharp increase from 47% just three months prior. The public was not just seeing these images; they were expecting them.

The Architecture of “Memetic” Disinformation

The normalization process was accelerated by the strategic pivot from deception to “memetic warfare.” Campaigns and surrogates began deploying AI imagery not always to fool voters in the traditional sense, but to signal tribal loyalty and emotional truth over factual accuracy. The Ron DeSantis campaign’s early use of AI-generated images showing Donald Trump hugging Dr. Anthony Fauci set the tactical precedent. While debunked by forensics experts, the images successfully anchored the narrative of a Trump-Fauci alliance in the minds of primary voters before the truth could catch up.

Donald Trump’s campaign later adopted and amplified this strategy. In August 2024, Trump posted a series of AI-generated images to Truth Social, including one depicting Taylor Swift as Uncle Sam with the caption “Taylor wants you to vote for Donald Trump.” Another set showed young women wearing “Swifties for Trump” t-shirts. When challenged, the defense was not that the images were real, but that they were “satire” or “internet culture,” blurring the lines between political endorsement and shitposting.

| Date | Content Description | Origin/Platform | Strategic Intent |
|---|---|---|---|
| June 2023 | Trump hugging/kissing Anthony Fauci | DeSantis Campaign / X | Undermine Trump’s COVID-19 record with base |
| March 2024 | Trump posing with groups of Black voters | Supporters / Facebook | Fabricate narrative of minority support |
| August 2024 | Kamala Harris addressing “Communist” rally | Elon Musk / X | Visualizing the “Comrade Kamala” pejorative |
| August 2024 | Taylor Swift / “Swifties for Trump” endorsement | Trump / Truth Social | Manufacture perception of cultural momentum |

The “Liar’s Dividend” and the Reverse Deepfake

The most corrosive effect of this visual saturation was the emergence of the “reverse deepfake,” the phenomenon in which real events are dismissed as AI fabrications. As synthetic media became common, political actors gained the ability to plausibly deny reality.

In August 2024, following a massive Kamala Harris rally at a Detroit aircraft hangar, Donald Trump publicly claimed the crowd size was falsified using AI. “There was nobody at the plane, and she ‘A.I.’d’ it,” he posted on Truth Social, despite thousands of witnesses and extensive media coverage confirming the crowd’s existence. In previous cycles, such a claim might have been dismissed as a fringe conspiracy. In 2024, amidst a sea of actual AI fakes, the accusation resonated with a base already conditioned to distrust their eyes.

This skepticism was quantifiable. Pew Research Center data from September 2024 showed that 57% of Americans were “extremely or very concerned” that AI would be used to distribute misleading information. Yet this concern did not lead to higher vigilance; it led to paralysis. When everything could be fake, the burden of proof for reality became too heavy for the average voter.

Platform Complicity and the Grok Factor

The infrastructure for this saturation was provided largely by the platforms themselves. X’s introduction of the Grok AI chatbot, available to premium subscribers, removed the technical barriers to creating photorealistic disinformation. Unlike competitors with strict guardrails, Grok allowed users to generate images of candidates in compromising or illegal scenarios with minimal friction.

In one documented instance, Grok was used to generate hyper-realistic images of Vice President Harris in a communist military uniform, which were then amplified by Elon Musk himself. The platform’s algorithm, which prioritizes high-engagement visual content, ensured these fabrications traveled faster than fact-checks. By the time corrections were issued (frequently via the platform’s own “Community Notes”), the images had already accrued millions of impressions, cementing the visual association in the voter’s subconscious.

Russian Influence Operations: The Evolution of Doppelganger and AI Content

The architecture of modern Russian information warfare has shifted from human-curated troll farms to automated, AI-driven systems capable of flooding the digital ecosystem with fabricated reality. At the center of this evolution is “Doppelganger,” a persistent influence operation identified in May 2022. Unlike previous campaigns that relied heavily on organic engagement, Doppelganger industrializes deception by cloning legitimate media outlets to launder pro-Kremlin narratives. Between 2022 and 2025, the operation evolved from simple website spoofing to a sophisticated, generative AI-powered apparatus targeting the United States, France, and Germany.

The operation is orchestrated by two Russian entities, the Social Design Agency (SDA) and Structura National Technologies, operating under the direct supervision of the Russian Presidential Administration. Intelligence released by the U.S. Department of Justice in September 2024 confirmed that Sergei Kiriyenko, the Deputy Chief of Staff to President Vladimir Putin, personally directed these efforts. The campaign’s primary tactic, known as “typosquatting,” involves registering domains that mimic trusted news sources, such as washingtonpost.pm instead of washingtonpost.com, or spiegel.ltd for the German outlet Der Spiegel. These clone sites host fabricated articles and videos that are then amplified by networks of tens of thousands of social media bots.
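The domain pattern described above, a trusted name paired with an unfamiliar top-level domain, is simple enough to flag automatically. The following is a minimal sketch of such a check, not a description of any tool used by investigators. The trusted list, the function names `split_domain` and `find_tld_swap`, and the naive label splitting (which ignores multi-part suffixes such as co.uk) are all assumptions made for illustration.

```python
from typing import Optional, Tuple

# Illustrative allow-list only; a real deployment would maintain a much larger one.
TRUSTED_DOMAINS = ("washingtonpost.com", "spiegel.de")


def split_domain(domain: str) -> Tuple[str, str]:
    """Return (name, tld) from the last two labels of a hostname.

    Naive on purpose: it does not understand multi-part suffixes like co.uk.
    """
    labels = domain.lower().strip(".").split(".")
    if len(labels) < 2:
        raise ValueError(f"not a fully qualified domain: {domain!r}")
    return labels[-2], labels[-1]


def find_tld_swap(candidate: str, trusted=TRUSTED_DOMAINS) -> Optional[str]:
    """Return the trusted domain that `candidate` imitates via a swapped TLD, if any."""
    cand_name, cand_tld = split_domain(candidate)
    for legit in trusted:
        legit_name, legit_tld = split_domain(legit)
        # Same brand name but a different top-level domain is the red flag.
        if cand_name == legit_name and cand_tld != legit_tld:
            return legit
    return None


for host in ("washingtonpost.pm", "spiegel.ltd", "washingtonpost.com"):
    match = find_tld_swap(host)
    print(host, "->", f"imitates {match}" if match else "no TLD swap detected")
```

This catches only the TLD-swap variant named in the text. Misspelled names or lookalike characters would need a separate similarity check.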

By late 2023, Doppelganger had integrated generative AI into its production of disinformation. This shift marked a serious departure from manual content creation. The operation began using Large Language Models (LLMs) to generate news articles, draft social media posts, and create comments that mimicked the vernacular of specific target demographics. A report from the DFRLab on February 26, 2025, revealed that the network had expanded into “Operation Undercut,” a parallel campaign utilizing AI-edited videos and images to undermine support for Ukraine and U.S. foreign policy. This phase saw the deployment of AI-generated narration on TikTok, where accounts posted hundreds of videos that garnered millions of views while using content masking techniques to evade platform detection.

| Metric | Verified Data | Source / Context |
|---|---|---|
| Seized Domains | 32 | U.S. Dept. of Justice seizure (Sept 2024) targeting U.S. election interference. |
| Bot Network Size | 50,000+ | German Foreign Office identified active accounts on X in a 6-week period (2024). |
| Content Volume | 31,000+ Articles | Published on the central “Reliable Recent News” (RRN) portal by Sept 2024. |
| Ad Spend | $105,000 | Early campaign spending on Meta ads to promote clone sites (2022-2023). |
| Targeted Outlets | 17+ Major Brands | Includes The Washington Post, Fox News, Bild, The Guardian, and Le Monde. |

The sophistication of Doppelganger lies in its “Operation Overload” (also known as Matryoshka), a sub-campaign designed to exhaust the resources of Western journalists and fact-checkers. Operatives flood media organizations with fake requests for verification, frequently including AI-generated audio or video evidence that requires time-consuming forensic analysis to debunk. In 2024, this tactic was deployed against French and American media outlets, aiming to distract investigative teams during critical election cycles. The operation also utilized a “burning” infrastructure model, where disposable domains were registered, used for a massive burst of traffic, and then abandoned within hours to stay ahead of blacklist filters.

The “Stars of David” incident in France demonstrates the network’s ability to link digital disinformation with physical reality. In November 2023, French authorities attributed the amplification of photos showing anti-Semitic graffiti in Paris to the Doppelganger network. A bot network of over 1,000 accounts on X (formerly Twitter) published nearly 2,600 posts in a coordinated effort to stoke social tension. This hybrid method, using digital assets to inflame physical events, represents a dangerous maturation of Russian influence tactics.