Negligence allegations and shareholder litigation following the July 2024 Falcon sensor update outage


Why it matters:

- A defective configuration update distributed by CrowdStrike Holdings, Inc. caused a catastrophic disruption to millions of Windows systems, highlighting the importance of software safety practices.

- The crash resulted from an internal failure in applying standard testing procedures to "Rapid Response Content," bypassing the usual rigorous testing applied to other parts of the Falcon platform.

The 'Rapid Response' Loophole: How Channel File 291 Bypassed Staged Rollouts

Technical Root Cause: The Logic Error in Channel File 291

The Architecture of Channel File 291

To understand the catastrophic failure of July 19, 2024, one must examine the specific method CrowdStrike uses to update its defenses. The component at the center of this disaster was Channel File 291. This file is not a driver binary or a traditional software executable. It is a configuration file. Specifically, it belongs to a category CrowdStrike calls “Rapid Response Content.” These files contain behavioral heuristics and rules that the Falcon sensor interprets in real time. The purpose of Channel File 291 was to control the execution of “Named Pipes,” a method in Windows used for communication between different processes. Cybercriminals frequently abuse Named Pipes to move laterally across a network or to establish command and control channels.

The Falcon sensor operates as a kernel-mode driver, known as `CSAgent.sys`. Because it runs in the kernel, or “Ring 0,” it has complete access to system memory and hardware. This position is necessary for security software to intercept malicious activity before it can damage the operating system. Yet this privilege comes with a severe cost. Any error in kernel mode does not crash the application; it crashes the entire operating system. Channel File 291 was designed to be read and executed by this kernel driver. The file contained “Template Instances,” which are essentially sets of instructions or data fields that tell the driver what to look for.

The specific update released on that Friday morning was intended to target newly observed malicious Named Pipes used by common command-and-control frameworks. The update introduced a new version of the configuration data. This data was structured according to a specific format called an IPC Template Type. The sensor code relies on these templates to know how to parse the data coming from the cloud. The stability of the entire system rested on the assumption that the data provided in the Channel File would perfectly match the structure expected by the sensor’s code. That assumption proved fatal.

The Parameter Count Mismatch

The technical root cause of the outage was a logic error involving a gap in input parameters. The IPC Template Type used for this specific update defined twenty-one distinct input fields. These fields represent different attributes or data points that the sensor needs to evaluate to decide if a Named Pipe is malicious. The definition file, which acts as a blueprint for the content, stated that twenty-one inputs were required.

The problem lay in the sensor code itself. The Content Interpreter, the part of the `CSAgent.sys` driver responsible for reading these files, was only programmed to provide twenty input fields. This created a fundamental mismatch. The blueprint said “expect twenty-one items,” but the sensor only delivered twenty. In C++, the language in which the sensor code is written, managing memory arrays is a manual and precise task. If a program attempts to read data from a memory location that has not been allocated or assigned to it, the consequences can be immediate and severe.

When the new Channel File 291 was downloaded to millions of machines, the Content Interpreter began its work. It loaded the Template Instance and started processing the rules. The logic within the update instructed the interpreter to check the twenty-first input field. The interpreter obeyed. It went to the memory address where the twenty-first value should have been. But because the sensor had only allocated space for twenty values, that address was invalid. It was “out of bounds.”
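The count mismatch can be modeled in a few lines. The following is a minimal Python sketch, not CrowdStrike's code: the constant and function names are hypothetical, and Python raises a recoverable `IndexError` where unchecked C++ in kernel mode would perform an invalid memory read.

```python
# Hypothetical model of the count mismatch; not taken from the Falcon sensor.
TEMPLATE_TYPE_FIELD_COUNT = 21   # fields declared by the IPC Template Type definition
SENSOR_SUPPLIED_INPUTS = 20      # values the sensor code actually provided


def read_field(inputs, field_number):
    """Return the value for a 1-based field number from the sensor's input array."""
    return inputs[field_number - 1]  # field 21 maps to index 20


# The sensor builds an array with only 20 slots (indices 0 through 19).
sensor_inputs = [f"value-{i}" for i in range(SENSOR_SUPPLIED_INPUTS)]

last_valid = read_field(sensor_inputs, 20)  # fine: this is index 19

try:
    read_field(sensor_inputs, TEMPLATE_TYPE_FIELD_COUNT)  # index 20: past the end
    outcome = "read succeeded"
except IndexError:
    # Python stops safely here; an unchecked kernel-mode read has no such guard.
    outcome = "out of bounds"
```

The only point of the sketch is the arithmetic: twenty slots end at index 19, so a request for the twenty-first field lands one position past the array.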

The Wildcard Factor

A disturbing question arises from this analysis: why did this not happen sooner? CrowdStrike had released updates to Channel File 291 before July 19. The feature itself was introduced in February 2024 with sensor version 7.11. Between March and April 2024, the company deployed three other Template Instances using this same IPC Template Type. Those updates did not crash systems. They functioned perfectly.

The difference on July 19 was the specific data values used in the update. The previous instances utilized “wildcard” matching for the twenty-first field. In the context of this system, a wildcard tells the interpreter to ignore the specific value of a field and accept anything. When the logic encounters a wildcard, it does not need to read the actual data from the input array. It simply marks the check as passed and moves on. Therefore, in the earlier updates, the Content Interpreter never actually attempted to access the memory address for the twenty-first input. The mismatch existed, but it was dormant.

The update on July 19 was different. It replaced the wildcard with a specific, non-wildcard matching criterion. It instructed the sensor to look for a concrete value in that twenty-first slot. This forced the Content Interpreter to perform the read operation. It reached out to the memory array, stepped past the twentieth item, and tried to read the twenty-first. That memory did not belong to the array. The CPU detected an illegal memory access. In a user-mode application, this would cause the program to close. In the kernel, the processor triggers a hardware exception. Windows catches this exception and, to protect data integrity, immediately halts the system. This is the “Blue Screen of Death” with the stop code `PAGE_FAULT_IN_NONPAGED_AREA` or a similar access violation.
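Why a wildcard keeps the defect dormant can be shown with a simplified model. This is a Python sketch under stated assumptions: the `WILDCARD` marker, the `evaluate` function, and the sample values are all hypothetical, and the real instances presumably carried concrete criteria in other fields as well.

```python
WILDCARD = "*"  # hypothetical marker meaning "accept any value"


def evaluate(criteria, inputs):
    """Check each criterion against its input; wildcards skip the read entirely."""
    reads = []  # indices actually read, recorded for illustration only
    for index, expected in enumerate(criteria):
        if expected == WILDCARD:
            continue  # no read happens, so an invalid index goes unnoticed
        reads.append(index)
        if inputs[index] != expected:  # raises IndexError if index is past the end
            return False, reads
    return True, reads


inputs = ["x"] * 20                      # the sensor supplies 20 values
earlier = [WILDCARD] * 21                # earlier style: field 21 is a wildcard
july = [WILDCARD] * 20 + ["pipe-name"]   # July 19 style: field 21 is concrete

earlier_result = evaluate(earlier, inputs)  # index 20 is never touched

try:
    evaluate(july, inputs)
    july_outcome = "ok"
except IndexError:
    july_outcome = "out-of-bounds read at index 20"
```

In this model the same 21-entry criteria list is harmless or fatal depending only on whether the last entry is a wildcard, which mirrors the account above.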

The Failure of the Content Validator

The existence of a bug in the driver code is serious, but software bugs are inevitable. The true negligence lies in how this buggy file escaped into the wild. CrowdStrike employs an automated testing system known as the Content Validator. This tool is responsible for checking every configuration file before it is pushed to the content delivery network. The Content Validator should have rejected the malformed Channel File 291. It did not.

The Content Validator contained a logic flaw mirroring the one in the sensor. The validator checked the new Template Instance against the Template Type definition. Since the definition said “twenty-one inputs are valid,” and the file contained twenty-one inputs, the validator marked the file as correct. The validator failed to cross-reference this against the actual capabilities of the sensor code. It validated the data against the *intent* of the design, not the *reality* of the deployment.
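The difference between validating against the definition and validating against the deployed sensor can be sketched as two checks. This is an illustrative Python model, not the Content Validator's actual logic; both function names and the `sensor_input_count` parameter are assumptions.

```python
def validate_against_definition(instance_fields, type_field_count):
    """The check as described: the instance must match the Template Type definition."""
    return len(instance_fields) == type_field_count


def validate_against_sensor(instance_fields, type_field_count, sensor_input_count):
    """Stricter sketch: also require that the sensor can supply every declared field."""
    if len(instance_fields) != type_field_count:
        return False
    # Cross-reference the definition with what the deployed code provides.
    return type_field_count <= sensor_input_count


instance = ["*"] * 20 + ["pipe-name"]  # 21 fields, the last one concrete

passes_definition = validate_against_definition(instance, 21)
passes_sensor = validate_against_sensor(instance, 21, sensor_input_count=20)
```

With a 21-field definition and a 20-input sensor, the first check accepts the instance and the second rejects it, which is the cross-reference the paragraph above says was missing.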

Also, the automated testing suites used by CrowdStrike were insufficient. The tests performed prior to release used the same wildcard logic that had masked the problem in earlier months. There was no specific test case designed to verify non-wildcard matching for that twenty-first field. The testing process assumed that if the file structure looked correct, the sensor would handle it. This assumption ignored the hard constraints of C++ memory management. A simple “fuzzing” test, which throws random and unexpected data at the driver to see if it crashes, would likely have triggered this fault immediately. The absence of such rigorous testing for a kernel-mode component suggests a dangerous gap in quality assurance.
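A test that places a concrete value in each field in turn is one way such a gap could be probed. The following Python sketch is a simplified stand-in for that idea, not a description of CrowdStrike's test suite; `interpret` and `find_crashing_fields` are hypothetical names, and `IndexError` stands in for the kernel fault.

```python
WILDCARD = "*"  # hypothetical marker meaning "accept any value"


def interpret(criteria, inputs):
    """Simplified interpreter: wildcards are skipped, concrete values are read."""
    for index, expected in enumerate(criteria):
        if expected == WILDCARD:
            continue
        if inputs[index] != expected:  # raises IndexError past the end of inputs
            return False
    return True


def find_crashing_fields(field_count, inputs):
    """Probe every field with a non-wildcard value and report the ones that fault."""
    crashing = []
    for field in range(field_count):
        criteria = [WILDCARD] * field_count
        criteria[field] = "probe"  # exactly one concrete criterion per run
        try:
            interpret(criteria, inputs)
        except IndexError:
            crashing.append(field + 1)  # report 1-based field numbers
    return crashing


crashing = find_crashing_fields(21, ["x"] * 20)
```

Under these assumptions the probe flags only field 21, the one field that wildcard-only tests never exercised.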

Widespread Fragility in Kernel Design

This incident exposes a deep fragility in the architecture of modern Endpoint Detection and Response (EDR) systems. To stay ahead of adversaries, companies like CrowdStrike built a method to push updates instantly, bypassing the slow and cautious certification process required for kernel drivers. They achieved this by creating a “driver that reads data” rather than updating the driver itself. Channel File 291 was technically just data. Microsoft does not require a WHQL (Windows Hardware Quality Labs) signature for every configuration file, only for the driver binary (the `.sys` file).

CrowdStrike used this loophole to deliver “Rapid Response” capabilities. By treating executable logic as data, they gained speed but bypassed the safety checks inherent in the driver update process. The sensor was built to act as an interpreter, essentially a virtual machine running inside the Windows kernel. This is an incredibly complex engineering feat. Complexity in the kernel is the enemy of stability.

The logic error in Channel File 291 was not a complex race condition or a subtle timing attack. It was a basic “off-by-one” error, a counting mistake. The fact that such a rudimentary error could bring down 8.5 million systems indicates that the safeguards surrounding this interpretation engine were wholly insufficient. The system had no “bounds checking” at runtime. A resilient driver would check if the index it is about to read is within the valid range of the array (e.g., “is 21 <= 20?”). If the check fails, the driver should log an error and discard the update. Instead, the CrowdStrike sensor blindly trusted the input, marched off the edge of its allocated memory, and took the world's digital infrastructure down with it.
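The runtime check described above is small. Here is a minimal Python sketch of the idea, assuming a hypothetical `evaluate_checked` function and logger name; it shows the shape of a bounds-checked interpreter loop and makes no claim about how the sensor was later fixed.

```python
import logging

logger = logging.getLogger("content_interpreter")  # hypothetical logger name

WILDCARD = "*"  # hypothetical marker meaning "accept any value"


def evaluate_checked(criteria, inputs):
    """Return 'match', 'no-match', or 'rejected' without ever reading out of range."""
    for index, expected in enumerate(criteria):
        if expected == WILDCARD:
            continue
        if index >= len(inputs):  # the runtime bounds check
            logger.error(
                "field %d exceeds the %d supplied inputs; discarding update",
                index + 1,
                len(inputs),
            )
            return "rejected"
        if inputs[index] != expected:
            return "no-match"
    return "match"


inputs = ["x"] * 20
july_style = [WILDCARD] * 20 + ["pipe-name"]
verdict = evaluate_checked(july_style, inputs)
```

In this model the July-style instance is logged and discarded instead of faulting, while wildcard-only instances still evaluate normally.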

This failure was not just a coding error; it was a failure of defensive programming. In kernel development, paranoia is a virtue. Developers must assume that every input is potentially malformed and that every memory access is a potential crash. The code in `CSAgent.sys` lacked this necessary paranoia. It assumed the validator would catch mistakes. The validator assumed the definition was correct. This chain of trust was broken at every link, resulting in a single point of failure that no amount of marketing about “AI-powered protection” could mitigate.

Input Mismatch: The 21-Input Update vs. the 20-Input Sensor Expectation

The Arithmetic of Failure: 21 Inputs vs. 20 Expectations

At the core of the July 19, 2024, global outage lay a rudimentary arithmetic error that no modern software supply chain should permit, let alone one operating at the Windows kernel level. The technical root cause was not a sophisticated cyberattack or a complex race condition, but a structural mismatch between the engine and its fuel. The Falcon sensor’s code, running on millions of endpoints, was hardwired to accept 20 input fields. The configuration update sent by CrowdStrike, Channel File 291, contained 21. This gap, known as the “Input Mismatch,” serves as the primary evidence in shareholder litigation and the Delta Air Lines lawsuit, where plaintiffs argue it demonstrates a breakdown in basic quality assurance.

The specific mechanism of failure involved the Inter-Process Communication (IPC) Template Type. This template defines the data structure the sensor uses to evaluate system behavior. In February 2024, CrowdStrike introduced a new IPC Template Type intended to detect attack techniques involving named pipes. The definition file for this template specified 21 input fields. Yet the actual sensor integration code, the C++ logic responsible for interpreting this data, was only provisioned to handle 20.

The Validator’s Blind Spot

The existence of a mismatch is a coding error; the deployment of that mismatch to 8.5 million devices is a process failure. The “Content Validator,” an internal tool designed to vet updates before they leave CrowdStrike’s servers, failed to flag the gap. Post-incident reviews revealed that the validator contained a logic flaw. While the template definition correctly listed 21 inputs, the validation logic did not enforce a strict schema check against the sensor’s binary capabilities.

Testing procedures further obscured the danger. Between March and April 2024, CrowdStrike deployed earlier versions of the IPC Template. These versions technically included the 21st field, but the detection logic used “wildcard matching” for that specific input. A wildcard match ignores the specific value of a field, allowing the test to pass without the code ever attempting to read the underlying memory address. The July 19 update was the first instance where the 21st input required a specific, non-wildcard string match. This shift from passive wildcard to active evaluation triggered the latent defect.

The Kernel Panic Mechanism

When the Falcon sensor received the update, the Content Interpreter, a component running with Ring 0 kernel privileges, attempted to execute the new logic. The instruction set dictated a comparison operation on the 21st input field. The C++ code, however, had only allocated a data array for 20 pointers.

1. **The Instruction:** The logic requested data from index 20 (the 21st slot, as arrays are zero-indexed).
2. **The Reality:** The array ended at index 19.
3. **The Violation:** The CPU attempted to read the memory address immediately following the allocated array.

In a user-mode application (Ring 3), such an “out-of-bounds read” results in the program crashing, a nuisance, not a catastrophe. The application closes, and the user restarts it. Because the Falcon sensor operates in kernel mode, the consequences are system-wide. The Windows operating system, detecting an illegal memory access by a kernel driver, executed a bug check to protect the integrity of the system. This bug check manifests as the Blue Screen of Death (BSOD) with the stop code `PAGE_FAULT_IN_NONPAGED_AREA`.

The timing of this crash exacerbated the damage. The failure occurred early in the boot process, frequently before the Falcon management agent could fully initialize and receive a rollback command. This created the “boot loop” phenomenon where affected machines would crash, restart, load the faulty content again, and crash immediately, rendering remote remediation impossible for thousands of IT administrators.

Legal and Negligence

The simplicity of the 21-versus-20 error has become a focal point for legal action. In *Delta Air Lines v. CrowdStrike*, the airline’s legal team characterizes this mismatch not as a mere accident but as gross negligence. The argument posits that a competent software vendor, particularly one charging a premium for protection, must employ fuzzing and boundary testing that would inevitably detect an out-of-bounds read. Shareholder class action suits echo this sentiment, alleging that CrowdStrike’s representations of its “AI-powered” and “validated” platform were materially misleading given that a manual static analysis or a basic schema validation script could have prevented the disaster. The mismatch exposes a gap between the company’s marketing of sophisticated, proprietary technology and the reality of its internal testing rigor, which, in this instance, failed to count to twenty-one.

| Component | Configuration | Status |
|---|---|---|
| IPC Template Definition | Defined 21 Input Fields | Updated correctly in definition files. |
| Sensor Integration Code | Supported 20 Input Fields | Hardcoded limit in C++ binary. |
| Content Validator | Passed the Update | Logic error missed schema conflict. |
| Testing Methodology | Wildcard Matching | Masked the read error during QA. |
| Execution Result | Out-of-Bounds Read | Kernel Panic (BSOD). |

Failure of the Content Validator: The Internal Tool Bug That Missed the Flaw

The Automated Gatekeeper’s Collapse

The catastrophe that struck on July 19, 2024, did not originate from a malicious external actor but from the silent failure of an internal quality assurance tool. At the center of CrowdStrike’s deployment pipeline stood the Content Validator, a proprietary software utility designed to act as the final checkpoint for Rapid Response Content updates. This tool bore the responsibility of verifying the syntax and integrity of configuration files before they reached millions of sensors worldwide. In the case of Channel File 291, the Content Validator failed to perform its primary function. It marked a malformed file as safe, permitting a lethal logic error to propagate to the global fleet.

CrowdStrike’s Preliminary Post Incident Review (PIR) identified this failure as the immediate technical trigger for the outage. The company admitted that a bug existed within the Content Validator itself. This software defect caused the validator to approve the problematic Template Instances contained in the update. The validator checked the file against the defined parameters of the IPC Template Type and determined, erroneously, that the content was valid. This “pass” signal allowed the update to bypass any further scrutiny, moving directly to the production environment. The reliance on this single, flawed tool removed the safety net for the entire customer base.

The Logic Error Behind the Green Light

The specific nature of the validator’s failure involves a gap between expected inputs and actual sensor capabilities. The IPC Template Type used to generate the update defined 21 input fields. The Content Validator reviewed the new Template Instances and confirmed they matched this 21-field definition. However, the Content Interpreter, the component residing on the actual endpoint sensors, was only programmed to accept 20 inputs. The validator lacked the logic to detect this mismatch. It verified that the update matched the template definition but failed to verify whether the sensor could handle that definition.

This logic error meant the validator was enforcing a rule set that was incompatible with the reality of the deployed sensors. When the validator scanned Channel File 291, it saw data that mathematically fit the template structure. It did not check for the “out-of-bounds” risk that arises when a 21st parameter is forced upon a system expecting only 20. This gap in the validation logic turned the tool into a rubber stamp. It provided a false sense of security, confirming the file’s internal consistency while ignoring its external compatibility. The result was a “valid” file that acted as a poison pill for the Windows operating system.

The Wildcard Blind Spot

A serious factor in the validator’s failure was the testing methodology used during the development of the IPC Template Type. CrowdStrike revealed that earlier testing of this template type involved “wildcard matching” for the 21st input field. In software testing, a wildcard allows the system to accept any value or no value without triggering specific processing logic. Because the tests used wildcards, the Content Interpreter on the sensor never attempted to read a specific data value from that 21st slot during the testing phase. The system remained stable because the dangerous memory read operation was never actually executed.

The Content Validator was not programmed to distinguish between a wildcard test case and a specific production value. When the July 19 update introduced a non-wildcard matching criterion, a specific value, into that 21st field, the sensor attempted to read it. This action triggered the out-of-bounds memory read that caused the crash. The validator, having seen the template pass previous tests (which were masked by wildcards), treated the new instance as safe. It failed to recognize that shifting from a wildcard to a concrete value fundamentally changed the risk profile of the update. This blind spot allowed the fatal code to pass through the gate simply because previous, less specific versions had not caused a crash.

Reliance on “Trust” Over Testing

The failure of the Content Validator was compounded by a procedural decision to trust historical data over fresh verification. CrowdStrike’s PIR stated that the deployment proceeded based on “trust in the checks performed in the Content Validator, and previous successful IPC Template Instance deployments.” Because instances deployed on March 5, April 8, and April 24 had succeeded, the system assumed the July 19 instance would also succeed. This assumption proved fatal. The previous instances had not utilized the 21st input field in a way that triggered the latent bug.

This reliance on “trust” forms the core of the negligence allegations leveled by shareholders and affected clients like Delta Air Lines. Legal complaints argue that assuming a new update is safe because old updates were safe violates basic principles of software engineering. The Content Validator was the sole barrier for Rapid Response Content, yet it was not subjected to a fresh round of rigorous testing for this specific deployment. The process treated the validator as an infallible oracle, ignoring the possibility that the tool itself might be defective or that the new data might interact differently with the sensor.

The Absence of Redundancy

The architecture of the validation process reveals a serious absence of redundancy. For standard sensor updates (code changes), CrowdStrike employs a staged rollout and extensive beta testing. For Rapid Response Content (configuration changes), the process relied almost exclusively on the Content Validator. Once the validator gave the green light, the system pushed the update to all online sensors immediately. There was no secondary check, no “canary” deployment to a small subset of machines, and no human review of the validator’s output.
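The missing “canary” step can be sketched as a ring-based rollout that stops as soon as a ring reports a failure. This is a generic Python illustration, not CrowdStrike's deployment system; the `staged_rollout` function, its callbacks, and the 1% / 10% / 100% ring sizes are all assumptions chosen for the example.

```python
def staged_rollout(hosts, deploy, is_healthy, stages=(1, 10, 100)):
    """Deploy in growing rings (percent of fleet); halt if a ring is unhealthy."""
    done = 0
    for percent in stages:
        target = max(1, len(hosts) * percent // 100)  # cumulative hosts to reach
        ring = hosts[done:target]
        for host in ring:
            deploy(host)
        if not all(is_healthy(host) for host in ring):
            return {"halted": True, "deployed": target}
        done = target
    return {"halted": False, "deployed": done}


fleet = list(range(1000))   # stand-in host identifiers
touched = []                # records which hosts received the update

# A faulty update: every host that receives it reports unhealthy.
bad_result = staged_rollout(fleet, touched.append, lambda host: False)
```

In this model a faulty update reaches 10 of 1,000 hosts before the rollout halts, whereas an immediate global push would reach all of them.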

This single point of failure meant that a bug in the testing tool became a bug in the production environment. In high-stakes engineering, it is standard practice to “test the testers,” that is, to verify that validation tools are working correctly. The July 19 incident shows that the Content Validator had not been adequately tested against the scenario of an input mismatch. The tool was running on a logic flaw that remained dormant until the specific conditions of Channel File 291 exposed it. The absence of a secondary validation meant there was no safety mechanism to catch the validator’s mistake.

Litigation and the “Cutting Corners” Argument

The malfunction of the Content Validator has become a focal point in the legal battles following the outage. In the class action lawsuit Plymouth County Retirement Association v. CrowdStrike, plaintiffs allege that the company’s failure to maintain a functioning validation system constitutes gross negligence. The complaint cites the PIR’s admission of a “bug in the Content Validator” as evidence that CrowdStrike prioritized speed over safety. The argument posits that a cybersecurity firm charging a premium for protection has a duty to ensure its own internal safety tools are free from serious defects.

Delta Air Lines has been particularly vocal, with its legal representatives stating that CrowdStrike “cut corners” and “circumvented” standard testing. By relying on a buggy automated tool instead of a diversified testing strategy, CrowdStrike exposed its customers to avoidable risk. The failure of the validator is not viewed by plaintiffs as an honest mistake but as a widespread failure of governance. They argue that the company should have known the validator was insufficient for the task of protecting the Windows kernel from malformed data.

Post-Incident Changes to Validation

In the wake of the outage, CrowdStrike has announced significant changes to the Content Validator. The company stated it is adding “additional validation checks” to the tool to specifically guard against input mismatches. They are also moving away from the single-point-of-failure model. Future Rapid Response Content will undergo a staged rollout, similar to sensor updates, rather than an immediate global push. This shift acknowledges that the Content Validator, regardless of how many bugs are fixed, should never be the sole arbiter of safety for a global fleet.

The company also committed to “local developer testing” and “content update and rollback testing” as new steps in the process. These measures are designed to catch logic errors that the automated validator might miss. Yet for the millions of users who faced the Blue Screen of Death on July 19, these improvements come too late. The incident remains a clear lesson in the dangers of automation bias, the tendency to trust a software tool simply because it is software, without verifying that it is actually doing its job. The Content Validator was meant to be the shield; instead, it was the open door.

Kernel-Level Vulnerability: Architectural Risks of Ring 0 Access

The Ring 0 Trap: Absolute Power, Absolute Failure

The catastrophic failure of July 19, 2024, was not merely a coding error; it was the inevitable result of a high-risk architectural gamble known as kernel-mode execution. To understand the magnitude of the negligence allegations leveled against CrowdStrike, one must examine the specific environment in which the Falcon sensor operates: Ring 0. In the Windows operating system architecture, Ring 0 is the most privileged level of execution, reserved for the kernel and hardware drivers. Code running here has unrestricted access to system memory and hardware. It operates without the safety nets provided to standard user applications running in Ring 3. When a user-mode application crashes, the program closes, and the user returns to the desktop. When a kernel-mode driver crashes, the entire operating system halts immediately to prevent data corruption, resulting in the “Blue Screen of Death” (BSOD).

CrowdStrike’s Falcon sensor, specifically the `csagent.sys` driver, resides in this unforgiving territory. The decision to operate at Ring 0 provides the sensor with deep visibility into system processes, allowing it to detect rootkits and sophisticated malware that might hide from user-mode antivirus tools. Yet this privilege comes with a non-negotiable requirement for perfection. A single memory violation in Ring 0 triggers a system-wide panic. On July 19, the logic error in Channel File 291 caused the driver to attempt a read operation from an invalid memory address. The Windows kernel, detecting an illegal access attempt in a non-paged area, executed a bug check with the stop code `PAGE_FAULT_IN_NONPAGED_AREA`. This mechanism is designed to protect the integrity of the system; in this instance, it rendered 8.5 million devices inoperable.

The architectural negligence argument centers on the mismatch between the environment’s fragility and the update mechanism’s aggression. Kernel drivers are subject to months of rigorous testing and certification by Microsoft’s Windows Hardware Quality Labs (WHQL) before deployment. CrowdStrike, however, bypassed this slow stabilization process for its “Rapid Response” updates. By designing the `csagent.sys` driver to read definition files (Channel Files) at boot time, CrowdStrike created a loophole that allowed them to inject unverified logic into the kernel without Microsoft’s direct oversight. The driver itself remained unchanged and signed, but the content it processed was volatile and flawed. This architecture treated the Windows kernel, a space requiring surgical precision, with the casual fluidity of a web browser updating a cached page.

The 2009 EU Accord and Microsoft’s Hands-Off Policy

Following the outage, scrutiny turned toward Microsoft’s role in permitting third-party vendors such as CrowdStrike to operate with such dangerous privileges. Microsoft officials, including Vice President of Enterprise and OS Security David Weston, pointed to a 2009 agreement with the European Commission as the primary reason they could not simply lock vendors out of the kernel. The 2009 interoperability undertaking was a legal settlement designed to assuage antitrust concerns. It mandated that Microsoft provide third-party security vendors with the same level of access to Windows APIs that Microsoft’s own security products enjoyed. This legal framework forced Microsoft to keep the kernel gates open, preventing them from enforcing a safer, user-mode-only ecosystem similar to Apple’s macOS.

Microsoft’s defense highlights a regulatory paradox: laws intended to promote competition inadvertently introduced instability. By ensuring that competitors like CrowdStrike could access the kernel to build detection tools, regulators accepted a trade-off where the stability of the Windows ecosystem relied on the internal quality assurance of independent vendors. Microsoft signs the binary drivers to verify their identity, but it does not, and legally cannot, inspect the proprietary content files that those drivers load. As Weston noted in the aftermath, Channel File 291 was a binary blob meaningful only to CrowdStrike’s interpreter. Microsoft’s signing process validated the container (the driver), not the poison inside it (the update).

This separation of responsibility created a “grey zone” of liability. CrowdStrike exploited the kernel access guaranteed by the EU ruling but failed to uphold the stability standards associated with that privilege. Critics argue that while the EU mandated access, it did not mandate recklessness. The ability to run in the kernel does not compel a vendor to push untested updates to it at 4:09 AM on a Friday. The negligence lies not in the access itself, but in the abuse of that access for speed over safety.

Updates in a Static Environment

The core of the architectural risk is the collision between “Rapid Response” methodology and kernel-mode rigidity. Modern software development prizes agility, continuous integration, and instant updates. Kernel development prizes stasis, verification, and caution. CrowdStrike attempted to force the former into the latter. The Falcon sensor’s architecture was built to ingest new threat definitions instantly, a necessity in a security environment where new malware appears hourly. Yet implementing this capability inside the kernel created a structural hazard.

In a safer architecture, the kernel driver would act as a dumb data collector, passing telemetry to a user-mode service where the complex logic and pattern matching would occur. If the user-mode service crashed due to a bad update, the machine would stay online, and the service could restart. CrowdStrike, however, performed the pattern matching (the logic that failed) directly in the kernel to minimize latency and prevent malware from killing the user-mode service. This design choice prioritized the sensor’s survival over the host system’s survival. When the sensor failed, it took the host down with it.

Security experts have long advocated for the adoption of eBPF (Extended Berkeley Packet Filter) on Windows, a technology used in Linux that allows sandboxed programs to run in the kernel without the risk of crashing the entire system. While Microsoft has been working on eBPF support, it was not sufficiently mature or mandated at the time of the incident. CrowdStrike’s reliance on a legacy filter driver model, combined with a cloud-native update cadence, created a “glass cannon”: powerful against threats but shattering under the slightest internal defect. The refusal to decouple the volatile content updates from the kernel driver constitutes the primary technical argument for gross negligence.

Litigation Status: The Distinction Between Fraud and Negligence

As of early 2026, the legal fallout from the outage has bifurcated into two distinct tracks: securities fraud litigation and commercial negligence claims. In January 2026, a significant development occurred when U.S. District Judge Robert Pitman in Austin, Texas, dismissed the shareholder class action lawsuit against CrowdStrike. The investors had alleged that CrowdStrike defrauded them by making materially false statements about the quality of their software testing prior to the crash. Judge Pitman ruled that the plaintiffs failed to prove “scienter,” the intent to deceive. The court found that while CrowdStrike’s testing procedures were demonstrably insufficient, incompetence does not equate to securities fraud. The company did not lie about having testing; they simply had testing that failed to catch a specific, catastrophic bug.

This dismissal, however, does not absolve CrowdStrike of liability in the commercial sector. The lawsuit filed by Delta Air Lines in Georgia continues to proceed, focusing on “gross negligence” and “breach of contract” rather than fraud. Delta’s legal team argues that CrowdStrike owed a duty of care to its customers to ensure that updates pushed to the kernel were safe. The distinction is important: the shareholder suit failed because it required proof of a lie; the Delta suit requires only proof of reckless disregard for safety. The “Rapid Response” method, which bypassed staged rollouts for kernel-level changes, serves as Exhibit A in the argument for gross negligence. Delta contends that pushing an update to 8.5 million kernels simultaneously, without a canary test group, deviates so far from standard industry practice that it constitutes a breach of the duty of care.

The outcome of the Delta litigation will likely hinge on the interpretation of the Service Level Agreements (SLAs) and the limitation of liability clauses. Most software contracts cap liability at the cost of the subscription. However, if Delta can prove gross negligence, meaning that CrowdStrike knowingly ignored the risks of its kernel architecture, those liability caps may be pierced. The architectural decisions discussed here (operating in Ring 0, bypassing WHQL for content updates, and failing to implement staged deployment) form the factual bedrock of these ongoing legal battles.

Architectural Comparison: Kernel vs. User Mode Risks

| Feature | Kernel Mode (Ring 0) | User Mode (Ring 3) |
|---|---|---|
| Access Level | Unrestricted access to hardware and memory. | Restricted, virtualized memory access. |
| Failure Consequence | Total System Crash (BSOD). | Application Crash (Recoverable). |
| CrowdStrike Usage | Used for csagent.sys driver and threat detection. | Used for management UI and cloud communication. |
| Update Risk | High. Flaws cause OS instability. | Low. Flaws cause service interruption. |
| Microsoft Oversight | Drivers signed by WHQL; content updates unclear. | Standard application signing; less restrictive. |

The industry is watching to see if the financial penalties from the Delta suit force a shift in this architecture. Microsoft has already signaled an intent to restrict kernel access further, potentially rendering the “CrowdStrike model” obsolete in future versions of Windows. Until then, the architectural risk remains a dormant liability for every enterprise relying on kernel-level endpoint detection.

Plymouth County Retirement Association v. CrowdStrike: The Lead Shareholder Plaintiff

| Date | Event | Significance |
|---|---|---|
| July 19, 2024 | Global Outage | Falcon sensor update crashes 8.5M devices; stock drops 11%. |
| July 30, 2024 | Complaint Filed | Plymouth County files class action in W.D. Texas. |
| Oct 30, 2024 | Lead Plaintiff Appointed | NY State Comptroller takes over lead plaintiff role due to larger losses. |
| Jan 14, 2026 | Case Dismissed | Judge Pitman rules plaintiffs failed to prove intent to defraud. |

The 'Validated, Tested, and Certified' Allegation: Scrutinizing Marketing Claims

The Scienter Ruling: Judicial Dismissal of Shareholder Fraud Claims (Jan 2026)

Delta Air Lines v. CrowdStrike: Investigating the $500 Million Damages Claim


The legal collision between Delta Air Lines and CrowdStrike represents the single largest financial aftershock of the July 2024 outage. While other carriers recovered within forty-eight hours, Delta’s operations collapsed for five days. This extended paralysis resulted in 7,000 cancelled flights and stranded 1.3 million passengers. Delta CEO Ed Bastian quantified the financial damage at over $500 million. This figure includes $380 million in direct lost revenue and $170 million in recovery costs.

The airline filed suit in October 2024, alleging gross negligence and computer trespass. This litigation tests whether a software vendor can be held liable for damages that vastly exceed its contractual liability caps. CrowdStrike’s defense relies on a standard limitation of liability clause. Its contract with Delta caps damages at “single-digit millions.” This amount is likely under $10 million and covers only a fraction of Delta’s claimed losses. To bypass this cap, Delta’s legal team, led by David Boies, must prove “gross negligence” or “willful misconduct.” These legal standards invalidate liability waivers in many jurisdictions. Delta alleges that CrowdStrike “forced” the faulty Channel File 291 update onto its systems without permission, and characterizes this action as a trespass. The airline contends that CrowdStrike bypassed its own testing and Microsoft’s certification process. Delta claims this recklessness removes the protection of the liability cap.

The “computer trespass” allegation introduces a novel legal argument for software updates. Delta asserts that it did not authorize the specific transmission of the faulty file and argues that the update acted like malware rather than a legitimate patch. This framing attempts to shift the dispute from a contract problem to a tort claim. Tort claims frequently allow for punitive damages. A Georgia Superior Court judge allowed this claim to proceed in May 2025. The ruling rejected CrowdStrike’s motion to dismiss the negligence and trespass counts. This decision exposed CrowdStrike to a potential jury trial where the full $500 million claim remains in play.

CrowdStrike counters that Delta’s own incompetence caused the prolonged downtime. The cybersecurity firm points to the rapid recovery of American Airlines and United Airlines. Both carriers use the same Falcon sensor yet resumed normal operations days before Delta. CrowdStrike alleges that Delta refused its offers of on-site assistance. It claims Delta’s “antiquated IT infrastructure” could not handle the manual reboot process required to fix the boot loop. Specifically, Delta’s crew-tracking system failed to synchronize. This failure left pilots and flight attendants out of position even after the servers came back online. CrowdStrike argues it cannot be liable for Delta’s lack of disaster recovery resilience.

Public relations missteps exacerbated the legal tension. Reports circulated that CrowdStrike sent $10 Uber Eats vouchers to partners as an apology. While not a direct settlement offer to Delta, the gesture was widely mocked. It symbolized the disconnect between the scale of the damage and the vendor’s response. Delta cites this “insufficient” apology as evidence of CrowdStrike’s failure to grasp the severity of the emergency. The voucher incident became a focal point in the court of public opinion. It reinforced Delta’s narrative of a vendor that prioritized speed and profit over customer safety and accountability.

The litigation reveals the fragility of the software supply chain. Delta’s dependency on the Falcon sensor gave CrowdStrike root-level access to critical systems. The update bypassed Delta’s internal change control boards and landed directly on production servers. Delta argues this architecture makes the vendor a single point of failure. CrowdStrike maintains that real-time security requires immediate updates. It argues that waiting for customer approval would leave systems exposed to zero-day attacks. This conflict between operational stability and rapid security response sits at the heart of the lawsuit.

Discovery in the case has unearthed internal communications regarding the “Rapid Response” process. Delta’s lawyers focus on the decision to push Channel File 291 to all sensors simultaneously. They argue this violated industry standards for staged rollouts. Evidence suggests CrowdStrike’s internal validator tool contained a bug that approved the malformed file. Delta claims this proves the negligence was widespread rather than a one-off human error. If a jury agrees, CrowdStrike could face damages that set a new precedent for software liability. The outcome will determine whether vendors can hide behind liability caps when their errors cause catastrophic economic loss.

The case continues to move through the Georgia courts as of early 2026. Both sides are entrenched. Delta refuses to accept the liability cap. CrowdStrike refuses to set a precedent of paying consequential damages. The industry watches closely. A victory for Delta would force software vendors to rewrite their contracts and insurance policies. It would signal the end of the “use at your own risk” era for enterprise software. Until a verdict or settlement is reached, the $500 million claim hangs over CrowdStrike as a warning of the true cost of negligence.

The 'Computer Trespass' Argument: Delta's Claim of Unauthorized Updates

The Legal Theory: Updates as Unauthorized Access

At the core of Delta’s argument was the assertion that CrowdStrike exceeded its authorized access privileges. Under the **Georgia Computer Systems Protection Act (O.C.G.A. § 16-9-93(b))**, computer trespass occurs when an individual or entity uses a computer or network without authority and with the intention of deleting or altering data or obstructing its use. Delta’s complaint, spearheaded by attorney David Boies, argued that the airline had specifically configured its systems to reject automatic updates for critical infrastructure, employing a “staged rollout” policy to prevent exactly the type of catastrophic failure that occurred on July 19.

Delta contended that CrowdStrike’s “Rapid Response” method, the channel through which the faulty configuration file was delivered, bypassed these user-defined controls. By pushing code to the kernel level of Delta’s servers without adhering to the airline’s established update preferences, Delta argued that CrowdStrike acted “without authority.” The complaint stated: *“CrowdStrike created a backdoor to bypass Delta’s controls… This unauthorized access was knowingly committed to prioritize CrowdStrike’s speed over Delta’s safety.”*

This framing was critical for Delta. Standard vendor contracts, including the 2022 Subscription Services Agreement (SSA) signed between the two parties, include **Limitation of Liability** clauses that cap damages at a fraction of the total contract value, frequently in the single-digit millions. By alleging a statutory tort like computer trespass, Delta sought to pierce these contractual shields. If the court accepted that CrowdStrike’s actions constituted an independent statutory violation rather than just a contractual failure, the liability caps might not apply, potentially opening the door to the full **$500 million** in damages Delta claimed, plus punitive damages.

Judicial Validation: The May 2025 Ruling

CrowdStrike’s legal team, led by Quinn Emanuel Urquhart & Sullivan, moved swiftly to dismiss the trespass claim, characterizing it as a creative but meritless attempt to “tortify” a standard commercial contract dispute. They argued that the “Economic Loss Rule” generally prevents parties from suing in tort for purely financial losses arising from a contract. CrowdStrike asserted that the SSA granted them broad permission to update the software to maintain security, describing the relationship as ” ” and the updates as a fundamental part of the service Delta paid for.

However, in a significant ruling on **May 16, 2025**, Judge Kelly Lee Ellerbe of the Fulton County Superior Court rejected CrowdStrike’s motion to dismiss the trespass count. Judge Ellerbe’s order validated the plausibility of Delta’s theory at the pleading stage. The court noted that if Delta had indeed affirmatively elected *not* to receive automatic updates, as it alleged, then any update delivered in contravention of that election could legally be considered “unauthorized.” Judge Ellerbe wrote: *“With each new ‘content update,’ Delta would receive unverified and unauthorized programming and data running in the kernel level of its Microsoft OS-enabled computers… Delta has sufficiently alleged CrowdStrike’s unauthorized access was knowingly committed.”*

The ruling clarified that statutory duties, such as those imposed by the Computer Trespass law, exist independently of the contract. This decision sent shockwaves through the SaaS (Software as a Service) industry. It suggested that vendors could potentially be held liable for “hacking” their own customers if they pushed updates that violated specific customer configurations or consent, even if a broad service agreement was in place.

The “Rapid Response” method as the Trespass Vector

The technical underpinning of the trespass claim focused heavily on the distinction between “Sensor Updates” and “Content Updates.” Delta’s IT administrators had control over *Sensor Updates* (the actual executable code of the Falcon agent), which they could delay and test. However, **Channel File 291** was a *Content Update* (configuration data), which CrowdStrike’s architecture was designed to stream immediately to all online sensors, bypassing the sensor update policies. Delta argued that this architectural distinction was unclear and deceptive. The airline claimed it was led to believe its “N-1” or “N-2” version policies (running one or two versions behind the latest release) protected it from *all* immediate changes. By labeling the kernel-crashing logic as “content” rather than “code,” CrowdStrike created a vector for modification that the customer could not block. In the context of the trespass statute, Delta argued this was akin to a landlord entering a tenant’s apartment without notice by using a master key that the tenant was told didn’t exist.

CrowdStrike’s Defense and Industry

CrowdStrike vehemently denied the trespass characterization. In legal filings and public statements, the company argued that characterizing a security update as a “trespass” was absurd and dangerous. It contended that the very nature of endpoint protection requires real-time updates to defend against rapidly evolving threats. If vendors were paralyzed by the fear of “trespass” lawsuits for every background update, the global cybersecurity posture would crumble. “Delta is attempting to criminalize the standard industry practice of keeping security software up to date,” a CrowdStrike spokesperson stated following the May 2025 ruling. “They authorized us to protect their systems. We cannot protect what we cannot update.”

Despite these protests, the survival of the trespass claim meant the case would proceed to discovery, a phase where Delta could demand internal CrowdStrike communications regarding the design of the Rapid Response system. Delta sought to prove that CrowdStrike engineers *knew* these updates bypassed customer controls and proceeded anyway to maintain their “fastest in the industry” marketing claims.

Strategic Leverage

By February 2026, the “Computer Trespass” claim had become the fulcrum of the litigation. While the fraud claims had been largely dismissed, the trespass claim provided Delta with significant leverage. It created a route to circumvent the liability cap and introduced the threat of a jury trial where non-technical jurors might view the “unauthorized” alteration of Delta’s systems, which resulted in thousands of cancelled flights, as a violation of digital property rights. For the broader technology sector, the *Delta v. CrowdStrike* trespass argument introduced a new risk profile for SaaS vendors. It highlighted the legal danger of “shadow updates,” or methods that bypass customer change-management controls. If a vendor pushes code that a customer cannot stop, and that code causes damage, the vendor might no longer be shielded by the “service” aspect of its contract; it might be treated as an intruder.

Gross Negligence vs. Contractual Liability Caps: The Battle Over 'Single-Digit Millions'

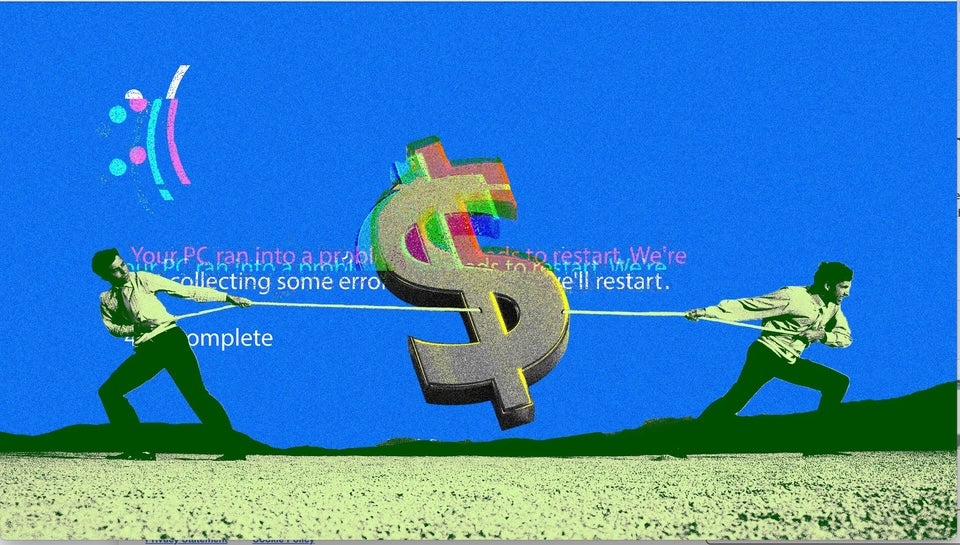

The $500 Million Chasm

The legal war between Delta Air Lines and CrowdStrike centers on a single piece of high-stakes arithmetic: Delta’s claim of over $500 million in operational losses versus CrowdStrike’s assertion that its liability is contractually capped at “single-digit millions.” This financial chasm represents the most significant test of software liability limitation clauses in the modern cybersecurity era. For CrowdStrike, the defense relies entirely on the standard Limitation of Liability (LoL) provisions in its Master Subscription Agreement (MSA), which cap damages at a multiple of fees paid, in this case allegedly two times the annual subscription value.

The ‘Single-Digit’ Letter

The conflict crystallized on August 4, 2024, when CrowdStrike’s outside counsel, Michael Carlinsky of Quinn Emanuel Urquhart & Sullivan, sent a blistering letter to Delta’s legal team. Responding to Delta CEO Ed Bastian’s public threat of litigation, Carlinsky explicitly stated that CrowdStrike’s liability was contractually limited to an amount in the “single-digit millions.” The letter dismissed Delta’s $500 million figure as unmoored from the agreed-upon terms of service. Carlinsky’s correspondence served two purposes. First, it acted as a rigid shield, invoking the June 2022 Subscription Services Agreement to deflect the airline’s massive consequential damages claims. Second, it aggressively pivoted the narrative, accusing Delta of refusing CrowdStrike’s offers of onsite assistance and blaming the airline’s “antiquated IT infrastructure” for the prolonged recovery. This “contributory negligence” defense argued that while other carriers recovered within 48 hours, Delta’s inability to bring its crew tracking and scheduling systems back online was a failure of its own disaster recovery, not the Falcon sensor update itself.

Piercing the Corporate Shield: The Gross Negligence Strategy

To bypass the “single-digit million” cap, Delta’s legal team, led by David Boies, had to escalate the allegations beyond simple error. Under New York and Georgia law (the relevant jurisdictions for the contract and tort claims), contractual liability caps are generally enforceable for ordinary negligence. However, they can be rendered void if the plaintiff proves “gross negligence” or “willful misconduct.” Delta’s complaint, filed in Fulton County Superior Court, was meticulously crafted to meet this high evidentiary bar. The airline argued that CrowdStrike’s failure to test Channel File 291, even once, before deploying it to millions of active sensors constituted a “reckless disregard” for customer safety. By bypassing its own internal “staged rollout” procedures and the Microsoft WHQL certification process, Delta argued, CrowdStrike exercised zero care, fitting the legal definition of gross negligence. The complaint alleged that CrowdStrike “cut corners, took shortcuts, and circumvented the very testing and certification processes it advertised,” thereby forfeiting the protection of the liability cap.

The May 2025 Judicial Ruling

The validity of this strategy was tested in May 2025, when Judge Kelly Lee Ellerbe of the Fulton County Superior Court issued a pivotal ruling. CrowdStrike had moved to dismiss the gross negligence claims, arguing that the outage was a “mistake” inherent to complex software development and that the contract’s LoL clause should end the dispute. Judge Ellerbe rejected CrowdStrike’s motion to dismiss the gross negligence count. In her order, she wrote that Delta’s allegations, specifically that CrowdStrike pushed kernel-level updates without a single valid test run and bypassed established safety protocols, were “sufficient to state a claim for gross negligence.” The court noted that while software bugs are common, the complete absence of deployment testing for a file with Ring 0 privileges could be interpreted by a jury as a conscious indifference to consequences.

This ruling was a tactical disaster for CrowdStrike. While the judge dismissed Delta’s claims regarding pre-contractual fraud (ruling that the 2022 contract superseded prior marketing claims), allowing the gross negligence claim to proceed meant the “single-digit million” cap was no longer guaranteed. The dispute would move to discovery, exposing CrowdStrike’s internal communications and development logs to forensic scrutiny.

The ‘Two Times Fees’ Clause

The specific mechanics of the liability cap, as revealed in court filings, limited damages to “two times the fees paid” during the relevant subscription term. For a client of Delta’s size, paying estimated annual fees in the low millions, this formula produced the “single-digit” figure cited by Carlinsky. The clause also explicitly excluded “consequential damages,” such as lost revenue, flight cancellations, and reputational harm, which are precisely the categories comprising the bulk of Delta’s $500 million demand. If the court finds CrowdStrike’s actions constituted ordinary negligence, the cap holds, and Delta recovers a trivial fraction of its losses. If a jury finds gross negligence, the cap evaporates, potentially exposing CrowdStrike to the full half-billion-dollar judgment. This binary outcome has turned the litigation into an all-or-nothing battle over the definition of “recklessness” in software engineering.
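The binary outcome described above reduces to simple arithmetic, sketched here in Python. The annual fee used in the usage note is a purely hypothetical placeholder (the article says only “low millions”), and the function deliberately ignores the separate consequential-damages exclusion and any punitive damages, so it illustrates the cap mechanism rather than predicting an award.

```python
def recoverable_damages(claimed: int, fees_paid: int,
                        gross_negligence: bool, multiple: int = 2) -> int:
    """Illustrative model of a 'two times fees paid' liability cap.

    fees_paid is an assumed input; the real contract figure is not public
    in this article. If gross negligence is found, the cap is treated as
    void and the full claim is exposed.
    """
    cap = multiple * fees_paid
    if gross_negligence:
        return claimed
    return min(claimed, cap)
```

With a hypothetical $4 million in fees, `recoverable_damages(500_000_000, 4_000_000, False)` yields 8,000,000, a single-digit-million figure, while the same call with `gross_negligence=True` yields the full 500,000,000.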

Absence of Canary Deployment: The Failure to Test on a Subset of Hosts

The “Configuration” Fallacy

At the core of this failure lay a dangerous internal classification. CrowdStrike’s engineering distinguished sharply between “Sensor Content” (the executable code of the Falcon agent) and “Rapid Response Content” (the definition files used to identify threats). Sensor updates were subject to a rigorous, standard software development lifecycle (SDLC) involving internal dogfooding, staged rollouts to early adopters, and, finally, a general availability (GA) release. Customers retained control over these sensor updates, with many opting for “N-1” or “N-2” policies to delay installation until stability was proven.

Conversely, Channel File 291 was categorized as Rapid Response Content. CrowdStrike viewed these files not as software but as configuration data, akin to a virus signature update. Because the company treated this content as benign “data” rather than executable logic, it bypassed the safety rings established for the sensor itself. The Post Incident Review (PIR) released on July 24, 2024, confirmed this fatal distinction: while the *code* that interpreted the file (the Template Type) had been tested months earlier, the specific *instance* of the file released on July 19 was pushed to all eligible online sensors instantly.

This classification ignored the technical reality that in a kernel-mode driver, “configuration” data that dictates memory management and logic execution is functionally indistinguishable from code. When the Falcon sensor read the malformed file, it executed the instructions within the kernel’s Ring 0. By exempting this content from staged deployment, CrowdStrike allowed a data file to crash the operating system without the safeguards applied to the driver itself.

The 78-Minute Blast Radius

The absence of a canary group meant that the “blast radius” of the error was total. In standard DevOps practices, a “canary deployment” involves pushing an update to a small, non-critical percentage of the user base (e.g., 1% or internal test machines) to monitor for adverse effects. If the canary “dies” (crashes), the rollout halts automatically, containing the damage. CrowdStrike’s delivery system for Rapid Response Content possessed no such brake. Between 04:09 UTC and 05:27 UTC, the update flowed unimpeded to every Windows host running Falcon sensor version 7.11 or higher that was connected to the internet. The velocity of this propagation was designed to counter fast-moving cyber threats, yet it functioned as a method for mass instability.

Had CrowdStrike deployed Channel File 291 to even a modest internal test group of 1,000 machines, the Blue Screen of Death (BSOD) loops would have manifested immediately. The crash was deterministic; it did not depend on rare race conditions or specific user behaviors. It occurred upon the sensor reading the file. A canary group of any size would have generated a spike in crash telemetry, triggering an automatic rollback before the file left CrowdStrike’s internal network. Instead, the “testers” were the critical infrastructure of global airlines, hospitals, and banks.
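The canary mechanism described above can be sketched in a few lines of Python. This is a generic illustration of the technique, not a reconstruction of any CrowdStrike system: `staged_rollout`, `apply_update`, and the 1% default are all assumptions chosen for the example, and `apply_update` is a caller-supplied function that returns `False` when a host crashes.

```python
from typing import Callable, Sequence


def staged_rollout(hosts: Sequence, apply_update: Callable[[object], bool],
                   canary_percent: int = 1, max_crashes: int = 0) -> dict:
    """Push an update to a small canary group first; halt if canaries crash."""
    if not hosts:
        raise ValueError("no hosts to update")

    # Integer arithmetic avoids float rounding; always keep at least one canary.
    canary_count = max(1, len(hosts) * canary_percent // 100)
    canaries, remainder = hosts[:canary_count], hosts[canary_count:]

    crashes = sum(1 for host in canaries if not apply_update(host))
    if crashes > max_crashes:
        # The brake: the rest of the fleet never receives the update.
        return {"status": "halted", "updated": canary_count, "crashed": crashes}

    for host in remainder:
        apply_update(host)
    return {"status": "completed", "updated": len(hosts), "crashed": crashes}
```

With a fleet of 1,000 simulated hosts and an update that crashes deterministically on every host, the rollout halts after 10 canaries and the remaining 990 are untouched. A production system would also wait for health telemetry between rings rather than checking a synchronous return value.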

Comparison of Deployment Protocols (Pre-Incident)

The contrast between how CrowdStrike handled sensor updates versus content updates illustrates the negligence argument. The following table reconstructs the deployment protocols in place on July 19, 2024, based on the company’s admission in the PIR.

| Protocol Aspect | Sensor Update (Agent Code) | Rapid Response Content (Channel File 291) |
|---|---|---|
| Deployment Strategy | Staged Rings (Dogfood → Early Adopter → GA) | Simultaneous Global Push |
| Canary Testing | Mandatory internal and external subsets | None (Direct to production) |
| Customer Control | High (N-1, N-2 policies allowed delays) | None (Bypassed update policies) |
| Validation Rigor | Full SDLC (Unit, Integration, Stress tests) | Automated Validator (which contained a bug) |
| Rollback Speed | Granular, policy-based | Manual intervention required (78-minute delay) |

The “Gross Negligence” Argument

In the litigation following the outage, particularly *Delta Air Lines v. CrowdStrike*, the absence of canary testing serves as the primary evidence for “gross negligence.” While standard negligence involves simple carelessness, gross negligence implies a reckless disregard for the safety or rights of others. Plaintiffs argue that pushing a kernel-interacting update to 8.5 million devices without a single live test on a canary machine constitutes a voluntary assumption of extreme risk. Legal filings cite industry standards from Microsoft, Google, and Amazon Web Services, all of which mandate staged rollouts for any change capable of impacting system availability. By deviating from this universal IT reliability standard, CrowdStrike arguably breached the duty of care owed to its clients. The argument posits that the crash was not an “unforeseeable accident” but a statistical certainty given the deployment method. If you bypass testing on a subset, you implicitly accept that the entire fleet is the test subject. Delta’s complaint emphasizes that CrowdStrike’s marketing materials touted “cloud- AI” and “rigorous validation,” creating a reasonable expectation of safety. The fact that the “Rapid Response” channel bypassed the very safety method (staged rollouts) that CrowdStrike advertised for its sensor updates is framed as a “bait and switch” on reliability.

The Illusion of Customer Control

The failure to use canary deployments also exposed a serious breach of customer trust regarding control. Many enterprise CIOs maintained “N-1” or “N-2” sensor policies specifically to avoid being the “first to fail” when new updates arrived. These organizations believed their conservative update policies shielded them from day-zero bugs. The July 19 incident shattered this illusion. Because Channel File 291 was a “content” update, it ignored the sensor version policies. An organization running a sensor version from two months prior (N-2) received the faulty content file at the exact same moment as an organization running the latest bleeding-edge version. The absence of a staged rollout meant that conservative customers were stripped of the buffer they believed they had contractually secured. This specific method, bypassing customer-defined stability policies, supports the “computer trespass” and “unauthorized update” claims currently proceeding in the Georgia courts.

Post-Incident Validation of Negligence

In its remediation plan released alongside the PIR, CrowdStrike announced it would immediately implement “staged deployment for Rapid Response Content,” starting with a canary deployment. While intended to restore confidence, this admission serves legally as a “subsequent remedial measure.” While inadmissible to prove negligence in many contexts, in the court of public opinion and shareholder confidence it functions as a confirmation that the capability to stage these rollouts existed or could have been built prior to the disaster. The fix was not a technological breakthrough; it was a procedural adjustment. The fact that CrowdStrike could implement canary rings for content updates *after* the fact suggests that the decision not to do so *before* July 19 was a choice: a choice to prioritize the speed of threat signature distribution over the stability of the host operating system. That choice, and the catastrophic silence of the canaries that were never deployed, defines the liability CrowdStrike faces.

Congressional Scrutiny: CEO George Kurtz's Testimony on Quality Control Gaps

The Summons and the Substitute Witness

On September 24, 2024, the House Committee on Homeland Security convened to exact answers regarding the largest information technology outage in history. While Committee Chair Mark Green and Subcommittee Chair Andrew Garbarino had initially issued a letter on July 22 demanding the presence of CrowdStrike CEO George Kurtz to explain the “catastrophe,” the executive suite dispatched Adam Meyers, Senior Vice President of Counter Adversary Operations, to testify. Meyers appeared before the Subcommittee on Cybersecurity and Infrastructure Protection, serving as the company’s proxy to address the structural failures that paralyzed 8.5 million Windows devices. His testimony, delivered under oath, provided the official public confirmation of the internal procedural voids that plaintiffs in the Plymouth County and Delta Air Lines litigation would later cite as evidence of gross negligence.

The atmosphere in the Rayburn House Office Building was charged with bipartisan frustration. Representative Green opened the proceedings by comparing the outage to a fictional disaster, stating, “A global IT outage that impacts every sector of the economy is a catastrophe that we would expect to see in a movie.” He noted that while nation-state actors typically execute such disruptions, this event stemmed from an internal error, adding, “We cannot allow a mistake of this magnitude to happen again.” The hearing focused not on the technical glitch but on the governance decisions that allowed a single file to bypass standard safety checks.

The “Perfect Storm” Admission

Meyers characterized the July 19 incident as a “perfect storm” of defects, a phrase that drew sharp scrutiny from lawmakers who viewed the failure as a predictable outcome of insufficient testing. The core of his testimony centered on the “Content Validator,” the internal software tool designed to check updates before release. Meyers admitted to the committee that this tool contained a logic error that allowed the malformed Channel File 291 to pass as valid.

Detailed questioning from Representative Garbarino forced Meyers to explain the specific mechanics of the failure. Meyers revealed that the update introduced a new threat detection capability requiring 21 input fields. However, the sensor running on customer endpoints was configured to expect only 20 inputs. When the sensor attempted to read the 21st field, it triggered an out-of-bounds memory read, crashing the Windows operating system. The Content Validator, which should have flagged this mismatch, failed to do so because of a bug in its own code. This admission was significant: it established that the safety method itself was defective and had not been adequately tested against the specific “Template Type” used in the update.
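The mismatch Meyers described, 21 fields referenced against 20 inputs supplied, is the kind of defect a simple bounds check catches before release. The sketch below is illustrative only: `validate_instance` and `read_field` are hypothetical names, the field-index representation is an assumption, and nothing here reflects the actual Content Validator or sensor code.

```python
SUPPLIED_INPUTS = 20  # number of inputs the sensor provides, per the testimony


def validate_instance(field_indexes, supplied_inputs: int = SUPPLIED_INPUTS) -> list:
    """Return a list of problems; an empty list means the instance passes.

    field_indexes: zero-based indexes of the input fields the content
    instance expects to read (an assumed representation).
    """
    return [
        f"field index {i} is outside the {supplied_inputs} supplied inputs"
        for i in field_indexes
        if not 0 <= i < supplied_inputs
    ]


def read_field(inputs: list, index: int):
    """Defensive read: reject an out-of-range index instead of reading past the end."""
    if not 0 <= index < len(inputs):
        raise ValueError(f"refusing out-of-bounds read at index {index}")
    return inputs[index]
```

An instance referencing fields 0 through 20 (21 fields) produces exactly one problem, for index 20, while one referencing fields 0 through 19 passes. The second function shows the complementary runtime guard: even if validation is skipped or buggy, a bounds check at the point of use turns a crash into a handled error.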

The “Content vs. Code” Distinction

The most damaging admission for CrowdStrike’s legal defense came when Meyers explained why the update bypassed the company’s standard staged rollout procedures. For years, CrowdStrike had distinguished between “sensor updates” (executable code) and “content updates” (threat definition files). Sensor updates underwent rigorous testing, including canary deployments to a small subset of machines before global release. Content updates, however, were treated as benign data streams, similar to antivirus signature files, and were pushed to all customers simultaneously to combat “fast-moving adversaries.”

Meyers admitted to the subcommittee that Channel File 291 was categorized as “Rapid Response Content,” a classification that exempted it from the staged deployment rings. “The content updates had not previously been treated as code because they were strictly configuration information,” Meyers testified. This policy decision meant that the faulty file was transmitted to millions of sensors concurrently, without a “canary” test on a live machine that would have immediately revealed the Blue Screen of Death (BSOD). Legal observers noted that this testimony conceded that the company prioritized speed over safety for this class of updates, a central pillar of the shareholder negligence claims.
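The value of a canary stage can be sketched in a few lines. The ring names, host counts, and the `crashes_on_ring` probe below are illustrative assumptions, not CrowdStrike's actual deployment system; the sketch only shows how gating each ring on the health of the previous one limits exposure to a defective update.

```python
from dataclasses import dataclass


@dataclass
class Ring:
    name: str
    hosts: int


def staged_rollout(rings, crashes_on_ring, max_crash_rate=0.0):
    """Deploy ring by ring and stop at the first unhealthy ring.

    crashes_on_ring is a hypothetical probe: given a ring, it returns how
    many hosts crashed after receiving the update.
    Returns (hosts_updated, halted_at), where halted_at is a ring name or None.
    """
    hosts_updated = 0
    for ring in rings:
        crashed = crashes_on_ring(ring)
        hosts_updated += ring.hosts
        if ring.hosts and crashed / ring.hosts > max_crash_rate:
            return hosts_updated, ring.name  # halt before the next ring
    return hosts_updated, None


# Illustrative ring sizes, chosen only for the example.
RINGS = [
    Ring("internal", 100),
    Ring("early_adopter", 10_000),
    Ring("general_availability", 8_000_000),
]


def faulty_update(ring):
    # Assumption for the sketch: the defective file crashes every host it reaches.
    return ring.hosts


def healthy_update(ring):
    return 0


exposed, halted_at = staged_rollout(RINGS, faulty_update)
full_reach, full_halt = staged_rollout(RINGS, healthy_update)
```

Under these assumptions the faulty update stops after the first ring, whereas a simultaneous push would have reached every host in the list at once.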

Scrutiny of Kernel-Level Access

The hearing also addressed the risks associated with CrowdStrike’s operation at “Ring 0,” the kernel level of the Windows operating system. Lawmakers questioned whether a third-party vendor should possess the ability to crash the entire system. Representative Morgan Luttrell challenged the necessity of such deep integration, asking if the company could operate in “user mode,” which would isolate crashes to the application rather than the operating system.

Meyers defended the kernel-level architecture, arguing that it provides the necessary visibility to detect sophisticated threats that attempt to disable security software. He stated, “The kernel is the central most part of an operating system… having visibility into that is critical to being able to stop the adversary.” While he acknowledged the risk, he insisted that the architectural choice was industry standard for endpoint detection and response (EDR) tools. However, this defense did little to assuage concerns that the company wielded too much power over the stability of global infrastructure without commensurate safeguards.

Commitments to Procedural Overhaul

Under pressure from the committee, Meyers outlined the remedial measures CrowdStrike had implemented following the outage. These changes directly addressed the gaps exposed by the incident and served as a tacit admission that the previous protocols were insufficient. The new framework included:

| Procedural Gap | Remedial Action Testified by Meyers |
|---|---|
| Rapid Response Exemption | All content updates are treated with the same rigor as code, undergoing staged rollouts. |
| Testing Environment | Introduction of “canary” deployments where updates are tested on internal and early-adopter rings before general release. |
| Customer Control | Customers are granted the ability to select their deployment ring (e.g., “General Availability” vs. “Early Adopter”) to prevent simultaneous global updates. |
| Validator Logic | Enhanced validation checks to ensure input parameters match sensor expectations explicitly. |

Meyers emphasized that these changes would prevent a recurrence, stating, “We have introduced new validation checks… and gradual rollout across increasing rings of deployment.” While these pledges aimed to restore confidence, they also highlighted that standard industry practices, specifically staged rollouts for configuration changes, were absent at the time of the outage.
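The “Validator Logic” commitment, checking explicitly that input parameters match what the sensor supplies, might look something like the following sketch. The class names, fields, and the `validate` function are hypothetical and are not drawn from CrowdStrike's tooling; the example assumes a template type that declares a number of inputs and an instance that carries one matching criterion per input.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class TemplateType:
    name: str
    defined_inputs: int  # fields the template definition declares


@dataclass(frozen=True)
class TemplateInstance:
    template: TemplateType
    criteria: tuple  # one matching criterion per declared field


def validate(instance, sensor_supplied_inputs):
    """Return a list of problems; an empty list means the instance may ship."""
    problems = []
    declared = instance.template.defined_inputs
    if declared != sensor_supplied_inputs:
        problems.append(
            f"template declares {declared} inputs, "
            f"sensor supplies {sensor_supplied_inputs}"
        )
    if len(instance.criteria) != declared:
        problems.append(
            f"instance has {len(instance.criteria)} criteria, expected {declared}"
        )
    return problems


# Hypothetical template mirroring the 21-versus-20 mismatch from the testimony.
ipc_type = TemplateType(name="ipc", defined_inputs=21)
instance = TemplateInstance(template=ipc_type, criteria=tuple("*" for _ in range(21)))

mismatch_report = validate(instance, sensor_supplied_inputs=20)
aligned_report = validate(instance, sensor_supplied_inputs=21)
```

The design point is that the check compares the declared count against the sensor's supplied count directly, rather than inferring validity from the instance's own contents.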

The “Dress Rehearsal” for Adversaries

A recurring theme throughout the hearing was the national security implication of the outage. Chair Green cited CISA Director Jen Easterly’s comment that the incident served as a “dress rehearsal for what China may want to do to us.” Lawmakers expressed alarm that a single vendor’s error could achieve what foreign adversaries spend billions attempting to accomplish: the simultaneous disruption of American aviation, healthcare, and banking sectors.

Meyers acknowledged this reality, apologizing repeatedly to the committee and the public. “We let our customers down,” he said. “Trust takes years to make and seconds to break.” Despite the apology, the hearing crystallized the view that CrowdStrike’s internal quality control was a single point of failure for the global economy. The testimony provided a verified factual record that plaintiffs in the Delta Air Lines v. CrowdStrike suit would use to argue that the company’s negligence was not a momentary lapse but a systemic failure to anticipate the risks of its own update mechanism.

Legal Ramifications of the Testimony

The transcript of the September 24 hearing became a foundational document for the subsequent litigation. By admitting that the Content Validator was the primary fail-safe and that it contained a bug, Meyers removed the defense that the error was unforeseeable. Furthermore, by confirming that “Rapid Response” updates skipped canary testing, he validated the plaintiffs’ argument that CrowdStrike had bypassed reasonable duty-of-care standards. The distinction between “content” and “code” proved to be a semantic difference with catastrophic physical consequences, a point that judges in the Western District of Texas and the Superior Court of Fulton County would later examine when ruling on motions to dismiss.

Post-Incident Protocol Overhaul: The Shift to Staged Deployment for All Content

Questions And Answers

Tell me about the 'Rapid Response' loophole: how Channel File 291 bypassed staged rollouts of CrowdStrike.

The global digital infrastructure faced a catastrophic disruption on July 19, 2024, when CrowdStrike Holdings, Inc. distributed a defective configuration update to millions of Windows systems. This event, now at issue in federal securities litigation, centered on a specific file: `C-00000291-00000000-00000032.sys`, commonly referred to as Channel File 291. The crash did not result from a sophisticated cyberattack or a compromise of CrowdStrike's source code repositories. Instead, it stemmed from an internal failure.

Tell me about the architecture of Channel File 291 of CrowdStrike.

To understand the catastrophic failure of July 19, 2024, one must examine the specific method CrowdStrike uses to update its defenses. The component at the center of this disaster was Channel File 291. This file is not a driver binary or a traditional software executable. It is a configuration file. Specifically, it belongs to a category CrowdStrike calls "Rapid Response Content." These files contain behavioral heuristics and rules that the.

Tell me about the parameter count mismatch of CrowdStrike.

The technical root cause of the outage was a logic error involving a gap in input parameters. The IPC Template Type used for this specific update defined twenty-one distinct input fields. These fields represent different attributes or data points that the sensor needs to evaluate to decide if a Named Pipe is malicious. The definition file, which acts as a blueprint for the content, stated that twenty-one inputs were required.

Tell me about the wildcard factor of CrowdStrike.

A disturbing question arises from this analysis: why did this not happen sooner? CrowdStrike had released updates to Channel File 291 before July 19. The feature itself was introduced in February 2024 with sensor version 7.11. Between March and April 2024, the company deployed three other Template Instances using this same IPC Template Type. Those updates did not crash systems. They functioned perfectly. The difference on July 19 was.

Tell me about the failure of the Content Validator of CrowdStrike.

The existence of a bug in the driver code is serious, but software bugs are inevitable. The true negligence lies in how this buggy file escaped into the wild. CrowdStrike employs an automated testing system known as the Content Validator. This tool is responsible for checking every configuration file before it is pushed to the content delivery network. The Content Validator should have rejected the malformed Channel File 291. It did not.

Tell me about the widespread fragility in kernel design of CrowdStrike.

This incident exposes a deep fragility in the architecture of modern Endpoint Detection and Response (EDR) systems. To stay ahead of adversaries, companies like CrowdStrike built mechanisms to push updates instantly, bypassing the slow and cautious certification process required for kernel drivers. They achieved this by creating a "driver that reads data" rather than updating the driver itself. Channel File 291 was technically just data. Microsoft does not require a.

Tell me about the arithmetic of failure: 21 inputs vs. 20 expectations of CrowdStrike.

At the core of the July 19, 2024, global outage lay a rudimentary arithmetic error that no modern software supply chain should permit, let alone one operating at the Windows kernel level. The technical root cause was not a sophisticated cyberattack or a complex race condition, but a structural mismatch between the engine and its fuel. The Falcon sensor's code, running on millions of endpoints, was hardwired to accept 20 input.

Tell me about the validator's blind spot of CrowdStrike.