| Bot Tier | Initial Cost | Recurring Fee | Payment Method | Risk Level |
|---|---|---|---|---|
| Public Scripts | $50-$100 | None | CashApp / Venmo | High (Easily Detected) |
| ‘ShopperX’ Class | $400 | $150 per $1,200 earned | Bitcoin / USDT | Medium (Remote Kill Switch) |
| Private / Invite-Only | $1,000+ | $200+ Weekly | Monero (XMR) / BTC | Low (Frequent Updates) |

The Scam Economy

The opacity of this market has spawned a secondary economy of scammers preying on desperate shoppers. For every functional “ShopperX” or “Lucky” bot, there are dozens of fraudulent listings. Scammers create convincing Telegram channels, complete with fake testimonials and doctored screenshots of high earnings. They demand payment in crypto, deliver a non-functional file (or malware), and block the victim. These “honey traps” exploit the desperation of honest shoppers who feel they cannot compete without cheating. In late 2023, a wave of scams involving a fake bot named “InstaGod” defrauded users of thousands of dollars, illustrating the total absence of consumer protection in this black market.

GPS Spoofing: Faking Proximity to 'High-Demand' Store Zones

The Geofence Mandate: Priority Access and the Parking Lot Trap

Instacart’s batch allocation algorithm operates on a strict proximity basis, a system designed to minimize delivery times yet one that inadvertently birthed a hostile environment for honest contractors. The platform uses a “Priority Access” feature, frequently visualized as a highlighted circle or “bubble” around high-demand retailers like Costco or Wegmans. Shoppers physically located within this geofence, frequently defined as a radius of 0.5 miles or less, receive offers before those further away. This mechanic forces legitimate workers to idle in store parking lots for hours, wasting fuel and time in hopes of securing a viable order. The “parking lot culture” is not a habit but a requirement for survival on the app, as the algorithm penalizes distance with silence. Yet, for illicit operators, this physical tether is non-existent.

Android’s ‘Mock Location’ Vulnerability

The technical foundation of proximity fraud lies in the Android operating system’s “Developer Options,” specifically the “Select mock location app” feature. While intended for legitimate software testing, this API allows users to overwrite the device’s true Global Positioning System coordinates with falsified data. Illicit bot rings exploit this capability to project their digital presence into high-value zones without leaving their homes. By using readily available tools such as “Fake GPS Joystick” or “GPS Emulator,” a spoofer can position their account marker directly inside a Costco warehouse while physically residing miles away. This manipulation grants them the “Priority Access” status reserved for shoppers waiting on-site, cutting the line ahead of workers who are physically present.

The ‘Jitter’ Evasion Technique

Early attempts at GPS spoofing were easily detectable because they broadcast a static, unmoving coordinate. Real GPS signals naturally fluctuate due to atmospheric interference and signal reflection, a phenomenon known as “drift.” To counter detection algorithms that flag perfectly stationary accounts, sophisticated bot software incorporates “jitter” mechanics. These scripts automatically introduce micro-variations to the falsified latitude and longitude, simulating the natural movement of a human holding a phone or walking near a store entrance. This calculated randomness mimics the erratic data signature of a genuine GPS receiver, allowing the spoofer to blend in with the noise of legitimate location data.

Altitude Discrepancies and Rooted Devices

Instacart’s security team employs telemetry analysis to identify anomalies in location data, specifically looking for altitude mismatches. A genuine GPS signal includes elevation data relative to sea level, which varies based on the user’s actual environment. Basic spoofing applications fail to replicate this vertical coordinate, broadcasting a default altitude of zero or a fixed value that contradicts the local topography. To bypass this check, advanced bot rings use “rooted” Android devices, phones with privileged administrative access, to inject falsified altitude data that matches the target store’s elevation. In addition, rooting allows the installation of modules that hide the “Mock Location” flag from the Instacart application, preventing the software from recognizing that the GPS data is being manipulated.
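
Seen from the defender's side, the altitude check described above can be sketched in a few lines. This is only an illustration, not Instacart's actual logic: the function name, the 30-meter tolerance, and the idea of a known store elevation (`expected_m`) are all assumptions made for the example.

```python
from typing import List


def altitude_flags(samples_m: List[float], expected_m: float,
                   tolerance_m: float = 30.0) -> List[str]:
    """Return anomaly labels for a series of reported altitudes (meters).

    expected_m is a hypothetical known elevation for the store location;
    tolerance_m is an assumed allowance for normal GPS vertical error.
    """
    if not samples_m:
        return []
    flags = []
    # Every sample far from the local elevation, e.g. a default of zero.
    if all(abs(s - expected_m) > tolerance_m for s in samples_m):
        flags.append("mismatch")
    # Genuine receivers drift; several identical readings look synthetic.
    if len(samples_m) >= 3 and len(set(samples_m)) == 1:
        flags.append("constant")
    return flags


spoofed = altitude_flags([0.0, 0.0, 0.0], expected_m=250.0)
genuine = altitude_flags([248.1, 251.7, 249.9], expected_m=250.0)
```

A device reporting zero altitude at a store that sits 250 meters above sea level trips both checks, while a naturally drifting signal trips neither.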

Teleportation and the ‘Soft Ban’ Threshold

A major risk for spoofers is the “teleportation” error, where an account jumps between two distant locations faster than physically possible. If a bot user snipes a batch in San Francisco immediately after appearing in Oakland, the platform’s velocity checks trigger a “soft ban,” locking the account for 24 hours. To mitigate this, modern illicit software includes “cooldown” timers that calculate the realistic travel time between the user’s last known location and the new target. The software prevents the user from logging in or accepting orders until enough time has passed to simulate a drive, automating the patience required to evade velocity-based fraud detection.
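
A velocity check of the kind described above is simple to express. The sketch below is a generic illustration rather than the platform's real implementation: it computes the great-circle distance between two location fixes with the haversine formula and flags any jump whose implied speed exceeds an assumed ceiling of 130 km/h. The `Fix` type, the function names, and the threshold are hypothetical.

```python
import math
from dataclasses import dataclass

EARTH_RADIUS_KM = 6371.0


@dataclass
class Fix:
    lat: float          # degrees
    lon: float          # degrees
    timestamp_s: float  # seconds since some epoch


def haversine_km(a: Fix, b: Fix) -> float:
    """Great-circle distance between two fixes, in kilometers."""
    phi1, phi2 = math.radians(a.lat), math.radians(b.lat)
    dphi = phi2 - phi1
    dlmb = math.radians(b.lon - a.lon)
    h = math.sin(dphi / 2) ** 2 + math.cos(phi1) * math.cos(phi2) * math.sin(dlmb / 2) ** 2
    return 2 * EARTH_RADIUS_KM * math.asin(math.sqrt(h))


def implied_speed_kmh(prev: Fix, curr: Fix) -> float:
    """Speed needed to travel from prev to curr in the elapsed time."""
    dist = haversine_km(prev, curr)
    elapsed_h = (curr.timestamp_s - prev.timestamp_s) / 3600.0
    if elapsed_h <= 0:
        # No time passed: any movement at all is physically impossible.
        return math.inf if dist > 0 else 0.0
    return dist / elapsed_h


def is_teleport(prev: Fix, curr: Fix, max_speed_kmh: float = 130.0) -> bool:
    """Flag a jump that implies travel faster than the assumed ceiling."""
    return implied_speed_kmh(prev, curr) > max_speed_kmh


oakland = Fix(37.8044, -122.2712, 0.0)
sf_one_minute_later = Fix(37.7749, -122.4194, 60.0)
sf_half_hour_later = Fix(37.7749, -122.4194, 1800.0)
```

Oakland to San Francisco is roughly 13 km in a straight line, so covering it in one minute implies about 800 km/h and is flagged, while the same trip over thirty minutes is not.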

The of Labor

The proliferation of GPS spoofing creates a distinct economic schism between rule-abiding contractors and fraudulent actors. Honest shoppers are bound by the laws of physics and the costs of operation; they must expend gasoline to enter the priority zone and endure the physical discomfort of waiting in vehicles. Spoofers incur none of these costs. They can monitor multiple high-demand zones simultaneously, jumping their digital location to whichever store shows the highest activity on the “heat map.” This asymmetry drains the earning potential of legitimate workers, who watch their screens remain empty while “ghost” shoppers claim the most lucrative batches from the comfort of their living rooms.

Instacart’s Countermeasures and Failures

Instacart has attempted to curb this behavior by tightening geofences and implementing “You are not at the store” error messages when a shopper attempts to start a batch without valid GPS verification. The company also uses Bluetooth beacons and Wi-Fi triangulation in partner stores to verify physical presence. Yet the bot developers respond with rapid updates that spoof Wi-Fi SSIDs or randomize device identifiers. The arms race continues, with the platform’s detection logic frequently lagging behind the adaptability of the black market software. Consequently, the “Priority Access” circle remains a contested digital territory where the advantage heavily favors those willing to manipulate the code.

The 'Unicorn' Batch: Automating Selection by Dollar-to-Mile Ratios

In the lexicon of the gig economy, a “Unicorn” represents the apex of profitability: a single order offering triple-digit pay, minimal driving distance, and a manageable item count. For an honest shopper, spotting one is a rare stroke of luck, a momentary flash on a smartphone screen that induces a rush of adrenaline. For a user of illicit automation software, however, luck is a variable that has been systematically eliminated. The acquisition of these high-value batches is no longer a function of chance or reflex but a calculated output of rigid mathematical logic.

The architecture of modern batch-grabbing software relies on a fundamental inversion of the standard user experience. While a legitimate worker must visually scan, interpret, and physically tap a notification, the bot operates at the data layer, intercepting the raw JSON payload from Instacart’s servers before it ever renders on a device. Inside this payload, the variables that define a batch’s value (`total_pay`, `total_distance`, and `item_count`) are exposed as plain text. The software parses these figures in microseconds, applying a user-defined algorithm to determine viability. If the batch meets the criteria, the accept signal is sent back to the server in under 100 milliseconds, a speed that renders human competition biologically impossible.

The Algorithm of Greed

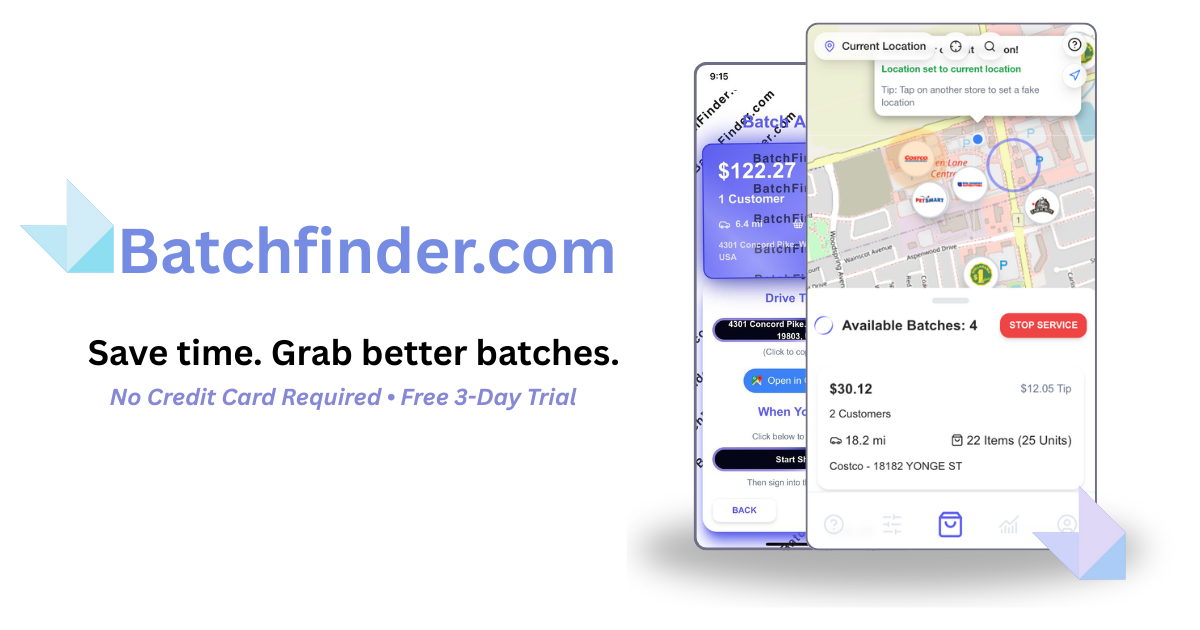

The primary metric driving this automation is the dollar-to-mile ratio. In the settings panel of bots like “ShopperX” or “BatchFinder,” users are presented with a dashboard of sliders and input fields that resemble a high-frequency trading terminal more than a grocery delivery app. Here, the operator defines their floor. A common configuration might demand a minimum payout of $50, a maximum travel distance of 5 miles, and a tip threshold of at least $20. But the sophistication goes deeper. Advanced scripts allow for compound logic. A user might set a rule that accepts a $30 order only if the item count is under 15 units and the delivery distance is under 3 miles. This creates a “profitability fence” around the user’s account. Any order falling outside these parameters is ignored, leaving the scraps (the $7 batches with 50 items and 10-mile drives) to the manual shoppers who are desperate for any work at all.
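
The gap between the two ends of this market becomes clearer once the ratio is worked out. The short calculation below uses only the figures quoted above; the function name is an illustrative choice, not a detail of any real tool.

```python
def dollars_per_mile(pay_usd: float, miles: float) -> float:
    """Return payout per mile driven; miles must be positive."""
    if miles <= 0:
        raise ValueError("miles must be positive")
    return pay_usd / miles


# The common floor quoted above: $50 minimum payout over at most 5 miles.
floor_ratio = dollars_per_mile(50, 5)

# The "scrap" batch left for manual shoppers: $7 for a 10-mile drive.
scrap_ratio = dollars_per_mile(7, 10)

# How many times more a floor-level batch pays per mile than a scrap batch.
gap = floor_ratio / scrap_ratio
```

At the quoted floor a batch pays $10 per mile, while the leftover batch pays $0.70 per mile, a difference of more than fourteen to one before item count is even considered.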

| Step | Human Shopper | Automated Bot |
|---|---|---|
| Detection | Wait for push notification and screen refresh (1-3 seconds). | Intercept API payload immediately upon transmission (10-50 ms). |
| Analysis | Read store name, pay, miles, and items. Calculate worth mentally (2-5 seconds). | Parse variables against pre-set logic: `if (pay / miles > 2.5)` (1 ms). |
| Action | Physical finger movement to swipe or tap “Accept” (300-500 ms). | Send HTTP POST request to /accept_batch endpoint (10-20 ms). |
| Result | “Batch no longer available” error message. | Order secured and locked to account. |
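
Adding up the stage timings in the table shows the size of the gap. The snippet below is simple arithmetic on the ranges quoted there, with the one-millisecond analysis step treated as a fixed value; the variable names are illustrative.

```python
from typing import Dict, Tuple

# Stage latency ranges in milliseconds, copied from the table above.
HUMAN_MS: Dict[str, Tuple[int, int]] = {
    "detection": (1000, 3000),
    "analysis": (2000, 5000),
    "action": (300, 500),
}
BOT_MS: Dict[str, Tuple[int, int]] = {
    "detection": (10, 50),
    "analysis": (1, 1),
    "action": (10, 20),
}


def total_range(stages: Dict[str, Tuple[int, int]]) -> Tuple[int, int]:
    """Sum the best-case and worst-case latency across all stages."""
    low = sum(lo for lo, _ in stages.values())
    high = sum(hi for _, hi in stages.values())
    return low, high


human_total = total_range(HUMAN_MS)
bot_total = total_range(BOT_MS)

# Fastest possible human compared with the slowest possible bot.
best_case_gap = human_total[0] / bot_total[1]
```

Even the fastest human in this model needs 3.3 seconds end to end, while the slowest bot finishes in 71 milliseconds, so the human's best case is still more than 46 times slower than the bot's worst case.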

Eliminating the “Blind Swipe”

For manual shoppers, the pursuit of high-paying orders carries a significant risk known as the “blind swipe.” When a notification appears with a high dollar amount, the shopper knows they have less than a second to react. In this panic, they frequently swipe without reading the details, only to discover they have agreed to transport 40 cases of water to a third-floor walk-up apartment 15 miles away. The bot removes this hazard entirely. By pre-validating every parameter against the user’s settings, the software ensures that no “bad” batch is ever accepted. The bot filters out “heavy pay” orders unless the compensation exceeds a specific multiplier. It rejects orders from blacklisted stores known for long checkout lines or poor inventory. This pre-screening capability transforms the gig from a gamble into a rigged game. The bot user does not just win more frequently; they win only the bets that are guaranteed to pay out.

The Ghost Notification

The proliferation of this technology has birthed a phenomenon known among drivers as the “ghost notification.” A shopper’s phone chimes, alerting them to an $80 batch at a nearby Costco. By the time their eyes shift to the screen, a fraction of a second later, the screen is blank. The batch did not “vanish” in the mystical sense; it was claimed by a script running on a server or a rooted Android device before the legitimate app could even finish drawing the pixels of the offer card. This reality creates a psychological toll on the workforce. Shoppers report staring at their screens for hours in parking lots, terrified to blink or look away, only to be beaten repeatedly by an invisible adversary. The “Unicorn” has shifted from a symbol of good fortune to a marker of widespread inequity. In 2024 and 2025, online forums for gig workers filled with screenshots of these missed opportunities, accompanied by the realization that the playing field had tilted irrevocably against biological operators.

The Market for “God Mode”

Developers of these illicit tools market their software with aggressive promises of “passive income” and “dominating your zone.” Subscription tiers for these bots can run upwards of $150 per month, a fee that users justify by snagging just one or two Unicorns a week. The return on investment is clear: a bot that secures two $100 orders has already covered a $150 monthly fee. This economic incentive drives a continuous arms race. As Instacart patches one vulnerability, bot developers release updates, frequently within days, that bypass the new security measures, sometimes even mocking the platform’s attempts at control in their patch notes. The “Unicorn” batch, once a reward for patience and position, is now a commodity harvested by code. The romantic notion of the lucky break has been replaced by the cold efficiency of the `if/then` statement. For the honest shopper, the only remaining option is to accept the orders the bots deem mathematically unworthy, feeding on the crumbs left behind by the algorithm.

Third-Party Overlays: Abusing Android Accessibility Services for Speed

The Surface-Level Cheat: Weaponizing Accessibility

While API injection represents the sophisticated backend of the illicit batch-grabbing ecosystem, a far more common and accessible method operates directly on the device screen. This technique relies on “overlays” and the abuse of Android Accessibility Services. These tools do not require the complex network decryption keys needed for man-in-the-middle attacks. Instead, they exploit a feature designed to help disabled users navigate their smartphones. By granting an unauthorized application permission to control the screen, cheaters install a digital finger that never tires, never blinks, and reacts faster than human biology allows.

Android Accessibility Services provide a set of APIs intended for screen readers and assistive devices. When a legitimate app uses these services, it can read the text displayed on the screen to a blind user or perform gestures for someone with motor impairments. Illicit bot developers realized years ago that this same permission set grants an application “God mode” over the user interface. A bot with accessibility permissions can read the dollar amount of a batch the microsecond it renders in the view hierarchy. It can then trigger a programmatic click on the “Accept” button before the light from the screen even reaches the shopper’s retina.

The Mechanics of the “Clicker” Bot

The technical operation of these bots is relatively straightforward yet devastatingly effective. The bot runs as a background service with a “draw over other apps” permission. This creates a floating control panel or “overlay” that sits on top of the legitimate Instacart Shopper application. To the operating system, the bot appears to be a helpful utility. In reality, it is a high-speed screen scraper. The bot continuously polls the active window content using the `AccessibilityNodeInfo` class. It scans the tree of UI elements looking for specific keywords or numerical values that match the user’s pre-set filters.

When a new batch appears on the list, the Instacart app generates a standard Android view. The bot intercepts this event. It parses the text fields associated with “Batch Pay,” “Tip,” “Distance,” and “Item Count.” If the batch meets the criteria (for example, a payout over $40 with a distance under 5 miles), the bot executes a `performAction(ACTION_CLICK)` command on the specific coordinate or node ID of the batch. This entire process occurs in milliseconds. The human shopper standing next to the bot user might see a flash of a batch appearing and disappearing instantly. They frequently describe this phenomenon as “ghost batches,” where a notification arrives but the screen is empty by the time they look.

The User Experience: Set It and Forget It

For the cheater, the experience is designed to be low-effort. Upon installing a bot like “BatchFinder” or “ShopperX,” the user is prompted to enable Accessibility Services in the Android settings menu. Android displays a dire warning that the app will have full control of the device, including the ability to read passwords and banking details. Desperate for high-paying orders, thousands of shoppers ignore this warning and grant the permission. Once active, the bot presents a configuration menu. Users set sliders for minimum dollar amounts. They can toggle switches to avoid specific stores or exclude orders with “heavy pay” indicators if they lack the vehicle capacity.

The overlay itself frequently manifests as a small, semi-transparent button or widget floating on the screen. More advanced versions offer a “stealth mode” where the overlay is invisible, or it mimics a benign system tool like a calculator or a volume slider. The user simply opens the Instacart app, activates the bot via the overlay, and waits. The bot handles the refreshing. It handles the scrolling. It handles the acceptance. The user can sit in their car, watching Netflix or scrolling social media on a second device, waiting for the alert that a batch has been secured. This passive income generation model fundamentally breaks the gig economy’s promise of meritocratic effort.

Speed Wars: Milliseconds vs. Biology

The primary advantage of the accessibility bot is pure speed. Human reaction time to a visual stimulus averages around 250 milliseconds. Adding the motor function to move a thumb and tap a specific point on glass adds another 100 to 150 milliseconds. In the competitive environment of a “Costco Drop” (the morning rush when wholesale clubs release hundreds of orders simultaneously), a delay of 400 milliseconds is fatal. An accessibility bot processes the visual tree and executes the click command in under 50 milliseconds. In a digital race, the human is running through molasses.

Bot developers have even engaged in speed wars with each other. Early versions of these clickers simply tapped as fast as possible. This led to a problem known as “collision.” If two bots try to grab the same batch at the exact same millisecond, the Instacart server rejects both or awards it to the request that arrived first at the network level. To combat this, newer overlays introduced “turbo modes” that increase the polling rate of the screen content. This aggressive querying consumes significant battery power and causes the phone to heat up, yet users accept the hardware strain as the cost of doing business.

Detection and the Cat-and-Mouse Game

Instacart is aware of this abuse. The company has implemented various detection methods within the Shopper app to identify illicit overlays. One common method involves scanning the list of installed applications and flagging known package names associated with bot software. In response, bot developers implemented randomization. When a user downloads the bot, the installer generates a unique package name and icon. One user’s bot might look like “Candy Crush” while another’s resembles “System WiFi Tool.” This makes blacklist-based detection nearly impossible.

Another detection vector is behavioral analysis. If an account consistently accepts batches in under 100 milliseconds, it triggers a fraud flag. Instacart’s algorithms look for “superhuman” reflexes. To circumvent this, bot developers added “humanization” delays. The user can configure the bot to wait a random interval, say, between 200 and 400 milliseconds, before clicking accept. This artificial delay makes the interaction appear legitimate to the server’s fraud detection systems while still being faster than a distracted or tired human shopper. The bot never hesitates. It never misclicks. It never second-guesses the mileage.
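
The behavioral check described above can be illustrated with a small sketch. It is not Instacart's actual rule: the use of a median as a stand-in for “consistently,” the five-sample minimum, and the function name are assumptions made for the example; only the 100-millisecond threshold comes from the text.

```python
from statistics import median
from typing import Sequence


def flag_superhuman(latencies_ms: Sequence[float],
                    threshold_ms: float = 100.0,
                    min_samples: int = 5) -> bool:
    """Flag an account whose typical acceptance latency is below the threshold.

    latencies_ms holds the time from offer display to acceptance for an
    account's recent batches. The median is an assumed measure of
    consistency: one lucky fast tap does not trip the flag, a pattern does.
    """
    if len(latencies_ms) < min_samples:
        return False  # too little history to judge
    return median(latencies_ms) < threshold_ms


bot_like = flag_superhuman([40, 55, 38, 60, 45])
human_like = flag_superhuman([420, 380, 900, 510, 95])
too_short = flag_superhuman([40, 50])
```

A check this simple is exactly what a randomized 200-to-400-millisecond delay is built to slip past, which is why latency alone cannot carry the detection burden.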

The Security Nightmare

The proliferation of these tools creates a serious security hole, not just for Instacart but for the gig workers themselves. By granting Accessibility Services to an unverified application downloaded from a Telegram channel, shoppers are handing over the keys to their digital lives. These permissions allow the app to read every keystroke, including passwords for banking apps, email accounts, and the Instacart earnings portal itself. Security researchers have found that some “batch grabber” bots contain code borrowed from banking trojans. The same method used to click “Accept” on a grocery order can be used to click “Transfer” on a banking app while the screen is dimmed or obscured.

There have been reports of bot sellers double-dipping. They charge the shopper a weekly subscription fee for the software, then use the accessibility permissions to harvest the shopper’s login credentials. Some bot rings have been accused of stealing Instacart accounts entirely, locking out the original owner and reselling the “verified” account to another user. The shopper, blinded by the promise of $100 batches, ignores the massive cybersecurity risk they are inviting onto their device. They view the bot as a tool for survival, unaware that they are the product being exploited by the software developers.

The Android Ecosystem Problem

This problem is largely unique to the Android ecosystem. Apple’s iOS operating system has much stricter controls over accessibility permissions and sandboxing, making it significantly harder (though not impossible) to create overlay-style clickers. This has led to a demographic skew in the bot market. Serious cheaters almost exclusively use Android devices. Some shoppers carry two phones: an iPhone for personal use and a “burner” Android phone specifically for running the Instacart app with a bot. This hardware division further complicates Instacart’s ability to police the platform, as the device fingerprinting data becomes inconsistent.

Instacart has attempted to block the use of Accessibility Services entirely for the Shopper app. Yet this method runs into legal and ethical obstacles. Blocking these services would also lock out legitimate shoppers with disabilities who rely on screen readers to work. Bot developers hide behind this shield. They argue that their software is an “assistive tool” for efficiency, blurring the line between a productivity aid and a cheat. Instacart is forced to walk a tightrope, trying to distinguish between a blind user’s screen reader and a bot’s high-speed scraper, a distinction that becomes blurrier with every software update.

The Persistence of the Overlay

Even with aggressive updates from Instacart, the overlay remains the most persistent form of cheating. It is less brittle than API injection, which breaks whenever Instacart changes its encryption keys. The overlay only breaks if Instacart completely redesigns the visual layout of the batch screen. Even then, bot developers can push an update within hours to retarget the new button coordinates. The visual nature of the attack makes it resilient. As long as the information is displayed on a screen for a human to read, a bot can read it faster. The battle for the batch has moved from the server room to the pixel buffer, and in that arena, the machine always holds the advantage.

The 'Bot Ring' Structure: Organized Groups Dominating Warehouse Stores

The Parking Lot Syndicate: Visualizing the Monopoly

In the concrete expanse of a Costco or Sam’s Club parking lot at 9:50 AM, the digital inequality of the gig economy manifests physically. Legitimate shoppers, sitting in their vehicles with a single smartphone, wait for the store’s inventory to sync with the Instacart platform. They are the “scavengers,” hoping for a mid-tier order that might net them $30 for an hour of labor. Nearby, frequently clustered near the store’s entrance or a specific signal-heavy light pole, sits the “syndicate.” These are not loose associations of friends but organized, hierarchical cells designed to extract maximum value from the platform’s batch allocation algorithm.

Observers and frustrated independent contractors frequently refer to these groups as the “Instacart Mafia” or “Bot Rings.” Their presence is unmistakable. A single individual in these circles frequently operates three to five smartphones simultaneously, laid out on a dashboard or held in a fanned deck like playing cards. When the store opens (a moment known as “the drop”), the difference in processing power becomes visible. While the legitimate shopper’s screen refreshes on a standard pattern, the syndicate’s device farm, powered by illicit scripts like “ShopperX” or “Thunder,” seizes the high-value batches instantly. The $100, $150, and $200 orders vanish from the server queue in milliseconds, allocated directly to the ring’s devices before they ever render on a standard user’s screen.

The Hierarchy: Brokers, Mules, and the Rent-Seeking Elite

These groups operate less like casual gig workers and more like organized racketeering enterprises. The structure divides into two distinct tiers: the “Broker” (or Admin) and the “Runner” (or Mule). The Broker holds the keys to the kingdom. This individual possesses the technical know-how to acquire and manage the bot software, and more importantly, they control the supply of verified shopper accounts.

The Runner is the labor force. Reports from major metropolitan areas, including Los Angeles, Chicago, and Miami, indicate that Runners are frequently individuals unable to pass Instacart’s background checks due to criminal records or immigration status. The Broker “rents” an active, verified account to the Runner. This rental agreement is predatory. The Runner must pay a weekly fee, frequently ranging from $150 to $300, or surrender a percentage of their weekly earnings to the Broker. In exchange, the Broker provides the phone, the pre-loaded bot software, and the stolen or synthesized identity required to log in.

This arrangement creates a perverse incentive structure. The Runner, burdened by the high cost of “renting” their job, is forced to shop aggressively and continuously to break even. The Broker, meanwhile, assumes zero physical labor risk while collecting passive income from five, ten, or twenty Runners operating in different zones. The economic extraction is total: Instacart pays the account, the Broker takes a cut, and the Runner survives on the remainder, all while the legitimate shopper is pushed out of the market entirely.

The Supply Chain of Stolen Identities

A serious component of the bot ring’s longevity is the continuous churn of shopper accounts. Instacart’s fraud detection algorithms eventually flag and ban accounts exhibiting bot-like behavior. To maintain operations, the ring requires a steady stream of fresh identities. This demand fuels a secondary black market for “aged” and “verified” accounts.

Investigations reveal that these accounts are frequently sourced through targeted phishing campaigns. Scammers posing as Instacart support agents contact legitimate shoppers, claiming an issue with their current order or earnings. They trick the shopper into revealing their six-digit login code. Once the scammers access the account, they change the phone number and email, hijacking the identity. This “zombie” account, with its history of good ratings and passed background checks, is then sold or leased to a bot ring.

Another method involves the exploitation of “synthetic identities”: combinations of real Social Security numbers (frequently belonging to children or deceased persons) and fake names. Brokers use these synthetic IDs to pass the initial automated background screenings. Once the account is active, it enters the rental pool. The sophistication of this supply chain means that when Instacart bans one account, the Runner simply swaps the device for a backup phone with a new profile, returning to the store floor within minutes.

Territorial Control and Intimidation

The dominance of these groups extends beyond digital manipulation into physical territory. In high-demand zones, specific rings claim ownership of specific stores. Independent shoppers who manage to secure a good batch or who attempt to document the ring’s activity frequently report hostility. Tactics range from verbal warnings (“This is our store”) to more aggressive intimidation, such as blocking cars in parking spots or tampering with vehicles.

In one documented instance, a group identified as the “Florencia” ring in Los Angeles allegedly distributed flyers and enforced a “code of conduct” for shoppers in their territory, acting as a localized regulatory body outside of Instacart’s control. When legitimate shoppers violate these unwritten rules, the rings have been known to use their bot networks to launch “denial of service” attacks against the individual. By flooding the store’s zone with phantom orders or using multiple accounts to accept and then cancel orders, they manipulate the algorithm to penalize the outsider’s account, driving them away from the location.

Bypassing the Biometric Firewall

Instacart has attempted to curb the practice of account sharing and renting through “Persona” verification, a random prompt requiring the shopper to take a selfie to verify their identity matches the account profile. For a legitimate user, this is a minor annoyance. For a bot ring using rented accounts, it should be a fatal system failure. It is not.

The rings have developed low-tech and high-tech workarounds for the “selfie check.” In the low-tech version, the Broker remains on-site or nearby. When a Runner’s phone triggers a verification request, they physically hand the device to the Broker (or the person whose face matches the account), who takes the photo and hands it back. In more advanced operations, rings use high-resolution photographs or even life-like mannequins to fool the facial recognition software.

In addition, “jailbroken” iPhones and rooted Android devices allow the bot software to inject a pre-saved image into the camera feed. When the app requests a live selfie, the software intercepts the camera’s data stream and feeds it a static photo of the account holder. This digital sleight-of-hand renders the biometric security measure useless, allowing a Runner to operate under the identity of a woman named “Sarah” while being a male of an entirely different demographic. The customer, expecting Sarah, is met by a stranger, creating a serious safety gap that Instacart’s automated support systems frequently fail to address.

The Economic for the Honest Shopper

The proliferation of these rings fundamentally breaks the “meritocracy” Instacart claims to uphold. The platform’s gamification, where higher ratings and faster speeds supposedly yield better batches, cannot compete with a script that reads the API directly. A human reaction time of 1.5 seconds is glacial compared to a bot’s 200-millisecond capture rate.

Legitimate shoppers are left to fight over the “scraps”: orders with low tips, high mileage, or heavy items that the bot rings have filtered out. The “Unicorn” batches, those rare orders paying $100 or more, are mathematically inaccessible to the honest user in a bot-dominated zone. This forces legitimate shoppers to work longer hours for less pay, increasing vehicle wear and tear and driving down the hourly wage well below minimum standards.

The store environment also suffers. Warehouse staff report that bot ring members, pressured by the need to maximize volume to pay their rental fees, are frequently rude, aggressive, and careless. They block aisles with multiple carts, rush through checkout lines with confusing multi-order transactions, and disregard store policies. Yet, because they move high volumes of product, the stores and Instacart itself are slow to ban them. The revenue flows, and as long as the orders are delivered, the “black box” nature of the fulfillment is tolerated.

This organized domination of the warehouse sector is not a glitch; it is a parasitic economy grafted onto the host platform. It extracts value from the customers (who pay for premium service but get unvetted strangers), the stores (who deal with the chaos), and the honest shoppers (who are pushed into poverty). The bot ring is the inevitable result of an algorithmic management system that prioritizes speed and completion over human verification and fairness.

Collateral Damage: Economic Devastation for Legitimate 'Diamond' Shoppers

The promise of Instacart’s “Diamond Cart” tier (priority access to high-value orders for top-performing shoppers) has crumbled under the weight of automated theft. While honest workers wait in parking lots for the “priority” drop, illicit software rings intercept the most lucrative batches before they ever reach a human screen. These “batch grabbers,” known by names like ShopperX and Lucky, use overlay scripts to refresh the dispatch server milliseconds faster than the official app allows. A 2024 Business Insider investigation verified that users of these bots could filter for orders paying over $50 and auto-accept them instantly, leaving legitimate Diamond shoppers with only the low-pay scraps that the algorithms rejected.

This digital black market has created a pay-to-play economy that imposes a tax on survival. Reports from 2024 and 2025 indicate that bot subscriptions cost between $200 and $550 for initial access, with developers charging recurring fees as high as $150 for every $1,200 earned. Honest shoppers who refuse to violate the Terms of Service face a severe structural disadvantage. They compete with GPS-spoofing software that falsely positions a cheater’s device inside the store, overriding the physical proximity requirements that Instacart claims to enforce. Consequently, a veteran shopper with a perfect rating frequently earns less per hour than a rule-breaker using a script.
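To put the recurring fee in perspective, the arithmetic can be sketched in a few lines. The $150 and $1,200 figures come from the reports cited above; the function names, and the assumption that the fee is charged once per completed $1,200 block of earnings, are illustrative only.

```python
def effective_fee_rate(fee_per_block: float, block_size: float) -> float:
    """Return the recurring fee as a fraction of gross earnings."""
    return fee_per_block / block_size


def net_after_fees(gross: float, fee_per_block: float, block_size: float) -> float:
    """Gross earnings minus fees.

    Assumption (not confirmed by the source): the fee is charged once
    for each fully completed earnings block.
    """
    completed_blocks = int(gross // block_size)
    return gross - completed_blocks * fee_per_block


rate = effective_fee_rate(150, 1200)
print(f"Effective rate: {rate:.1%}")
print(f"Net on $2,400 gross: ${net_after_fees(2400, 150, 1200):,.2f}")
```

Under these assumptions the fee works out to 12.5% of gross earnings, on top of the initial access cost.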

The financial impact on rule-abiding workers is severe and measurable. In major metropolitan markets, shoppers report that the “Priority Access” label frequently appears on their screens only as a “Batch Unavailable” error message, signaling that a bot claimed the order in the sub-second latency window. This phenomenon renders the Diamond status meaningless, as the tiered reward system cannot function when the allocation is compromised. Veteran shoppers, who once relied on $200 daily earnings to support families, find their income slashed by 30% to 50% as they are forced to accept high-mileage, low-tip orders that the automated filters discard.

Instacart’s countermeasures have proven largely ineffective against this adaptive threat. Although the company frequently updates its API and sues bot developers, such as the legal action taken against “Shopper Gopher” and similar entities, the underground market simply shifts to private WhatsApp and Telegram channels to distribute updated scripts. The company’s “whack-a-mole” security strategy leaves the core vulnerability exposed: the dispatch system prioritizes speed over verification. Until Instacart implements biometric identity checks at the moment of batch acceptance rather than just at login, the economic devastation for its most loyal workforce will continue unchecked.

Predator Becomes Prey: Phishing Scams Masquerading as Bot Software

The underground economy surrounding Instacart batch allocation has mutated into a predatory ecosystem where the cheaters frequently become the victims. While legitimate shoppers battle against automated scripts, a secondary class of cybercriminals has emerged to exploit the desperation of those seeking an illicit advantage. These operators do not sell functional software; they peddle “vaporware” and malware designed to harvest credentials, drain earnings, and hijack accounts. The promise of a “unicorn” batch grabber frequently serves as the bait in a digital trap, turning the hunter into the prey.

The “Vaporware” Trap

The primary vector for these scams involves the sale of non-existent bot software on encrypted messaging platforms like Telegram and Signal. Sellers market these tools with hyperbolic claims, promising “God mode” capabilities that can bypass Instacart’s latest security patches or see batches before they reach the central server. Names like “InstaGod,” “BatchKing,” and counterfeits of known scripts like “ShopperX” circulate in private groups. The sales pitch is seductive: for a one-time crypto payment or a weekly subscription, the buyer receives an APK file guaranteed to secure high-value orders.

In reality, the file is frequently useless code or, worse, a malicious payload. Security researchers have identified numerous instances where the “bot” application simply displays a static screen mimicking the Instacart interface while a background process logs the user’s keystrokes. When the shopper attempts to “log in” to the bot using their Instacart credentials, the username and password are transmitted directly to the scammer’s command-and-control server. The software never connects to Instacart’s API; its sole function is credential harvesting.

The “Support” Impersonation Ring

A more sophisticated variation involves a social engineering attack that dovetails with the bot market. Scammers monitor shopper forums and Facebook groups where users complain about slow days or inquire about batch grabbers. The scammer contacts the target, posing as a developer or a “connected” insider who can “hardwire” their account to a priority server for a fee. Once the victim agrees, the scammer claims they need to “link” the account to the new server.

This “linking” process is a ruse to bypass Two-Factor Authentication (2FA). The scammer triggers a login attempt on the victim’s account from a remote device. Instacart’s system automatically sends a six-digit SMS verification code to the legitimate owner. The scammer then demands this code, claiming it is required to “activate the bot” or “verify the server upgrade.” Shoppers, conditioned to trust the technical jargon of these supposed experts, hand over the code. The scammer immediately gains full control of the account, changes the password, and locks the original owner out.

Malware Analysis: The “Trojan Horse” APKs

Technical analysis of seized “batch grabber” APKs reveals the depth of the deception. Legitimate-looking interfaces are constructed using standard Android UI libraries to lull the victim into a false sense of security. Yet the permissions requested by these apps far exceed what a batch script would require. They frequently demand access to SMS reading, overlay permissions, and accessibility services.

| Permission Type | Stated Purpose (The Lie) | Actual Function (The Threat) |
|---|---|---|
| READ_SMS | “Auto-verify login codes” | Intercepts 2FA codes from banks and Instacart without user knowledge. |
| ACCESSIBILITY_SERVICE | “Auto-tap batches faster” | Grants full control to click buttons, read screen text, and steal data from other apps. |
| SYSTEM_ALERT_WINDOW | “Overlay batch alerts” | Draws fake login screens over legitimate banking apps to steal credentials. |
| INTERNET | “Connect to Instacart servers” | Exfiltrates stolen data to the attacker’s remote server. |

Once installed, the malware does not just target Instacart credentials. By abusing Accessibility Services, the malicious code can monitor activity across the entire device. If the shopper opens a banking app or a crypto wallet, the malware can capture login details or overlay a fake login window to intercept the password. The “bot” becomes a full-spectrum spyware tool, with the Instacart cheat serving as the delivery method.
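From a defensive standpoint, the permission pattern in the table above is something a cautious user or researcher can screen for before installing any sideloaded APK. The following is a minimal sketch, not a real scanner: it assumes the permission strings have already been extracted from the app’s manifest by some other tool, and the “two risky permissions plus network access” threshold is an illustrative choice, not an established standard.

```python
# Android permission strings matching the categories in the table.
# The explanations paraphrase the threats described in the text.
RISKY_PERMISSIONS = {
    "android.permission.READ_SMS":
        "can read incoming SMS, including 2FA codes",
    "android.permission.SYSTEM_ALERT_WINDOW":
        "can draw overlays on top of other apps",
    "android.permission.BIND_ACCESSIBILITY_SERVICE":
        "indicates an accessibility service that can read and act on the screen",
}
NETWORK_PERMISSION = "android.permission.INTERNET"


def audit_permissions(requested):
    """Flag risky permissions in a list of requested permission strings.

    `requested` is a hypothetical input: strings already pulled from
    an APK's manifest by a separate tool.
    """
    requested = set(requested)
    findings = {p: why for p, why in RISKY_PERMISSIONS.items() if p in requested}
    # Illustrative threshold: several sensitive permissions combined with
    # network access means captured data could leave the device.
    high_risk = len(findings) >= 2 and NETWORK_PERMISSION in requested
    return {"findings": findings, "high_risk": high_risk}


report = audit_permissions([
    "android.permission.READ_SMS",
    "android.permission.SYSTEM_ALERT_WINDOW",
    "android.permission.INTERNET",
])
for permission, reason in report["findings"].items():
    print(f"{permission}: {reason}")
print("High risk:", report["high_risk"])
```

A check like this cannot prove an app is malicious, but a “batch grabber” that requests SMS, overlay, and accessibility access together matches the profile described here.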

The “Instant Cashout” Drain

The immediate financial target of these scams is the “Instant Cashout” feature. Instacart allows shoppers to withdraw their accrued earnings instantly to a debit card. Once a scammer compromises an account, either through a fake bot login or a 2FA phishing scheme, their first move is to check the current balance. If funds are available, they add their own debit card to the profile. While Instacart has security measures that sometimes delay cashouts after a card change, scammers use stolen identities or “mule” accounts to bypass these checks or simply wait out the cooling-off period if they have fully locked the victim out.

Reports indicate that shoppers have lost hundreds of dollars in accrued earnings in minutes. Because the breach frequently involves the shopper voluntarily handing over credentials or 2FA codes (technically a violation of terms), they face an uphill battle in recovering funds. The platform frequently views the unauthorized access as a result of the shopper’s negligence or illicit activity, complicating the reimbursement process.

Double Jeopardy: Deactivation

The final blow for victims of these scams is frequently permanent deactivation from the Instacart platform. The company’s fraud detection algorithms flag the sudden device switch, the suspicious login location, and the rapid change of banking information. To the automated system, the account looks like it has been sold or compromised. Moreover, if the shopper admits to support agents that they were trying to use a third-party bot when the “hack” occurred, they provide a confession to violating the Terms of Service.

This creates a paradox where victims are afraid to report the crime. Reporting the scam requires admitting to the attempt to cheat, which carries the penalty of immediate termination. Scammers rely on this silence. They know their victims are engaged in illicit activity and are therefore less likely to seek help from the platform or law enforcement. The “black market” for Instacart bots thus functions as a perfect closed loop of exploitation, where the desire to game the system exposes the cheater to risks far greater than a missed order.
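The three signals named above (a device switch, an unusual login location, and a change of banking details) can be combined in a simple rule to show why a takeover looks the same as a sold account to an automated system. This is a hypothetical sketch only: the class, field names, and threshold are invented for illustration and do not describe Instacart’s actual detection logic.

```python
from dataclasses import dataclass


@dataclass
class AccountEvent:
    """Hypothetical summary of recent activity on one shopper account."""
    new_device: bool
    unusual_login_location: bool
    payout_method_changed: bool


def flagged_signals(event: AccountEvent) -> list[str]:
    """Return the names of the signals that fired for this event."""
    signals = []
    if event.new_device:
        signals.append("new_device")
    if event.unusual_login_location:
        signals.append("unusual_login_location")
    if event.payout_method_changed:
        signals.append("payout_method_changed")
    return signals


def should_hold_for_review(event: AccountEvent, threshold: int = 2) -> bool:
    """Illustrative rule: hold the account when enough signals coincide."""
    return len(flagged_signals(event)) >= threshold


takeover = AccountEvent(new_device=True, unusual_login_location=True,
                        payout_method_changed=True)
print(flagged_signals(takeover))
print(should_hold_for_review(takeover))
```

Nothing in such a rule distinguishes a victim from a seller, which is why the phished shopper and the account trafficker can end up with the same deactivation.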

Identity Leasing: The Gray Market of Rented and Stolen Accounts

The digital storefront of Instacart is guarded not by physical turnstiles but by a fragile layer of identity verification checks that has spawned a sprawling, illicit economy. While software bots provide the speed to seize orders, the leased identity provides the essential camouflage required to operate. This is the “gray market” of gig work, a subterranean industry where shopper accounts are treated as tradable commodities, rented by the week, sold to the highest bidder, or synthesized from the stolen data of unsuspecting citizens. For the illicit bot rings dominating warehouse zones, these accounts are ammunition; without a steady supply of “fresh” identities, their algorithmic advantages are useless against platform bans.

The Supply Chain: Lessors and Unwitting Victims

The inventory for this marketplace originates from two distinct sources, creating a bifurcated supply chain that feeds the same end-user demand. The first stream consists of consensual lessors. These are individuals who pass the platform’s background checks, frequently students, retirees, or former shoppers, but have no intention of performing the labor. Instead, they monetize their clean records. In private Telegram channels and invite-only Facebook groups, these account holders offer their credentials for a weekly “rent,” ranging from $150 to $300, depending on the market’s volatility and the account’s “Diamond” status.

The second, more sinister stream flows from identity theft. Sophisticated brokers harvest personally identifiable information (PII) from data breaches, purchasing Social Security numbers and driver’s license details in bulk on the dark web. They use this data to manufacture accounts in the names of people who have never downloaded the Instacart app. In 2024, investigators uncovered “synthetic identity” farms where real SSNs were paired with fictitious names or addresses, creating “Frankenstein” profiles that bypass automated screenings. These accounts are sold outright for flat fees, frequently between $500 and $800, marketed as “fully verified and ready to earn.” The victim of this fraud frequently remains oblivious until the IRS sends a tax bill for thousands of dollars in unreported income earned by a stranger three states away.

The Economics of Account Rental

For the undocumented worker or the shopper banned for previous infractions, these rented accounts are not a luxury but a prerequisite for employment. The pricing models mirror predatory lending. A “broker,” frequently a ringleader managing a fleet of bots, may lease an account to a worker for a 30% cut of their weekly earnings, or for a fixed fee that must be paid before the worker sees a dime. This arrangement creates a system of digital indentured servitude. The worker takes all the physical risk, performing the labor and bearing the vehicle costs, while the broker extracts a premium simply for holding the digital keys.

| Product Type | Description | Street Price (USD) | Risk Level |
|---|---|---|---|
| Burner Account | New account, low trust score, likely to be banned quickly. | $150-$250 (one-time) | High |
| Diamond Lease | High-tier account with priority access to batches. Rented weekly. | $200-$350 / week | Medium |
| “Fullz” Profile | Stolen identity account with full victim info (SSN, DL) included. | $600-$800 (one-time) | High |
| Managed Fleet Slot | Access to a bot-controlled account; worker only does delivery. | 30-40% of gross earnings | Low (for worker) |

The transaction is frequently settled in cryptocurrency to avoid paper trails, though peer-to-peer payment apps are also common. In organized rings, the “landlord” retains full control of the earnings, cashing out the weekly pay to their own bank account and then distributing the worker’s share in cash, minus the rental fee. This control method ensures the worker cannot abscond with the account or the money, cementing the power imbalance in favor of the ringleader.

Defeating the “Selfie Check”

Instacart attempts to counter this through periodic “selfie verification” prompts, requiring the shopper to take a real-time photo to match against the profile on file. Yet the gray market has developed industrial-grade workarounds. For consensual rentals, the bypass is low-tech: the worker simply texts the account owner when a prompt appears. The owner logs in from their own device, takes the selfie, and clears the checkpoint, allowing the worker to resume shopping minutes later. This “remote unlock” service is frequently included in the weekly rental fee.

For stolen or synthetic accounts, the methods are more technical. Hackers use “camera injection” software, tools originally designed for streaming or testing, to feed a pre-recorded video or a high-resolution static image into the app’s camera stream. The software tricks the application into believing it is receiving a live feed from the phone’s hardware. Advanced versions of this software can even animate a static photo, adding blinks or slight head movements to satisfy “liveness” detection algorithms. These tools are sold alongside the accounts, a bundled software suite designed to defeat the platform’s biometric sentinels.

The “Mule” System and Warehouse Dominance

In the parking lots of high-volume warehouse stores, this identity leasing system physically manifests as the “mule” structure. A single ringleader may control ten or twenty active accounts on multiple devices, or even on a single device using “app cloner” tools. This leader sits in a vehicle, using bot software to secure orders across all leased identities simultaneously. Once an order is secured, they dispatch a “runner” or “mule”, frequently an undocumented worker renting one of the identities, to enter the store and perform the shop. The runner works for the ringleader, not Instacart.

This structure insulates the ringleader from bans. If a runner is caught or an account is deactivated due to a failed verification check, it is a minor operational hiccup. The leader simply discards the burned identity, purchases a new one from a broker, and the runner continues working under a new name by the next shift. The legitimate “Diamond” shopper, playing by the rules with a single account, cannot compete with a hydra-headed operation that can absorb deactivations and maintain its presence indefinitely.

The Tax Time Bomb

The most delayed and devastating consequence of this market is the fiscal wreckage left in its wake. Because the platform reports income to the IRS under the identity on file, the “lessor,” whether a willing participant or an identity theft victim, receives a 1099-NEC form for income they never physically earned. For the student who rented their account for $200 a week, the arrival of a tax bill on $60,000 in earnings creates a financial emergency that far outweighs their illicit profits. They face a choice: admit to tax fraud and terms-of-service violations, or pay taxes on money they never touched.

For victims of identity theft, the situation is even more dire. They must navigate a bureaucratic labyrinth to prove they did not work for Instacart, frequently requiring police reports and affidavits to clear their tax records. Meanwhile, the actual earner, the renter, operates in a tax-free vacuum, their labor invisible to the state. This disconnect enables a massive, decentralized form of tax evasion, with the liability shifted entirely onto the shoulders of the identity holder. The platform’s reliance on digital verification, rather than physical oversight, allows this transfer of liability to persist, turning the gig economy into a haven for untraceable income and financial ruin.
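The lessor’s exposure in the student example can be roughed out with simple arithmetic. The sketch below uses the $200 weekly rent and $60,000 reported earnings from the text, plus the standard US self-employment tax formula (15.3% applied to 92.35% of net earnings). It assumes a full 52 weeks of rental and no deductible expenses, and it ignores federal and state income tax, so it is a floor on the liability rather than a full estimate.

```python
SE_TAX_RATE = 0.153           # Social Security (12.4%) + Medicare (2.9%)
SE_TAXABLE_FRACTION = 0.9235  # share of net earnings subject to SE tax


def rental_income(weekly_rent: float, weeks: int = 52) -> float:
    """Total rent collected by the account lessor over the period."""
    return weekly_rent * weeks


def self_employment_tax(net_earnings: float) -> float:
    """Approximate US self-employment tax on reported 1099 earnings.

    Assumes earnings fall below the Social Security wage base and that
    no expenses are deducted (both are simplifying assumptions).
    """
    return round(net_earnings * SE_TAXABLE_FRACTION * SE_TAX_RATE, 2)


rent = rental_income(200)
se_tax = self_employment_tax(60_000)
print(f"Rent collected: ${rent:,.2f}")
print(f"Self-employment tax alone: ${se_tax:,.2f}")
print(f"Rent left after SE tax: ${rent - se_tax:,.2f}")
```

Even before income tax, self-employment tax alone would consume most of a year of rent under these assumptions.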

Instacart's 'Selfie' Defense: Biometric Verification and Its Workarounds

The 'Bug Bounty' Mirage: Shopper Skepticism of Corporate Security

The Security Theater of HackerOne

Instacart maintains a public profile of cybersecurity rigor through its partnership with HackerOne. This platform allows “white hat” researchers to report software vulnerabilities in exchange for monetary rewards. By January 2026, the program listed average payouts for serious vulnerabilities between five thousand and fifteen thousand dollars. The company boasts of paying out over half a million dollars in bounties since 2020. To the outside observer or a corporate shareholder, this suggests a fortified digital environment where code flaws are identified and patched with high efficiency. Yet this program represents a fundamental disconnect between technical security and platform integrity. The scope of these bug bounties focuses strictly on traditional information security flaws like data breaches or cross-site scripting. The “business logic” flaws that allow bot rings to manipulate batch allocation are frequently classified as out-of-scope or “intended functionality abuse.”

This exclusion creates a perverse market. A security researcher who discovers a way to steal user emails might earn five hundred dollars. A black market developer who discovers a way to bypass the batch acceptance timer can sell that exploit to thousands of shoppers for a monthly subscription fee. The financial incentive for ethical disclosure is microscopic compared to the illicit revenue potential of weaponizing the flaw. Consequently, the most talented reverse-engineers ignore the bug bounty program entirely. They instead build the very “ShopperX” and “Thunder” bots that plague the platform. Instacart’s security team polices the windows while the front door stands wide open for algorithmic manipulation.

The ‘Trust and Safety’ Black Box

Legitimate shoppers who witness fraud in real time face a bureaucratic dead end known as “Trust and Safety.” The in-app reporting tools are designed with high friction and low transparency. A shopper might observe a competitor using three phones to secure orders at a Costco loading dock. They report the specific license plate and account behavior through the official app. In nearly all documented cases, the whistleblower receives a templated email response acknowledging receipt but promising no specific action due to “privacy policies.” The reported bot user is frequently seen working the same store the very next day. This absence of feedback convinces honest workers that the reporting system is a placebo designed to vent frustration rather than solve the problem.

The operational reality suggests that Instacart prioritizes order fulfillment over shopper verification. A bot-controlled account fulfills orders just as quickly as a legitimate one. In fact, the bot user is often faster because they are cheating. Banning a high-volume account creates a temporary void in the delivery network. Therefore, the “Trust and Safety” algorithms appear tuned to ignore all but the most egregious anomalies. Shoppers on forums like Reddit and local Facebook groups have aggregated thousands of screenshots showing support agents admitting they cannot override the system or even see the evidence provided. The support staff are frequently third-party contractors with no direct line to the engineering teams capable of patching the exploits.

The August 2020 Update and the Whac-A-Mole pattern

Corporate communications from Instacart frequently highlight specific updates as definitive solutions to the bot emergency. A prime example occurred in August 2020 when the company announced a ban on “device switching.” The patch was intended to stop shoppers from accepting a batch on one phone and completing it on another. This practice was a hallmark of organized rings where a “dispatcher” grabbed orders and distributed them to “runners.” For a brief period the update disrupted lower-level syndicates. Yet within weeks the bot developers released patches of their own. They introduced “cloned” application packages that spoofed the device ID. The software tricked the Instacart server into believing the runner’s phone was the same device that accepted the order.

This pattern of update and bypass characterizes the entire security relationship. Instacart releases a patch. Bot developers analyze the code changes. A workaround is sold to subscribers. The legitimate shopper is the only casualty in this arms race. They must navigate increasingly glitchy apps and intrusive identity checks that fail to stop the cheaters. The “Shopper ID” selfie verification feature is another example. While marketed as a biometric firewall it is frequently defeated by high-resolution photos or deepfake software. Honest shoppers report being deactivated because of bad lighting during a selfie check while bot accounts using static images continue to operate without interruption.

Vigilantism and the Erosion of Trust

The failure of corporate security has pushed shopper communities toward vigilantism. In markets like Florida and California, veteran shoppers have organized their own surveillance networks. They record video evidence of bot rings operating in parking lots. They document license plates and cross-reference them with delivery times. Some have even confronted bot users directly, which has led to physical altercations. When this evidence is presented to Instacart, it is almost universally rejected. The company cites legal liability and the inability to verify third-party media. This rejection reinforces the belief that Instacart is complicit in the fraud. The sentiment is that the company profits from the efficiency of bots and only feigns concern to maintain a public image of fairness.

The FTC Settlement as a Credibility Indicator

Skepticism regarding Instacart’s commitment to honesty is grounded in legal precedent. In 2025, the Federal Trade Commission finalized a settlement requiring Instacart to pay sixty million dollars for deceptive business practices. The allegations included hiding service fees and misleading consumers about delivery costs. Shoppers view this corporate behavior as evidence of a culture that prioritizes profit over ethics. If the company is willing to mislead the customers paying the bills, it is unlikely to protect the independent contractors doing the work. The “Bug Bounty” program and “Trust and Safety” initiatives are viewed through this lens of distrust. They are seen not as genuine security efforts but as liability shields designed to deflect regulatory scrutiny while the bot economy thrives.

The economic devastation for legitimate shoppers is not a technical glitch. It is the direct result of a security strategy that values the appearance of safety over the eradication of fraud. Until the financial incentives change or the legal penalties for negligence exceed the cost of enforcement, the “mirage” of security will remain.

Legal Gray Zones: Why 'Cheating' the Gig Economy Rarely Leads to Prosecution
