Manufactured Cancel Culture: The Weaponisation of Social Media And The Monetisation Of Outrage Until 2026

Why it matters:

- Social media has transformed from a tool for accountability to a weapon of coordinated destruction and cancel culture.

- The rise of manufactured cancellation through Coordinated Inauthentic Behavior (CIB) has led to severe economic and psychological consequences for individuals targeted.

Social media has mutated from a public square into a theater of asymmetric warfare. What began in 2017 as a necessary mechanism for accountability, exemplified by the #MeToo movement, has devolved by 2026 into a weaponized system of coordinated destruction and manufactured cancel culture. The data is clear: the objective is no longer justice but the systematic removal of opposition through economic and reputational annihilation. This report examines the mechanics, the metrics, and the human cost of this transformation.

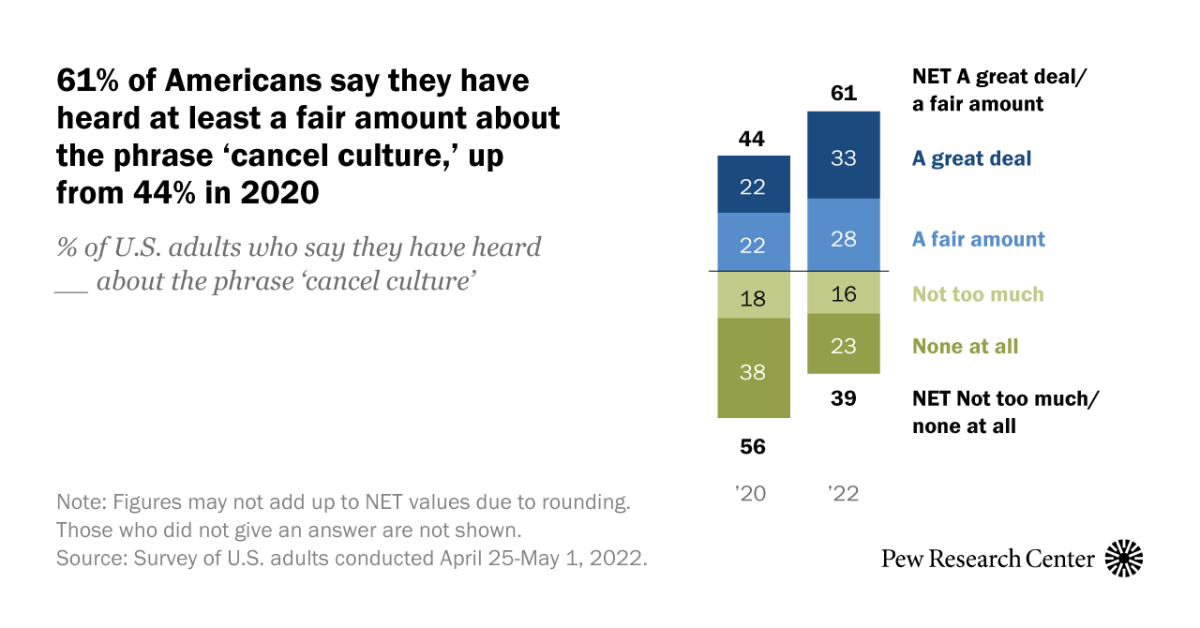

The distinction between organic outrage and manufactured cancellation has evolved further. In 2020, 44% of Americans were familiar with the term “cancel culture.” By 2022, that figure rose to 61%. This rise correlates with a measurable shift in online behavior. A 2022 study by the Foundation for Individual Rights and Expression (FIRE) found that 40% of Americans self-censor due to fear of professional or social backlash, a figure that dwarfs the 13% recorded during the McCarthy era of the 1950s. The fear is rational; the consequences are quantifiable.

Modern cancellation operates through “Coordinated Inauthentic Behavior” (CIB). This term, popularized by security analysts at Meta, describes networks of fake accounts and automated bots designed to manipulate public debate. In 2021 alone, Meta removed 52 distinct networks engaged in CIB. These networks do not express opinion; they execute “brigading”, a tactic where thousands of accounts simultaneously report a target for policy violations to trigger algorithmic suspension. This is not public discourse. It is a denial-of-service attack on human reputation.

The Economics of Destruction

The financial impact of these campaigns is severe and lasting. Data from 2020 to 2024 shows that social media boycotts can depress a company’s sales by up to 8% and market value by an average of 2.7% within weeks of a campaign’s inception. For individuals, the penalty is steeper. Canceled executives lose an average of $1.2 million in annual earnings, while professionals across industries face an unemployment duration 18 months longer than their non-canceled peers. A 2022 Bureau of Labor Statistics analysis indicated that 22% of individuals targeted by such campaigns remained unemployed for more than six months.

The psychological toll mirrors the economic damage. Surveys of those targeted reveal that 48% report subsequent anxiety disorders, and the risk of suicidal ideation increases by 2.5 times. These are not side effects; they are the intended results of a system designed to inflict maximum damage with minimum accountability.

| Metric | Accountability Era (2015 – 2018) | Asymmetric Warfare Era (2021 – 2025) |
|---|---|---|
| Primary Goal | Expose hidden abuse / Legal action | Economic de-platforming / Social isolation |
| Tactic | Viral hashtags / Personal testimony | Coordinated reporting / Doxxing / Bot swarms |
| Targeting | Public figures with power | Private citizens / Mid-level employees |
| Duration | News cycle dependent | Permanent digital footprint |
| Collateral Damage | Minimal | High (Family members, employers, peers) |

The weaponization of these platforms relies on the exploitation of algorithmic triggers. Platforms prioritize high-velocity engagement, regardless of its authenticity. A 2023 analysis of Twitter (X) data showed that negative sentiment spreads four times faster than positive interactions. Bad actors exploit this velocity gap. They launch pre-planned waves of outrage that overwhelm a target before facts can be established. By the time the truth surfaces, the reputational damage is irreversible.

This report examines the specific nodes of this system. It analyzes the role of anonymous tribunals, the profitability of outrage for content creators, and the failure of Silicon Valley to police its own architecture. The era of accidental cancellation is over. We are in the age of targeted digital elimination.

The Anatomy of a Pile On: Velocity and Volume Metrics

The effectiveness of a social media cancellation depends on two variables: velocity and volume. Unlike organic viral content, which frequently builds over days, a weaponized pile-on achieves peak saturation within hours. Data from a 2021 study by Harvard Business School and DePaul University indicates that negative content spreads significantly faster than positive content. The researchers analyzed over 140,000 tweets and found that negativity was 15% more prevalent and engaged more users than positive posts. This speed is not accidental; it is a structural feature of platforms designed to maximize engagement through emotional arousal.

A 2017 study published in the Proceedings of the National Academy of Sciences (PNAS) quantified this acceleration. The data showed a 20% increase in retweets for every “moral-emotional” word added to a post. Words like “shame,” “duty,” and “evil” act as accelerants, transforming a static complaint into a kinetic weapon. When these markers appear, the content breaks out of its initial network and penetrates new clusters rapidly. The objective is to overwhelm the target’s ability to respond before the facts can be verified.
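One way to read a per-word effect is as a multiplier that compounds. The sketch below is illustrative only. It assumes each moral-emotional word multiplies expected retweets by 1.2, matching the reported ~20% increase, and that each word acts independently of the others. The function name and the baseline of 10 retweets are hypothetical and do not come from the study.

```python
def expected_retweets(baseline: float, moral_emotional_words: int,
                      per_word_lift: float = 0.20) -> float:
    """Expected retweets under an assumed multiplicative per-word effect.

    Assumption (illustrative): each moral-emotional word scales the expected
    retweet count by (1 + per_word_lift), independently of the other words.
    """
    if moral_emotional_words < 0:
        raise ValueError("word count cannot be negative")
    return baseline * (1 + per_word_lift) ** moral_emotional_words


if __name__ == "__main__":
    baseline = 10.0  # hypothetical retweets for a post with no such words
    for words in range(5):
        print(words, round(expected_retweets(baseline, words), 2))
```

Under this assumption, four such words roughly double the baseline, because 1.2^4 ≈ 2.07.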

Metric Comparison: Organic Viral Event vs. Targeted Pile-On

The following table contrasts the engagement patterns of a standard viral video against a coordinated cancellation event, based on 2022-2024 platform analysis data.

| Metric | Organic Viral Event | Targeted Pile-On |
|---|---|---|
| Time to Trend | 12-24 Hours | 2-4 Hours |
| Sentiment Ratio (Neg:Pos) | 1:4 | 8:1 |
| Avg. Retweets per Negative Post | 34 | 81 |
| Bot Involvement | 5-10% | 20-37% |

Volume is the second component of this mechanism. A single user expressing outrage is easily ignored; ten thousand users create a crisis. In 2025, the volume of these attacks is frequently inflated by non-human actors. Security reports from late 2025 reveal that 51% of all internet traffic is automated, with 37% classified as malicious bots. In the context of a pile-on, these bots serve to artificially inflate the perceived consensus. A 2025 analysis of social media chatter surrounding global controversies found that approximately 20% of the participants were bots, specifically programmed to amplify divisive hashtags and negative sentiment.
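Bot participation distorts perceived consensus in a way that simple arithmetic can show. The sketch below is illustrative. It assumes that every bot posts negatively and that human participants are negative at some organic rate. The 20% bot share echoes the figure cited above. The function name, the 1,000-participant conversation, and the 50% organic rate are hypothetical.

```python
def apparent_negative_share(total_participants: int, bot_fraction: float,
                            organic_negative_rate: float) -> float:
    """Share of a conversation's participants who appear negative.

    Illustrative assumptions: every bot posts negatively, and the human
    participants are negative at organic_negative_rate.
    """
    if total_participants <= 0:
        raise ValueError("need at least one participant")
    if not (0.0 <= bot_fraction <= 1.0 and 0.0 <= organic_negative_rate <= 1.0):
        raise ValueError("fractions must lie in [0, 1]")
    bots = total_participants * bot_fraction
    humans = total_participants - bots
    negative = bots + humans * organic_negative_rate
    return negative / total_participants


# Hypothetical conversation: 1,000 participants, 20% bots, humans split evenly.
print(apparent_negative_share(1000, 0.20, 0.50))
```

Take an evenly split human audience and add a 20% bot share. The conversation then appears 60% negative rather than 50%, even though no human changed their mind.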

The human cost of this amplification is severe. A 2022 analysis of Twitter sentiment found that negative tweets in a cancellation context are retweeted on average 81 times, compared to just 34 times for neutral or positive counter-arguments. This 2.4x multiplier ensures that defense is mathematically impossible during the initial surge. The algorithm favors the aggressor because aggression generates more data points (likes, retweets, and replies) than defense.
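The 2.4x figure is simply the ratio of the two averages reported above. The hypothetical helper below makes that derivation explicit. It uses only the 81 and 34 retweet averages from the cited analysis.

```python
def amplification_multiplier(negative_avg: float, counter_avg: float) -> float:
    """Ratio of average retweets for negative posts to those for counter-arguments."""
    if counter_avg <= 0:
        raise ValueError("counter-argument average must be positive")
    return negative_avg / counter_avg


# Averages from the 2022 Twitter sentiment analysis cited above.
ratio = amplification_multiplier(81, 34)
print(f"{ratio:.2f}x")  # prints 2.38x, which rounds to the cited 2.4x
```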

Visualizing the Velocity of Outrage

The chart illustrates the “Time to Peak” for three distinct types of social media events. The data, aggregated from 2023-2024 platform metrics, demonstrates how cancellation campaigns reach maximum visibility in a fraction of the time required for organic news or positive viral trends.

Figure: Time to Reach 1 Million Impressions (Hours). Source: Aggregated Platform Metrics 2023-2024.

This compression of time removes the possibility of due process. When a reputation is destroyed in five hours, the truth, which frequently takes days or weeks to establish, becomes irrelevant. The damage is inflicted not by the validity of the claim but by the velocity of the accusation.

Algorithmic Amplification: How Platforms Monetize the Outrage Cycle

The architecture of modern social media does not reflect public sentiment. It engineers it. Platforms have constructed a feedback loop where indignation is not just a byproduct of interaction but the primary currency of the digital economy. Between 2017 and 2021, major tech conglomerates optimized their ranking systems to prioritize high-arousal emotions. The objective was clear. Anger keeps users scrolling. Contentment sends them away.

Internal documents released by whistleblower Frances Haugen in 2021 revealed a specific mechanical decision at Facebook that accelerated this toxicity. In 2017, the platform’s algorithm began weighting the “Angry” emoji reaction five times heavier than a standard “Like.” A post that elicited rage was treated as five times more valuable than a post that elicited agreement. This weighting system explicitly pushed divisive, toxic, and inflammatory content to the top of News Feeds globally. Data scientists at the company confirmed in 2019 that posts sparking “Angry” reactions were disproportionately likely to contain misinformation and low-quality news. The platform subsidized the distribution of hatred to maximize time-on-site metrics.
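A reaction-weighted score shows why a 5x weight on “Angry” favors rage. The sketch below is a deliberately simplified, hypothetical ranking function, not Facebook’s actual system, which combined many more signals. Only the relationship Angry = 5 × Like comes from the reporting above. The dictionary, the function name, and the example posts are illustrative.

```python
# Illustrative weights: only the "Angry = 5 x Like" relationship comes from the
# reporting above. The scoring function is a hypothetical simplification; real
# feed ranking combines many more signals.
REACTION_WEIGHTS = {"like": 1, "angry": 5}


def engagement_score(reactions: dict[str, int]) -> int:
    """Weighted sum of reaction counts; reaction types without a weight count as 0."""
    return sum(REACTION_WEIGHTS.get(kind, 0) * count
               for kind, count in reactions.items())


calm_post = {"like": 100}
angry_post = {"like": 10, "angry": 30}
print(engagement_score(calm_post))   # 100
print(engagement_score(angry_post))  # 10 * 1 + 30 * 5 = 160
```

Under these weights, a post with 40 total reactions outranks one with 100, because most of its reactions are angry.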

Twitter (now X) functions as a similar accelerant for moral outrage. A 2021 study by Yale University researchers William Brady and Molly Crockett analyzed 12.7 million tweets and found that users are conditioned by positive reinforcement to express more outrage over time. Earlier research led by Brady had shown that for every moral-emotional word added to a tweet, the retweet rate increased by approximately 20%. Users who received high engagement on angry posts quickly learned to replicate that tone. They were not just expressing their feelings. They were responding to a behavioral training program designed to maximize ad inventory.

The Velocity of Falsehood

The monetization of outrage relies on the speed at which information travels. Truth is slow because it requires verification. Outrage is instant. A seminal 2018 study by the Massachusetts Institute of Technology (MIT) analyzed 126,000 stories shared by 3 million people on Twitter. The findings were definitive. False news spreads six times faster than the truth. It reaches far deeper into social networks and does so with greater breadth. Falsehoods were 70% more likely to be retweeted than accurate news stories. This happens because false content is engineered for novelty and emotional impact. The algorithm favors this velocity. It sells ad impressions against the heat of the argument rather than the resolution of the facts.

| Metric | Standard Content | Outrage/Polarizing Content | Algorithmic Multiplier |
|---|---|---|---|
| Facebook Reaction Weight (2017) | 1 Point (Like) | 5 Points (Angry) | 500% |
| Twitter Retweet Probability | Baseline | +20% per moral-emotional word | Variable (High) |
| Viral Spread Speed (MIT 2018) | Baseline Speed | 6x Faster | 600% |
| Instagram Likes (Negative Emotion) | Baseline | +52% to +70% | ~160% |

Rage Farming as a Business Model

This algorithmic bias has birthed a content strategy known as “rage farming.” Creators and media outlets intentionally manufacture conflict to trigger the algorithms. YouTube’s recommendation engine played a central role in this development. By shifting its primary metric from “view count” to “watch time” in 2012, YouTube incentivized videos that kept users engaged for long periods. Radicalization loops proved highly effective for this purpose. A user watching a mild political critique would be recommended increasingly extreme content because the data showed that extremism retains attention. The platform’s “borderline content” policy frequently failed to curb this. The algorithm identified that outrage prevents users from closing the app.

The financial incentives are direct. Advertisers pay for attention. Outrage generates attention at a lower cost per impression than nuanced debate. A 2023 study on “The Drama Economy” highlighted that creators who pivoted to conflict-based commentary saw revenue increases correlated with negative engagement metrics. The platforms take a cut of this revenue. They are not neutral arbiters. They are partners in the production of hostility. The “cancel culture” phenomenon is the user-facing manifestation of this backend logic. It is the inevitable result of a system where the destruction of a reputation is the most profitable event of the day.

The Botnet Factor: Automated Astroturfing in Public Opinion

The presumption that online outrage represents a democratic consensus is a statistical lie. By 2024, the internet had crossed a critical threshold: 49.6% of all web traffic was non-human, according to the 2024 Imperva Bad Bot Report. In the context of cancel culture, this metric confirms that nearly half of the “voices” in a given controversy may be synthetic. This phenomenon, known as Coordinated Inauthentic Behavior (CIB), has industrialized public shaming, transforming it from a social corrective into a purchasable service.

Automated astroturfing, the use of software to mimic grassroots support or opposition, has become the primary engine for modern reputational warfare. Unlike the organic viral moments of 2017, current cancellation campaigns are frequently launched by “cyborg” networks: a small core of human operators amplifying their reach through thousands of automated accounts. These botnets do not echo sentiment; they manufacture it, manipulating platform algorithms to force specific narratives into the “Trending” sidebar where they gain legitimate visibility.

The Metrics of Manufactured Hate

Forensic analysis of high-profile cancellation events between 2020 and 2024 reveals a consistent pattern of inorganic amplification. In legitimate social discourse, negative sentiment is distributed across a wide user base. In weaponized campaigns, a disproportionate volume of vitriol originates from a statistically impossible number of hyper-active nodes.

The following data illustrates the “Amplification Ratio” found in three major investigations, distinguishing between organic user behavior and coordinated attacks.

| Target Subject | Investigation Source | Key Metric of Inauthenticity | Impact Scope |
|---|---|---|---|
| Meghan Markle | Bot Sentinel (2021) | 83 accounts generated 70% of all hate content. | Potential reach of 17 million users. |
| Amber Heard | Bot Sentinel (2022) | 24.4% of anti-Heard accounts created in prior 7 months. | 627 accounts created solely for the trial. |
| “Snyder Cut” | WarnerMedia (2022) | 13% of total traffic was fake (vs. 3-5% norm). | Sustained pressure on studio executives. |
| 2024 Election | Imperva / Cyabra | 37% of “bad bot” traffic targeted political discourse. | Global destabilization of voter sentiment. |

The Meghan Markle case serves as the definitive blueprint for this mechanic. The finding that fewer than 100 accounts could generate 70% of the hostility demonstrates the efficiency of CIB. These accounts were not simply posting in isolation; they were networked to retweet and reply to one another, tricking the Twitter (X) algorithm into perceiving a high-velocity “debate” that did not exist.
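The pattern described here can be captured by a concentration ratio: the fraction of a campaign’s content produced by its most active accounts. The sketch below is a generic illustration, not Bot Sentinel’s methodology. The function name and the per-account post counts are invented.

```python
def top_k_share(posts_per_account: list[int], k: int) -> float:
    """Fraction of all posts produced by the k most active accounts."""
    if k < 0:
        raise ValueError("k must be non-negative")
    total = sum(posts_per_account)
    if total == 0:
        return 0.0
    top = sorted(posts_per_account, reverse=True)[:k]
    return sum(top) / total


# Invented data: 5 hyper-active accounts and 95 occasional posters.
counts = [140] * 5 + [3] * 95
print(round(top_k_share(counts, 5), 3))  # about 0.71
```

In this invented example, 5% of accounts produce about 71% of the posts. A share that high from so few nodes is the kind of signal the investigations above describe.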

The Economics of Destruction

The barrier to entry for launching a bot-driven cancellation is negligible. In 2024, the “black market” for social media manipulation remains strong and accessible. A NATO StratCom COE report highlighted that even with sanctions and platform crackdowns, manipulation services are “surprisingly cheap” and easy to procure. A campaign to boost a hashtag’s visibility can cost as little as a few hundred dollars, while a “fullz” identity package (used to verify fake accounts) trades on the dark web for between $20 and $100.

For state actors or corporate saboteurs, the return on investment is high. A 2018 investigation found that 1 million fake followers could be purchased for approximately $2,000. Adjusted for 2025 inflation and increased verification blocks, the cost remains a fraction of a traditional PR crisis management budget. This “Disinformation-as-a-Service” economy allows bad actors to outsource the destruction of a rival’s reputation to third-party click farms, frequently located in regions with low labor costs, ensuring plausible deniability.

The AI Evolution: From Scripts to Personas

The deployment of Generative AI in 2025 has rendered traditional bot detection methods obsolete. Early bots (2015-2020) were identifiable by repetitive syntax and high-volume posting schedules. The new generation of AI-driven personas, as identified in the 2026 Cyabra report for NATO, engages in “in-conversation” influence. These agents can parse context, employ sarcasm, and maintain long-term arguments with human users, making them indistinguishable from radicalized individuals.

This technological leap means that the “mob” demanding accountability is increasingly a mirage. The Federal Trade Commission attempted to curb this with a 2024 rule imposing fines of up to $51,744 per violation for fake reviews and testimonials. Yet enforcement remains reactive. The damage (loss of employment, deplatforming, and social ostracization) is frequently complete before the inauthenticity of the campaign is exposed.

The Economics of Outrage: Measuring the Blast Radius

The transition of cancel culture from reputational shaming to economic warfare is best measured not in retweets but in market capitalization. By 2025, the financial mechanism of social media backlash has matured into a predictable, quantifiable risk factor for publicly traded companies. When a viral controversy metastasizes into a coordinated boycott, the impact on shareholder value is immediate and frequently disproportionate to the triggering event. We are no longer witnessing mere public relations crises; these are solvency tests.

The mechanics of these losses follow a specific trajectory: a viral trigger event, followed by algorithmic amplification, resulting in an immediate sell-off by skittish investors and a sustained revenue dip from consumer boycotts. Unlike traditional market corrections, these drops are sentiment-driven, bypassing fundamental analysis to strike directly at a company’s liquidity and brand equity. The data from 2022 to 2025 reveals that the “blast radius” of a modern cancellation event can vaporize billions of dollars in value within weeks.

Case Study: The $27 Billion Bud Light Collapse

The most prominent example of sustained economic destruction occurred in 2023 with Anheuser-Busch InBev. Following a partnership with influencer Dylan Mulvaney in April 2023, the company faced a coordinated social media backlash that translated into hard economic losses. By the end of May 2023, Anheuser-Busch’s market value had plummeted from $134.55 billion to approximately $107.44 billion, a loss of $27 billion. Unlike other scandals where stock prices rebound once the news cycle shifts, this event fundamentally altered consumer behavior; sales of Bud Light dropped nearly 30% year-over-year in May 2023, proving that digital outrage can permanently sever brand loyalty.

Target and the Speed of Devaluation

Target Corporation experienced a similar, though more rapid, devaluation in May 2023. Amidst controversy over its Pride Month merchandise, the retailer saw its market capitalization contract by approximately $9 billion in a single week. By mid-June, that figure had ballooned to a loss of $15.7 billion, with the stock price falling from over $160 to roughly $126. This 20% decline illustrates the volatility introduced by social media mobilization; a retail giant with decades of stability was destabilized in under a month, not by supply chain failures but by a digitally organized capital strike.
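The percentages in these two case studies can be checked directly from the cited figures. The `drawdown` helper below is hypothetical. It computes the absolute and percentage decline between a starting and an ending value, using the market values and share prices quoted above as inputs.

```python
def drawdown(before: float, after: float) -> tuple[float, float]:
    """Return (absolute loss, percentage loss) between two values."""
    if before <= 0:
        raise ValueError("starting value must be positive")
    loss = before - after
    return loss, 100 * loss / before


# Anheuser-Busch InBev market value in $ billions (figures cited above).
ab_loss, ab_pct = drawdown(134.55, 107.44)
print(f"AB InBev: ${ab_loss:.2f}B lost ({ab_pct:.2f}%)")

# Target share price in $ (figures cited above).
_, target_pct = drawdown(160.0, 126.0)
print(f"Target: {target_pct:.2f}% decline")
```

Both results land near the roughly 20% declines cited in the text: about 20.1% for Anheuser-Busch InBev’s market value and about 21.3% for Target’s share price.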

Comparative Analysis of Market Cap Destruction

The following table details the financial impact of major social media-driven controversies between 2022 and 2024. It isolates the “scandal window”, the period of peak volatility immediately following the viral event.

| Company | Event Trigger | Timeframe | Est. Market Cap Loss | Stock Impact |
|---|---|---|---|---|
| Anheuser-Busch InBev | Mulvaney Partnership Backlash | Apr 2023 - May 2023 | $27.0 Billion | ~20% Drop |
| Target | Pride Merch Controversy | May 2023 - June 2023 | $15.7 Billion | Dropped to 52-week low |
| Disney | Florida Legislation Dispute | Mar 2022 - Apr 2022 | $46.6 Billion | ~33% Drop (Year-over-Year) |
| Starbucks | Union/Geopolitical Boycotts | Nov 2023 - Dec 2023 | $11.0 Billion | 9% Drop |
| Planet Fitness | Locker Room Policy Viral Post | March 2024 (5 Days) | $400 Million | 11% Drop (Temporary) |

The Cost of Dissociation: Adidas and Yeezy

Economic damage also occurs when corporations attempt to preemptively “cancel” a toxic asset. Adidas faced a unique financial crisis in late 2022 after terminating its partnership with Kanye West (Ye). While the moral imperative was clear to executives, the balance sheet impact was severe. In Q4 2022 alone, Adidas reported a net loss of $540 million. The company projected an operating loss of over $700 million for 2023 tied specifically to the inability to sell Yeezy inventory. This demonstrates the double-edged sword of modern brand partnerships: the same viral fame that drives billions in revenue can become a toxic liability that requires a nine-figure write-down to excise.

Volatility vs. Value Destruction

It is critical to distinguish between temporary volatility and permanent value destruction. Planet Fitness, for instance, lost $400 million in valuation over five days in March 2024 following a viral post about its locker room policies. Yet, unlike Anheuser-Busch, its market value rebounded to $5.5 billion within weeks. While social media can inflict immediate pain, the long-term economic damage depends on the “stickiness” of the boycott and the substitutability of the product. Beer and retail goods have high substitutability, making them vulnerable to sustained boycotts; gym memberships have higher friction costs for cancellation, insulating them from total collapse.

The Crisis Management Industrial Complex: Fees and Strategies

The commodification of public outrage has birthed a lucrative sub-sector of the public relations industry: the Crisis Management Industrial Complex. By 2025, what was once a niche service for oil spills and corporate fraud has evolved into a ubiquitous need for influencers, executives, and brands navigating the weaponized digital landscape. This industry does not trade in truth; it trades in suppression, deflection, and the algorithmic reconstruction of reputation. The result is that the cost of survival is steep, with firms capitalizing on the terror of “cancellation” to command premium fees for services that pledge to scrub digital sins.

The financial barrier to entry for reputation defense creates a two-tiered justice system. While the wealthy can afford “comprehensive defense networks,” ordinary individuals are left exposed to the full force of algorithmic destruction. Verified fee structures from 2024 and 2025 reveal that high-level crisis management has become an elite luxury service.

The Price of Salvation: 2025 Crisis Management Fee Structure

| Service Tier | Monthly Retainer (USD) | Hourly Rate (USD) | Key Deliverables |
|---|---|---|---|
| Active Crisis Response | $10,000-$50,000+ | $600-$800+ | 24/7 “War Room” access, rapid rebuttal, forensic media monitoring. |
| Reputation Repair | $5,000-$25,000 | $350-$600 | SEO suppression, content flooding, Wikipedia editing, review scrubbing. |
| Personal Defense | $1,000-$7,000 | $250-$450 | Social media lockdown, comment moderation, “bot” mitigation. |
| Pre-Crisis Monitoring | $3,000-$10,000 | N/A (Flat Fee) | Sentiment analysis, “risk audit” of past digital footprint. |

The primary strategy employed by these firms is Algorithmic Suppression, often euphemistically termed “Search Engine Optimization for Reputation.” The objective is not to remove negative content, which frequently triggers the “Streisand Effect,” but to bury it. Firms generate a deluge of innocuous, high-ranking content (charity announcements, generic articles, award press releases) to push negative search results off the first page of Google. A 2024 analysis by Move Digital highlights that this strategy requires months of pre-emptive content generation to be effective when a crisis strikes.
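The arithmetic of burying a result is simple under idealized assumptions. The sketch below is hypothetical and makes three such assumptions: ranks are 1-indexed, a results page holds ten items, and every newly published piece of content outranks the negative result while nothing else changes. Real search ranking is far less predictable. The function name is illustrative.

```python
def items_needed_to_bury(current_rank: int, page_size: int = 10) -> int:
    """Number of new higher-ranking results needed to push an item off page one.

    Illustrative assumptions: ranks are 1-indexed, every new item outranks
    the target, and nothing else in the results changes.
    """
    if current_rank < 1 or page_size < 1:
        raise ValueError("rank and page size must be positive")
    return max(0, page_size - current_rank + 1)


print(items_needed_to_bury(3))   # 8: the item moves from rank 3 to rank 11
print(items_needed_to_bury(12))  # 0: already off page one
```

Even in this idealized model, a highly ranked negative result requires a substantial volume of new content to displace. That is consistent with the months of pre-emptive content generation described above.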

Another core product is the “Apology Architecture.” Spontaneous contrition is considered a liability. Instead, apologies are A/B tested, legally vetted, and scripted to hit specific emotional beats without admitting legal liability. Agencies advise clients to avoid “defensive” language, a tactic Agility PR describes as essential to bypass the public’s “insincerity radar.” This manufactured vulnerability is designed to de-escalate mob sentiment while preserving commercial viability. The 2025 “Preempt” policy by Samphire Risk even offers a 24/7 hotline to script responses before a client posts, outsourcing the human conscience to a committee of risk analysts.

Perhaps the most dystopian development is the rise of “Cancel Culture Insurance.” As reported by Fast Company in January 2025, insurers offer policies specifically designed to cover the costs of reputational damage. These policies, such as the “Preempt” product, provide up to 60 days of crisis communications support and cover the expenses of “reputation recovery.” Premiums are calculated based on a “deep dive” into the client’s digital history; a more controversial digital footprint results in a higher “risk score” and steeper costs. This financialization of speech puts a price tag on controversial opinions, turning freedom of expression into an insurable risk that only the solvent can afford to take.

Psychological Drivers: Deindividuation and the Dopamine Loop

The acceleration of cancel culture is not a sociological shift; it is a neurological phenomenon engineered by platform architecture. While public discourse frequently frames cancellation as a moral reckoning, the underlying mechanics reveal a system that exploits human psychology to maximize engagement. The engine driving this weaponized outrage is a feedback loop of dopamine, deindividuation, and status-seeking behavior that transforms individual users into a destructive swarm.

At the core of this is the “outrage loop,” a mechanism rigorously quantified by researchers at Yale University. In a 2021 study published in Science Advances, researchers William Brady and Molly Crockett analyzed 12.7 million tweets from 7,331 users to understand how social media shapes moral expression. Their findings were definitive: platforms do not just reflect outrage; they train users to produce it. The study demonstrated that users who received positive feedback (likes and retweets) for expressing moral indignation were significantly more likely to express it again in the future. This is reinforcement learning in its purest form.
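The reinforcement logic can be illustrated with a generic delta-rule (Rescorla-Wagner style) value update. This is not the study’s model. It is a minimal sketch showing that a behavior which is consistently rewarded more ends up with a higher learned value, which makes it more likely to be repeated. The learning rate, the like counts, and the function names are all hypothetical.

```python
def update_value(value: float, reward: float, learning_rate: float = 0.1) -> float:
    """One delta-rule update: move the learned value toward the observed reward."""
    return value + learning_rate * (reward - value)


def simulate(rewards: list[float], learning_rate: float = 0.1) -> float:
    """Learned value of a behavior after a sequence of rewards, starting from 0."""
    value = 0.0
    for reward in rewards:
        value = update_value(value, reward, learning_rate)
    return value


# Hypothetical feedback: outraged posts earn 10 likes each, neutral posts 3.
outrage_value = simulate([10.0] * 20)
neutral_value = simulate([3.0] * 20)
print(outrage_value > neutral_value)  # True
```

With a constant reward, the learned value approaches that reward geometrically. After 20 posts in this sketch, outrage is valued at roughly 8.8 versus roughly 2.6 for neutral posting.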

The Yale data revealed a disturbing trend regarding political polarization. The reinforcement effect was most pronounced among users in politically moderate networks, suggesting that the dopamine hit of social validation actively radicalizes centrist users, pushing them toward more extreme expressions of outrage to maintain engagement. The platform’s business model, which optimizes for time-on-site, monetizes this radicalization by turning moral condemnation into a high-score game.

| Mechanism | Psychological Definition | Role in Cancel Culture |
|---|---|---|
| Reinforcement Learning | Behavior modification via rewards (Dopamine). | Users learn that harsh condemnation yields higher engagement (likes/shares) than nuance. |
| Deindividuation | Loss of self-awareness in groups. | Anonymity reduces personal accountability, allowing users to inflict harm they would avoid offline. |
| Moral Grandstanding | Using moral talk to seek status. | Public condemnation becomes a tool for “Dominance Strivings” rather than actual justice. |
| Schadenfreude | Pleasure derived from another’s misfortune. | The brain’s reward centers light up when “punishing” a perceived violator, fueling the mob. |

This neurological conditioning is compounded by deindividuation, a psychological state in which immersion in a group erodes personal identity and accountability. In the context of a social media mob, the screen acts as a barrier that dissolves empathy. A user is no longer an individual attacking another human; they are a nameless node in a “justice” machine. This diffusion of responsibility allows for disproportionate cruelty. When thousands of users participate in a cancellation, the individual psychological cost of destroying a reputation approaches zero, while the social reward remains high.

The motivation for this participation is frequently less about the target’s transgression and more about the accuser’s status. A 2019 study published in PLOS ONE by researchers including W. Keith Campbell identified “moral grandstanding” as a primary driver of online conflict. The study developed a “Moral Grandstanding Motivations Scale” and found that participants engaged in public moral discourse not to effect change but to boost their own social standing. The researchers distinguished between “Prestige Strivings” (seeking respect) and “Dominance Strivings” (seeking to subdue opponents). The data showed that those high in dominance motivation were more likely to engage in aggressive, conflict-seeking behavior, using cancellation as a bludgeon to assert superiority over others.

Moreover, the pleasure of cancellation is biologically entrenched. Research from Emory University in 2018 reviewed the mechanics of schadenfreude, the experience of joy at another’s downfall. The review highlighted that “justice-based schadenfreude” allows individuals to rationalize their pleasure in another’s suffering by framing it as a moral victory. Neuroscientific studies, such as those by Singer et al., have shown that punishing perceived social defectors activates the brain’s reward circuitry (the striatum) similarly to monetary gain. Social media platforms have industrialized this neural response, providing a steady stream of targets and a mechanism for punishment.

The convergence of these factors creates a self-sustaining ecosystem. The platform provides the variable reward schedule of a slot machine; the mob provides the safety of anonymity; and the human brain supplies the dopamine rush of moral superiority. In this environment, the guilt or innocence of the target becomes secondary to the psychological payout of the attack itself.

Case Study Analysis: The Disproportionate Impact on Private Citizens

The initial targets of social media cancellation were public figures with crisis management teams and substantial financial buffers. By 2020, the aperture of this weaponized accountability widened to encompass private citizens (truck drivers, students, and data analysts) who possess none of these defenses.

For these individuals, a viral moment does not result in a temporary PR crisis; it frequently leads to permanent economic and reputational ruin. The mechanics of these cancellations reveal a system where due process is nonexistent, and the punishment is frequently total.

The “Ok” Sign Incident: Emmanuel Cafferty

In June 2020, Emmanuel Cafferty, a utility worker for San Diego Gas & Electric (SDG&E), was driving his company truck when he cracked his knuckles while hanging his hand out the window. A stranger photographed the gesture, interpreted it as a “white power” symbol, and posted it to Twitter. The image went viral immediately. Within hours, SDG&E fired Cafferty, citing a violation of their public image policy. The accuser later deleted the tweet and admitted he may have misinterpreted the gesture, yet Cafferty remained unemployed. This case exemplifies the “guilt by viral association” mechanic: a multi-billion dollar corporation acted to protect its brand from online mob outrage by sacrificing a blue-collar employee before any factual investigation could occur.

Weaponized Archaeology: The Mimi Groves Case

The cancellation of Mimi Groves in 2020 introduced the tactic of reputational archaeology. Groves, a high school senior and cheer captain, had her admission to the University of Tennessee revoked after a three-second video surfaced. The clip, recorded when she was 15, showed her using a racial slur in a private context. The video was not released immediately; it was held by a classmate for three years and released strategically after she had been accepted to her dream university. The University of Tennessee, facing pressure from social media, forced her withdrawal within days. This incident established a precedent in which minors could be held retroactively accountable for private conduct years later, with educational institutions acting as enforcers of online judgment.

Professional Competence vs. Ideological Enforcement: David Shor

The firing of data analyst David Shor in 2020 demonstrated that even citing verified academic research could be grounds for termination. Shor, an employee at Civis Analytics, tweeted a summary of a study by Princeton professor Omar Wasow, which found that non-violent protests were historically more effective than violent ones at influencing voter behavior. Even though the tweet was a factual representation of peer-reviewed research, Shor was accused of insensitivity during the George Floyd protests and was subsequently fired. This case signaled a shift in corporate culture in which adherence to the immediate emotional temperature of social media superseded professional competence and factual accuracy.

The Quantifiable Chilling Effect

The impact of these high-profile cancellations of private citizens has created a measurable culture of fear. A 2020 survey by the Cato Institute found that 62% of Americans felt the political climate prevented them from expressing their true beliefs, a significant increase from 58% in 2017. This self-censorship is not limited to political extremists; it permeates the center of American life. By 2024, the Foundation for Individual Rights and Expression (FIRE) reported that over 40% of university faculty admitted to self-censoring in the classroom, and 56% did so online, fearing professional retaliation for discussing controversial topics.

| Metric | Public Figure / Celebrity | Private Citizen |
|---|---|---|
| Financial Resources | High (Savings, royalties, investments) | Low (Living paycheck to paycheck) |
| Defense Mechanism | PR firms, legal teams, crisis managers | None (Self-defense via personal social media) |
| Employment Impact | Temporary loss of contracts/sponsors | Immediate termination, loss of career references |
| Recovery Timeline | 12 to 24 months (Rebranding tours) | Indefinite (Google search results remain permanent) |
| Due Process | Contractual arbitration, settlements | At-will employment termination (No recourse) |

The comparison reveals a structural vulnerability for private citizens. Unlike celebrities, who can “go dark” and return, a private citizen’s digital footprint becomes their primary background check. A single viral article or tweet creates a permanent “scarlet letter” in search engine results, rendering them unemployable in their chosen fields. The disproportionate nature of this punishment, in which a truck driver loses his livelihood over a misunderstood hand gesture, reveals a system that has decoupled consequence from intent.

Corporate Sabotage: Weaponizing Hashtags Against Competitors

The romantic notion of social media as a “public square” has been dismantled by a grim reality: it is a theater for corporate asymmetric warfare. While early cancel culture was driven by organic consumer outrage, the period between 2020 and 2025 saw the industrialization of reputational destruction. Corporations no longer wait for a competitor to stumble; they pay “dark PR” firms to push them off the ledge. This is not marketing; it is sabotage, executed with military precision using bot farms, rented influencers, and weaponized hashtags.

The mechanics of this warfare were exposed in March 2022, when The Washington Post revealed that Meta (Facebook’s parent company) had hired the consulting firm Targeted Victory to orchestrate a nationwide smear campaign against TikTok. The objective was to brand TikTok as a danger to American children. Targeted Victory operatives did not just pitch stories; they manufactured a crisis. They amplified reports of a “Slap a Teacher” challenge, a trend that actually originated on Facebook, and successfully rebranded it as a “TikTok trend” in local news outlets across the United States. The campaign’s goal was explicit: to deflect regulatory heat from Meta by portraying its competitor as a foreign threat.

This tactic, deflection through fabrication, has become standard operating procedure in high-stakes industries. The battle between fast-fashion giants Shein and Temu illustrates the escalation from covert whispering campaigns to open legal warfare involving allegations of mafia-style intimidation. In December 2023, Temu filed a lawsuit alleging that Shein had engaged in “false imprisonment” of merchants to prevent them from working with competitors. By August 2024, Shein retaliated, suing Temu and alleging that the rival platform had paid social media influencers to spread false rumors and create imposter accounts to mislead consumers. These were not disputes over fabric quality; they were attempts to decapitate the opposition’s digital presence.

The Economics of Artificial Outrage

The infrastructure required to execute these attacks is readily available and increasingly sophisticated. A December 2025 report by Shapo found that approximately 30% of all online reviews are fake, a statistic that renders consumer trust mathematically irrational. The scale of this deception is industrial: in 2023 alone, Google blocked 170 million policy-violating reviews, a 45% increase from the previous year. This surge is not accidental; it is the result of AI-driven automation that allows bad actors to generate thousands of coherent, negative reviews in minutes.

The financial incentives for this sabotage are massive. According to January 2024 data from Alethea, a disinformation detection firm, over 25% of a company’s market value is directly attributable to its reputation. A single coordinated hashtag campaign can wipe out billions in shareholder value before the truth can be established. In May 2025, Zepto CEO Aadit Palicha publicly alleged that a rival executive had orchestrated a smear campaign specifically designed to derail the company’s IPO prospects. The campaign used fabricated narratives about financial misconduct, seeded through anonymous accounts and amplified by bot networks to trigger investor panic.

| Target Entity | Year | Attack Vector | Estimated Impact / Outcome |
|---|---|---|---|
| TikTok | 2022 | “Targeted Victory” Op-Ed Campaign | Widespread negative press linking app to “dangerous trends” (e.g., Slap a Teacher). |
| Shein / Temu | 2023-2024 | Influencer Bribery & Imposter Accounts | Multiple federal lawsuits; allegations of “mafia-style” merchant intimidation. |
| Zepto | 2025 | Pre-IPO Disinformation | CEO forced to publicly address fabricated financial misconduct rumors. |
| Global E-commerce | 2025 | AI-Generated Fake Reviews | 30% of reviews identified as fake; erosion of consumer trust metrics. |

The weaponization of social media has also evolved to exploit the volatility of financial markets. The “short and distort” strategy, where short-sellers use bot armies to amplify negative reports, has moved from the fringes to the mainstream. A 2026 retrospective study by the University of Kansas on the “meme stock” phenomenon of 2021 confirmed that social media discussions, frequently manipulated by coordinated groups, could decouple stock prices from fundamental value. By 2025, this mechanic had been refined: attackers no longer need a Reddit army; they simply rent a botnet to simulate one.

The line between genuine consumer grievance and paid corporate hit jobs has dissolved. When a hashtag trends against a major corporation today, the possibility that it is a funded operation can no longer be dismissed. The “public square” is a battlefield where the combatants are hidden, the ammunition is algorithmic, and the casualties are truth and trust.

State-Sponsored Disinformation: Cancel Culture as a Geopolitical Tool

The weaponization of social media has evolved from a tool for organic accountability into a sophisticated instrument of statecraft. Intelligence reports from 2015 to 2025 confirm that foreign adversaries, specifically Russia, China, and Iran, have systematically co-opted “cancel culture” mechanics to destabilize Western democracies. These state actors no longer merely spread disinformation; they identify existing social fractures and amplify reputational attacks to paralyze discourse. A 2025 Microsoft Digital Defense Report indicates that nation-state cyber influence operations have shifted focus from general propaganda to targeted “character assassination” campaigns, using AI-driven bot networks to simulate mass outrage.

Russia’s Internet Research Agency (IRA) pioneered this doctrine, treating online “cancellation” as a military objective. Between 2016 and 2022, the IRA did not just support specific candidates; it operated “brigading” units that amplified hashtags on both sides of contentious cultural issues to ensure maximum reputational damage to all involved. In 2022, this evolved into “Cyber Front Z,” a coordinated effort operating out of St. Petersburg that hijacked comment sections of Western media outlets to demand the deplatforming of anti-war journalists. The objective is not justice but attrition: exhausting the target’s resources and silencing dissent through the sheer volume of manufactured hostility.

China has deployed a similar but distinct strategy through its “Spamouflage” network. Unlike Russia’s chaotic amplification, Chinese operations frequently focus on enforcing silence. A 2023 Graphika report identified a network of over 15,000 inauthentic accounts dedicated to harassing entities critical of the CCP. These networks use “wolf warrior” diplomacy tactics adapted for social media, swarming targets with accusations of racism or imperialism to trigger domestic Western cancellation mechanisms. The “227 Incident,” while originating in domestic fandom culture, demonstrated the efficacy of mass reporting to deplatform adversaries, a tactic exported to silence transnational critics.

Iran’s cyber operations have specialized in the “doxxing” and direct intimidation component of cancel culture. In the lead-up to the 2020 and 2024 U.S. elections, Iranian actors created “cyber personas”, fake accounts posing as radical political activists, to threaten voters and journalists. These operations frequently bypass public debate entirely, moving directly to publishing private information (doxxing) to incite physical danger and professional termination. The 2025 “Shadows of Tehran” analysis by the Atlantic Council revealed that Tehran’s digital influence efforts prioritize “hyper-local” cancellation, targeting school board members and local journalists to fracture community cohesion.

| State Actor | Operation / Unit | Primary Tactic | Verified Target / Impact |
|---|---|---|---|
| Russia | Internet Research Agency (IRA) / Cyber Front Z | Simulated Outrage: Amplifying #Cancel[Name] hashtags on both sides of a debate to maximize polarization. | Targeted U.S. journalists and activists (2016-2024); flooded Western media comments to demand firing of anti-war reporters (2022). |
| China | Spamouflage / “50 Cent Army” | Swarm Reporting: Mass reporting of accounts for “hate speech” violations to trigger algorithmic bans. | “JUICYJAM” campaign targeting Thai pro-democracy activists (2020-2024); harassment of Canadian MPs and researchers (2023). |
| Iran | Cyber Personas (e.g., “Proud Boys” spoof) | Weaponized Doxxing: Releasing private data to incite physical threats and professional termination. | Targeted U.S. voters with threatening emails (2020); sustained harassment of female journalists covering the Middle East (2024). |
| North Korea | Kimsuky / Lazarus Group | Reputational Blackmail: Hacking private communications to use for silence or financial gain. | Targeted security researchers and media organizations; threatened leaks of embarrassing data to force self-censorship (2023). |

The integration of Artificial Intelligence into these operations has escalated the threat level. In 2024, researchers detected the large-scale use of AI-generated “reaction videos” designed to validate cancellation campaigns. These deepfake testimonials provide the “social proof” necessary to convince real users that a grievance is legitimate, turning unwitting citizens into foot soldiers for foreign intelligence services. The distinction between a genuine grassroots boycott and a state-engineered takedown has blurred, leaving institutions vulnerable to manipulation by adversaries who view “cancel culture” not as a social corrective but as a geopolitical weapon.

The Role of Anonymous Accounts: Accountability vs Harassment

Anonymity on the social web functions as both a shield for the vulnerable and a weapon for the malicious. In the early stages of the cancel culture phenomenon (circa 2017), anonymous accounts were frequently the only safe harbor for whistleblowers exposing sexual misconduct or workplace abuse. By 2025, however, this had shifted. The architecture of anonymity has been industrialized, with bad actors using “sock puppet” accounts to manufacture consensus and execute coordinated harassment campaigns that mimic organic outrage.

The distinction between protective anonymity and predatory concealment is defined by intent and scale. A 2025 report by the Cyberbullying Research Center indicates that 58.2% of young people have experienced lifetime victimization online, a sharp rise from 33.6% in 2016. Much of this acceleration is driven by untraceable accounts. When accountability is decoupled from identity, the social cost of aggression drops to zero. This creates a permission structure for “dogpiling,” in which thousands of anonymous users can target a single individual without fear of reputational blowback.

The Shield: Anonymity as a Watchdog

For marginalized voices, anonymity remains a critical tool for challenging power. The fashion industry watchdog Diet Prada serves as a primary case study. Founded in 2014 and operating anonymously until its founders were unmasked, the account used its cover to call out plagiarism and racism in a notoriously insular industry. In 2021, the account faced a defamation lawsuit from Dolce & Gabbana seeking over €4 million in damages. Here, anonymity was not a tool for harassment but a necessary defense against litigious corporate giants. Without the initial veil of secrecy, the account likely would have been silenced before it could build the cultural capital necessary to demand widespread change.

The Weapon: Industrialized Inauthenticity

Conversely, the weaponization of anonymity has birthed the “sock puppet” economy. A sock puppet is a false identity created to deceive, frequently used to evade bans or to artificially inflate the perceived support for a cancellation campaign. Academic estimates from 2020 to 2024 suggest that between 5% and 15% of active accounts on major microblogging platforms are inauthentic. These accounts are not passive bots; they are active combatants.

In 2021 alone, Meta reported the removal of 52 distinct networks engaging in “Coordinated Inauthentic Behavior” (CIB). These networks originated in over 30 countries and were designed to manipulate public debate. In the context of cancel culture, CIB networks are deployed to “seed” negative narratives. A single operator can control dozens of accounts, replying to their own posts to create the illusion of a growing mob. This technique, known as “astroturfing,” tricks real users into joining a campaign they believe is a genuine grassroots movement.

| Feature | Anonymous Whistleblower | Coordinated Sock Puppet (Astroturfing) |
|---|---|---|
| Primary Objective | Expose specific wrongdoing or abuse of power. | Inflict reputational damage or silence opposition. |
| Target Selection | Institutions, public figures, or corporations. | Private individuals, dissenters, or rivals. |
| Network Behavior | Single source or small group sharing evidence. | Hundreds of accounts repeating identical phrases. |
| Reaction to Evidence | Engages with counter-evidence or updates claims. | Ignores facts; escalates volume and vitriol. |
| Lifespan | Long-term credibility building (e.g., Diet Prada). | Disposable; accounts frequently deleted after attack. |

The Human Toll of Faceless Mobs

The psychological impact of being targeted by a faceless swarm is severe. A 2023 report by the Anti-Defamation League (ADL) found that 52% of American adults have experienced online harassment in their lifetime, up from 40% in previous years. The report highlights that severe forms of harassment, including stalking and physical threats, are frequently delivered by accounts with no identifiable information. For the victim, the inability to identify the aggressor amplifies the terror. There is no one to sue, no one to report to HR, and no way to know if the threat is coming from a teenager in a basement or a coordinated bot farm abroad.

Platform incentives complicate the removal of these bad actors. Anonymous engagement drives traffic just as effectively as authenticated engagement. While platforms like X (formerly Twitter) and Meta have implemented verification systems, the sheer volume of burner accounts overwhelms automated moderation tools. The result is an environment where the “heckler’s veto” reigns supreme: the loudest, most aggressive voices, frequently amplified by artificial means, dictate the boundaries of acceptable speech.

Doxxing Mechanics: The Intersection of Digital and Physical Threats

The transition from reputational destruction to physical endangerment occurs through doxxing, a tactic that bridges the gap between digital animus and real-world violence. By 2025, doxxing, the malicious publication of private information, had ceased to be a niche hacker tactic and had become a standardized weapon in the arsenal of cancel culture. SafeHome.org data from October 2025 indicates that 11.7 million U.S. adults, or approximately 4% of the population, have been doxxed. The objective is explicit: to remove the target’s anonymity and security, exposing them to harassment, stalking, and “swatting.”

The mechanics of doxxing rely on a sophisticated ecosystem of data aggregation. While social engineering and phishing remain common, perpetrators frequently use commercial data brokers that legally scrape and sell public records. A 2025 analysis revealed that for less than $20, bad actors can purchase a target’s home address, family connections, and financial history. This accessibility allows coordinated mobs to bypass digital blocks and bring the conflict to the victim’s doorstep. The psychological toll is measurable; 77% of Americans reported “privacy fatigue” and heightened anxiety regarding their digital footprint in 2025, while 57% admitted to self-censoring political views specifically to avoid being targeted.

The most volatile manifestation of this trend is “swatting”, the act of making a hoax emergency call to dispatch armed police units to a victim’s home. The Federal Bureau of Investigation (FBI) began formally tracking these incidents in May 2023, logging over 550 confirmed events in the first eight months alone. The K-12 School Shooting Database recorded 853 swatting incidents targeting schools between January 2023 and June 2024, disrupting education and traumatizing communities. These are not pranks; they are attempts to use state power as a remote-control weapon. In December 2023 and January 2024, a wave of high-profile swattings targeted judges, special counsels, and members of Congress, demonstrating that even the most protected individuals are vulnerable to this asymmetric tactic.

| Metric | Data Point | Source/Context |
|---|---|---|
| School Swatting Incidents | 853 (Jan 2023 to June 2024) | K-12 School Shooting Database; represents a doubling of incidents from previous periods. |
| Total Doxxing Victims | 11.7 million (2025) | SafeHome.org; roughly 4% of the U.S. adult population. |
| Federal Threat Prosecutions | +47% (2019 to 2024) | Department of Justice; rise in charged cases involving interstate threats. |
| Self-Censorship Rate | 57% (2025) | Percentage of users avoiding political speech due to fear of doxxing. |

Legislative bodies have struggled to keep pace with the speed of these attacks. While federal law enforcement increased threat prosecutions by 47% between 2019 and 2024, the anonymity of perpetrators, frequently operating via encrypted networks or from foreign jurisdictions, complicates accountability. States have begun to fill the gap; Illinois implemented the Civil Liability for Doxing Act in January 2024, allowing victims to sue for damages, and California followed with similar measures in Assembly Bill 1979. These laws represent a shift from viewing doxxing as a speech problem to recognizing it as a tortious act of endangerment.

The intersection of doxxing and physical threats fundamentally alters the terms of public discourse. When a difference of opinion can result in a heavily armed police response at one’s home, the “marketplace of ideas” collapses into a survivalist calculation. The data from 2023 through 2025 confirms that this is no longer a theoretical risk but a widespread operational reality for journalists, officials, and private citizens alike.

Platform Moderation Policies: Inconsistency and Bias Data

The central mechanism of cancel culture is not public opinion but the opaque moderation systems that govern social media visibility. While platforms publicly claim their enforcement is neutral and safety-oriented, the data reveals a chaotic system defined by high error rates, ideological inconsistency, and automated bias. Between 2015 and 2025, moderation shifted from human-led community management to algorithmic policing, resulting in a structure where enforcement is frequently arbitrary and appeal processes are nonexistent.

The scale of content moderation makes human review mathematically impossible, forcing reliance on imperfect AI. However, the failure rates of these automated systems are high. According to Meta’s own Transparency Report from the second quarter of 2023, Facebook reinstated approximately 33% of the content it had previously removed. On Instagram, the reinstatement rate for the same period approached 40%. These figures indicate that roughly one in every three pieces of content silenced was removed in error. This “shoot first, rectify later” approach places the burden of proof on the user, who frequently faces a labyrinthine appeals process with no guarantee of resolution.

The Reality of Visibility Filtering

For years, social media executives denied the existence of “shadowbanning”, the practice of reducing a user’s visibility without their knowledge. In 2018, Twitter leadership stated explicitly, “We do not shadow ban.” However, the 2022 release of the “Twitter Files” provided documentary evidence contradicting this claim. Internal records revealed the existence of “Visibility Filtering” (VF) tools used to suppress accounts and topics. These tools included “Search Blacklists” and “Trends Blacklists” applied to specific users, such as Stanford epidemiologist Dr. Jay Bhattacharya, preventing their posts from reaching a wider audience regardless of user engagement.

This suppression occurs without user notification, creating a silent penalty that mimics organic disinterest. The data confirms that platforms possess and use granular controls to dial down the reach of specific voices, removing them from the public discourse without the accountability of a formal suspension.

Algorithmic Bias Against Marginalized Groups

While political bias receives the most media attention, automated systems show measurable bias against specific demographics and keywords. A 2019 analysis by Nerd City and Tubefilter tested over 15,000 phrases on YouTube to determine which triggered automatic demonetization. The study found that the word “gay” was consistently demonetized, while terms like “lesbian” and “bisexual” yielded mixed results. In a contradictory twist, the word “abortion” triggered demonetization, while the plural “abortions” did not. This demonstrates that keyword-based blocking is frequently crude and fails to distinguish between hate speech and identity-based discussion.

Further evidence of algorithmic failure appeared in October 2025, when a confidential internal Meta study was reported. The study found that Instagram’s detection tools missed 98.5% of sensitive content related to eating disorders shown to teens. Simultaneously, the algorithm actively pushed harmful content to users; teens reporting body dissatisfaction saw three times more eating disorder-related content than their peers. This data suggests that moderation algorithms are not only failing to remove harmful content but are inadvertently amplifying it to the users most at risk.

Metrics of Inconsistency

The following table summarizes key data points regarding moderation errors and bias across major platforms between 2019 and 2025.

| Platform | Metric | Verified Data Point | Source / Year |
|---|---|---|---|
| Facebook | Automated Error Rate | 33% of removed content was reinstated after review | Meta Transparency Report (2023) |
| Instagram | Automated Error Rate | ~40% of removed content was reinstated after review | Meta Transparency Report (2023) |
| Twitter (X) | Visibility Filtering | Confirmed use of “Trends Blacklist” and “Search Blacklist” | Twitter Files Internal Docs (2022) |
| YouTube | Keyword Bias | “Gay” consistently demonetized; “Abortions” monetized vs “Abortion” demonetized | Tubefilter/Nerd City Study (2019) |
| Instagram | Detection Failure | 98.5% of sensitive eating disorder content missed by AI | Meta Internal Study (2025) |
| Meta | Oversight Reversal | Oversight Board overturned Meta’s decision in ~90% of cases | Meta Oversight Board Report (2023) |

Political Inconsistency and Engagement

The narrative that moderation solely targets conservative voices is complicated by engagement data. A 2024 study reported by Tech Policy Press found that negative news articles were shared 30% to 150% more frequently than neutral content, with right-leaning negative news seeing higher viral spread on Facebook than left-leaning equivalents. Thus, while specific individuals may face “Visibility Filtering,” the algorithms fundamentally reward outrage, regardless of political orientation.

Yet the inconsistency remains the defining feature. The Meta Oversight Board, established to provide independent review, overturned Meta’s original moderation decisions in approximately 90% of the 53 cases it reviewed in 2023. This high reversal rate implies that the internal moderation logic used by Meta is fundamentally flawed and misaligned with its own stated policies. When the supreme adjudicating body of a platform disagrees with the platform’s own enforcement nine times out of ten, the system cannot be described as reliable.

These metrics paint a picture of a moderation infrastructure that is broken by design. It relies on high-volume, low-accuracy AI that disproportionately impacts marginalized groups through keyword blocking, suppresses specific users through opaque filtering tools, and fails to enforce safety standards for vulnerable populations. The result is a digital environment where “cancellation” can be triggered by algorithmic error as easily as by public outcry.

The Rise of De-Banking: Financial Institutions as Moral Arbiters

The digitization of currency has birthed a new enforcement mechanism for social compliance: financial excommunication. Between 2015 and 2025, the banking sector quietly expanded its risk management to include “reputational risk,” a nebulous category that allows financial institutions to sever ties with clients whose political or social views attract controversy. This practice, known as “de-banking,” transforms private ledgers into instruments of public control, cutting individuals off from the modern economy without due process or trial.

The mechanism is not accidental but structural. Financial institutions, under pressure from both internal ESG (Environmental, Social, and Governance) mandates and external regulatory scrutiny, have weaponized their Terms of Service. A 2023 investigation by the UK Treasury Committee revealed a 69% surge in debanking complaints to the Financial Ombudsman Service between 2020 and 2024. In the United States, the phenomenon coalesced into what critics term “Operation Choke Point 2.0,” in which federal regulators allegedly pressured banks to drop clients in disfavored industries, including cryptocurrency and firearms, under the guise of safety and soundness.

The Mechanics of Financial Censorship

The justification for account closures rarely cites specific illegal activity. Instead, banks use vague “Acceptable Use Policies” (AUPs) that prohibit “hate,” “intolerance,” or “misinformation.” In 2022, PayPal updated its AUP to include a provision allowing for fines of up to $2,500 per violation for promoting “misinformation,” a move that triggered an immediate stock sell-off and a subsequent retraction. Yet the infrastructure for such penalties remains in the user agreements of major payment processors.

The most high-profile exposure of this apparatus occurred in July 2023 with the de-banking of British politician Nigel Farage. Documents obtained through a Subject Access Request revealed that Coutts, a subsidiary of NatWest, closed his accounts not for reasons of commercial viability but because his views were “at odds with our position as an inclusive organization.” The dossier explicitly cited his friendship with Donald Trump and his skepticism of net-zero climate policies as “reputational risks.” The scandal forced the resignation of NatWest CEO Alison Rose, yet the practice persists globally.

Verified Instances of Politicized De-Banking (2015 – 2025)

The following table documents confirmed cases where financial access was revoked due to political affiliation, protest activity, or religious expression.

| Date | Target | Institution | Stated Reason / Context | Outcome |
|---|---|---|---|---|
| Jan 2021 | Various Conservative Groups | PayPal / Stripe | De-platforming following January 6th events; citation of “violence” policies. | Permanent bans for specific organizations; policy tightened. |
| Sep 2021 | General Michael Flynn | Chase Bank | Credit cards cancelled due to “reputational risk.” | Cards reinstated following public outcry. |
| Feb 2022 | Freedom Convoy Protesters | Canadian Banks / TD Bank | Invocation of Emergencies Act to freeze 200+ accounts totaling $7.8M CAD. | Accounts unfrozen after emergency order revoked; sparked run on banks. |
| Oct 2022 | Natl. Committee for Religious Freedom | Chase Bank | Account closed without explanation; bank demanded donor list for reinstatement. | Chase later changed code of conduct in 2025 after shareholder pressure. |
| July 2023 | Nigel Farage | Coutts (NatWest) | “Reputational risk” due to conservative political views and Brexit stance. | CEO resigned; independent review found failures in client confidentiality. |

Widespread Risk and Regulatory Backlash

The integration of social credit metrics into banking compliance creates a “fan-out” effect where a single designation by a payment processor can cascade across the entire financial system. When a primary bank exits a client for “reputational” reasons, that client is frequently flagged in inter-bank databases like World-Check, making it nearly impossible to open accounts elsewhere. This was clear in the Canadian “Freedom Convoy” incident of 2022, where the invocation of the Emergencies Act compelled banks to freeze approximately 210 accounts. The action bypassed judicial oversight entirely, deputizing bank managers as arms of state enforcement.

By 2025, the extent of this weaponization forced a policy response. In August 2025, the U.S. President signed Executive Order 14331, “Guaranteeing Fair Banking for All Americans,” which explicitly prohibited regulators from using “reputational risk” as a standalone metric for supervision. Following this, the Office of the Comptroller of the Currency (OCC) released preliminary findings in December 2025 showing that major national banks had indeed maintained non-public policies restricting service to lawful industries, including oil, gas, and firearms, based on activist pressure rather than financial solvency.

“The banking system has mutated from a neutral utility into a choke point for political dissent. When [you] turn off a person’s ability to buy food or pay rent because of their speech, you have not just censorship; you have a digital gulag.” (Testimony before the House Judiciary Committee on the Weaponization of the Federal Government, March 2024.)

The data from 2024 indicates that while high-profile reversals occur, the automated filtering of “high-risk” clients continues. A Cato Institute report released in January 2026 concluded that the majority of de-banking cases stem not from individual bank choices but from indirect government pressure, pointing to a widespread, regulatory-driven effort to cleanse the financial system of ideological outliers.

Academic Freedom Under Fire: Tenure Revocations and Grant Withdrawals

The erosion of academic tenure has ceased to be a theoretical threat and has become an operational reality. Between 2015 and 2025, the method for removing scholars shifted from findings of incompetence by academic peers to reputational demolition through social media mobilization. Data from the Foundation for Individual Rights and Expression (FIRE) confirms that 2025 was the most destructive year on record for American academia, with 525 documented “targeting incidents”, a 158% increase over the previous high of 203 in 2021. The objective is no longer the correction of behavior but the systematic extraction of dissenting voices through economic and professional liquidation.

The Mechanics of Revocation: Process as Punishment

Tenure, once designed as an ironclad protection against external political pressure, has been pierced by a new administrative weapon: the reopening of past conduct to satisfy current outrage. The firing of Princeton classicist Joshua Katz in May 2022 exemplifies this tactic. Katz, who criticized a student activist group in a 2020 Quillette article, was terminated two years later. While the university cited a consensual relationship from 15 years prior, a matter already adjudicated and punished in 2018, the timing revealed the true causality. The 2020 outrage provided the pretext to reopen a closed case, establishing a precedent in which the protection against double jeopardy no longer applies once the accused becomes sufficiently radioactive on social media.

The case of Charles Negy at the University of Central Florida (UCF) further illustrates the “investigation is the punishment” doctrine. Following Twitter posts in 2020 regarding “Black privilege,” UCF solicited complaints against him, leading to his termination in January 2021. Although an arbitrator ordered his reinstatement with back pay in May 2022, ruling that UCF lacked “just cause,” the process successfully removed him from the classroom for nearly two years and subjected him to a campaign of public shaming. The message to other faculty was clear: survival requires silence.

“The process is the punishment. Even if you win, you lose years of your life, your savings, and your reputation. The university knows this. The mob knows this.” (Anonymous Tenured Professor, FIRE Faculty Survey 2024.)

The Economic Chokehold: Grant Withdrawals

While tenure battles garner headlines, a quieter and more insidious method of censorship emerged in 2025: the mass cancellation of federal research grants. In a coordinated move by the federal government, agencies including the National Science Foundation (NSF) and the National Institutes of Health (NIH) terminated approximately 1,400 grants linked to “misinformation” and “diversity, equity, and inclusion” research. This financial decapitation removed nearly $900 million from the academic ecosystem in a single fiscal quarter.

The impact was immediate and specific. At the University of Wisconsin-Madison, a $10 million NIH grant studying the impact of social media on adolescent health was abruptly cancelled in March 2025. The principal investigator, Dr. Ellen Selkie, was informed that the termination was due to a “shift in funding priorities.” This withdrawal did not pause research; it dismantled the infrastructure of inquiry, forcing the layoff of staff and the deletion of datasets. By defunding the study of disinformation, the administration immunized weaponized narratives from academic scrutiny.

The Escalation of Sanctions

The following table tracks the acceleration of scholar targeting incidents and the shifting source of the pressure. Note the sharp pivot in 2025 toward government-led cancellations.

| Year | Total Incidents | Terminations | Primary Driver of Sanction | Notable Outcome |
|---|---|---|---|---|
| 2015 | 24 | 3 | Internal (Students) | Campus protests |
| 2020 | 113 | 28 | Internal (Students/Faculty) | George Floyd protests trigger review of past work |
| 2021 | 203 | 42 | Internal & External Mix | Charles Negy fired (later reinstated) |
| 2022 | 145 | 31 | Administrative | Joshua Katz fired (Princeton) |
| 2025 | 525 | 87 | External (Government) | 1,400+ Federal Grants Cancelled |

The Chilling Effect

The cumulative effect of these revocations and withdrawals is a measurable silence. A 2024 survey by FIRE found that 14% of faculty had been disciplined or threatened with discipline for their speech, while 27% reported self-censoring to avoid administrative retaliation. Even at the highest levels, protection is nonexistent. In 2025, University of Pennsylvania law professor Amy Wax was suspended for a year at half pay and stripped of her named chair following a multi-year campaign regarding her comments on culture and race. The sanction demonstrated that even the most secure contracts can be voided when the reputational cost becomes too high for the institution to bear.

This systematic purging creates an intellectual monoculture. When the cost of a wrong opinion is career annihilation, the only safe output is conformity. The university, once a refuge for the unpopular idea, has surrendered to the logic of the algorithm: engagement drives outrage, and outrage drives personnel decisions.

The Advertiser Boycott Model: Revenue Strangulation Tactics

The most effective method for silencing dissent in the digital age is not legal prohibition but financial asphyxiation. By 2025, the primary theater of cancel culture had shifted from public shaming to the backend architecture of the advertising economy. Activist organizations, realizing that platforms like Facebook and X (formerly Twitter) rely on ad revenue for over 90% of their income, developed a strategy to sever these lifelines. This “revenue strangulation” model bypasses the user entirely, pressuring corporate marketing departments to blacklist specific outlets, creators, or entire platforms under the guise of “brand safety.”

The proof of concept for this tactic was the campaign against Breitbart News in 2017. The activist group Sleeping Giants utilized a “name and shame” strategy, alerting brands on Twitter that their programmatic ads were appearing on the right-wing site. The results were immediate and devastating. Data from MediaRadar confirms that Breitbart lost approximately 90% of its ad revenue between February and May 2017, with the number of advertisers plummeting from 242 to just 26. Former executive Steve Bannon admitted the site remained in “tough financial shape” years later, proving that targeted ad boycotts could cripple a media entity without a single law being passed.

The Escalation: Stop Hate for Profit

In July 2020, this tactic scaled up to target the world’s largest social network. The “Stop Hate for Profit” campaign organized over 1,000 companies, including Unilever, Ford, and Coca-Cola, to pause advertising on Facebook. The coalition demanded stricter policing of “hate speech” and “disinformation.” While Facebook’s stock dipped temporarily, the boycott revealed a limitation in the model: the “long tail” of small business advertisers kept the platform afloat. Facebook’s 2019 revenue of $70 billion was too diversified to be destroyed by a month-long pause from Fortune 500 brands. Yet the political pressure succeeded; Facebook agreed to a civil rights audit and implemented more aggressive content moderation policies to appease the corporate sector.

Total War: X and the GARM Dissolution

The conflict reached its kinetic phase following Elon Musk’s acquisition of Twitter. Between 2023 and 2024, a coordinated exodus of advertisers caused X’s revenue to collapse. Internal documents and third-party analysis showed ad revenue fell approximately 50% year-over-year in 2023, dropping to an estimated $2.5 billion. In the first half of 2024, revenue slid another 24% to $744 million. Major brands like Disney and IBM withdrew spend citing “brand safety” concerns, frequently relying on guidance from the Global Alliance for Responsible Media (GARM), a voluntary cross-industry initiative.

In a counter-offensive that marked a turning point in this asymmetric warfare, X filed an antitrust lawsuit in August 2024 against the World Federation of Advertisers, which ran GARM, and several major advertisers, alleging an illegal conspiracy to withhold billions in revenue. The impact was instant: GARM announced it would discontinue operations just days later, citing the financial drain of legal defense. This event exposed the fragility of the boycott infrastructure when challenged in court.

| Target | Year | Primary Agitator | Est. Revenue Impact | Outcome |
|---|---|---|---|---|
| Breitbart News | 2017 | Sleeping Giants | -90% Ad Revenue | Permanent loss of blue-chip advertisers. |
| YouTube Creators | 2017 | “Adpocalypse” | -50% to -80% (Individual) | Strict automated demonetization policies installed. |
| Facebook (Meta) | 2020 | Stop Hate for Profit | Negligible (Long-term) | Policy changes; stock recovered quickly. |
| Tucker Carlson (Fox) | 2018-2023 | Media Matters / Activists | Millions per month | Show relied on direct-response ads (e.g., MyPillow) until cancellation. |
| X (Twitter) | 2023-2024 | GARM / Various | ~$2.5 Billion Loss (2023) | GARM dissolved after antitrust lawsuit (Aug 2024). |

The Automation of Censorship

Beyond high-profile boycotts, the “revenue strangulation” model has been automated through “brand safety” technology. Companies like DoubleVerify and Integral Ad Science provide tools that automatically block ads from appearing alongside content tagged with keywords like “tragedy,” “conflict,” or “politics.” A 2019 report indicated that legitimate news content regarding war, LGBTQ+ issues, and racism is frequently demonetized because algorithms cannot distinguish between reporting on a crisis and promoting it.

This automation creates a “sanitized web” where independent creators are financially incentivized to avoid difficult topics. During the initial YouTube “Adpocalypse” in 2017, creators saw earnings drop by over 50% overnight as advertisers fled. The result is a soft censorship where economic viability dictates editorial focus, removing controversial discourse from the public square not by force but by bankruptcy.
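The keyword-matching failure described above can be illustrated with a toy filter. This is a minimal sketch under stated assumptions: the blocklist, and the function names `tokenize` and `is_brand_safe`, are hypothetical, and the real classifiers sold by DoubleVerify and Integral Ad Science are proprietary and not reproduced here. It shows only why a purely lexical check cannot separate coverage of a crisis from promotion of it.

```python
import re

# Hypothetical blocklist for illustration only; commercial "brand safety"
# vendors use proprietary category lists and matching logic.
BLOCKED_KEYWORDS = {"tragedy", "conflict", "politics", "war", "racism"}


def tokenize(text: str) -> set[str]:
    """Lower-case the text and split it into alphabetic word tokens."""
    return set(re.findall(r"[a-z]+", text.lower()))


def is_brand_safe(article_text: str, blocklist: set[str] = BLOCKED_KEYWORDS) -> bool:
    """Return False if any blocklisted keyword appears as a whole word.

    The check is purely lexical: it has no notion of whether the text
    reports on a topic, condemns it, or promotes it.
    """
    return tokenize(article_text).isdisjoint(blocklist)


if __name__ == "__main__":
    samples = {
        "news report": "Reporters document civilian deaths in the war and the tragedy facing refugees.",
        "explainer": "How racism in housing policy shaped today's cities.",
        "lifestyle": "Ten easy recipes for a summer picnic.",
    }
    for label, text in samples.items():
        verdict = "monetized" if is_brand_safe(text) else "demonetized"
        print(f"{label}: {verdict}")
```

In this sketch the war report and the housing explainer are both demonetized while the recipe list is not. Matching whole tokens rather than substrings keeps a word like “warm” from tripping the “war” rule, but no choice of tokenization lets a keyword list tell condemnation from endorsement.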

AI and Deepfakes: Fabricating Evidence for Character Assassination

The architecture of cancel culture has shifted from excavation to fabrication. While early cancellation campaigns relied on unearthing decade-old tweets, the weaponization of artificial intelligence in 2024 and 2025 allows bad actors to generate “evidence” of misconduct from scratch. This marks a transition into an era of synthetic guilt, where the burden of proof has been inverted. Accusers no longer need to find a smoking gun; they can simply print one using commercially available software.

The democratization of deepfake technology has been rapid and unregulated. In 2023, creating a convincing video deepfake required technical expertise and significant processing power. By late 2024, “nudify” apps and voice cloning services were widely accessible for less than the price of a cup of coffee. Data from 2025 indicates that the number of deepfake files circulating online surged from 500,000 in 2023 to over 8 million, a 1,500% increase in just two years. The primary application of this technology is not political satire but targeted harassment.

Non-consensual intimate imagery (NCII) remains the most prevalent form of this digital violence. A 2024 analysis confirmed that 98% of deepfake videos online are non-consensual pornography, and that 99% of those depict women. While high-profile cases garner headlines, the technology is frequently deployed against private citizens to inflict reputational ruin.

“The victims are not just celebrities. In October 2023, male students at Westfield High School in New Jersey used AI to generate nude images of 14-year-old female classmates. The images were distributed instantly across social channels, creating a permanent digital footprint of abuse before parents or administrators could intervene.”

The speed at which fabricated content spreads outpaces the truth by orders of magnitude. During the January 2024 attack on Taylor Swift, AI-generated explicit images of the singer amassed 45 million views on X (formerly Twitter) in just 17 hours before the platform removed them. For a private citizen without a legal team or direct line to platform moderators, such an attack is professionally and socially fatal. The “Liar’s Dividend”, a concept where the existence of deepfakes allows guilty parties to dismiss real evidence as fake, has further muddied the waters of accountability.

The Economics of Synthetic Destruction

The barrier to entry for character assassination has collapsed. Underground markets offer “reputation destruction services”. The following table outlines the plummeting costs and rising accessibility of these tools between 2023 and 2025.

| Metric | 2023 Cost/Stat | 2025 Cost/Stat | Change |
|---|---|---|---|
| High-Quality Video Creation | $300 to $20,000 / min | $50 / min | -83% to -99% Cost Reduction |
| Voice Cloning Sample Required | 1-5 Minutes of Audio | 3 Seconds of Audio | 95% to 99% Less Audio |
| Detection Failure Rate | 25% (In-the-wild) | 50% (In-the-wild) | +100% (Failure Rate Doubled) |
| Global Deepfake Files | 500,000 | 8,000,000+ | +1,500% Volume |